NSX-T Design Guide for Avi Vantage

Overview

VMware NSX-T provides an agile software-defined infrastructure to build cloud-native application environments.

NSX-T is focused on providing networking, security, automation, and operational simplicity for emerging application frameworks and architectures that have heterogeneous endpoint environments and technology stacks. NSX-T supports cloud-native applications, bare metal workloads, multi-hypervisor environments, public clouds, and multiple clouds.

For more information about VMware NSX-T, refer to the VMware NSX-T documentation.

This guide describes the design details of the Avi - NSX-T integration.

Architecture

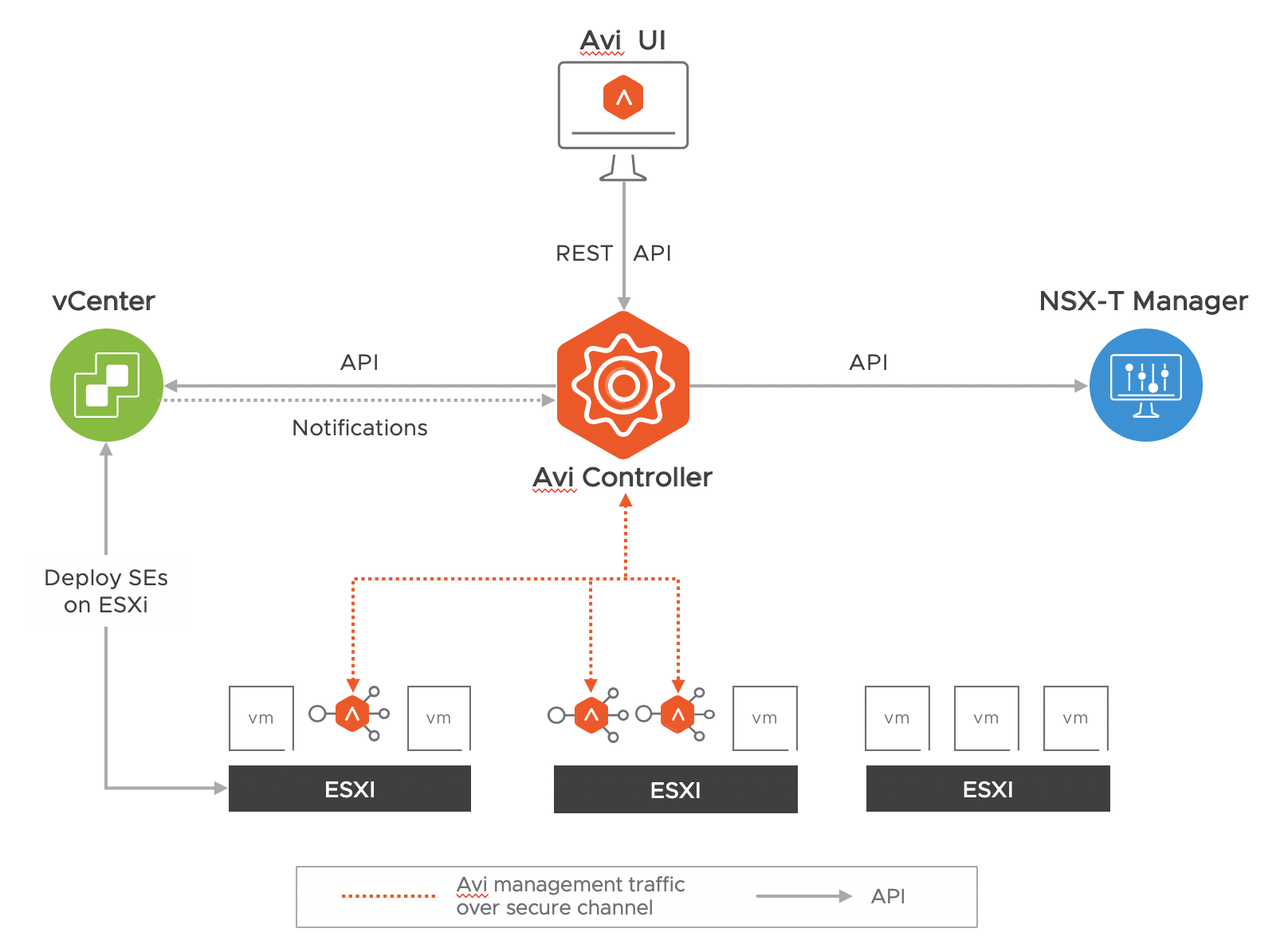

The solution comprises the Avi Controller, which uses APIs to interface with the NSX-T Manager and vCenter to discover the infrastructure. The Controller also manages the lifecycle and network configuration of the Service Engines (SEs). The Avi Controller provides the control plane and management console for users to configure load balancing for their applications, and the Service Engines provide a distributed and elastic load-balancing fabric.

Note: The Avi Controller uploads the SE OVA image to the content library on vCenter and uses vCenter APIs to deploy the SE VMs. The content library must be created by the vCenter admin before the cloud is configured.

Protocol Port Requirements

The table below shows the protocols and ports required for the integration:

| Source | Destination | Protocol | Port |
|---|---|---|---|
| Avi Controller management IP and cluster VIP | NSX-T Manager management IP | TCP | 443 |
| Avi Controller management IP and cluster VIP | vCenter Server management IP | TCP | 443 |

For more information about the protocols and ports used by the Avi Controller and Service Engines, refer to the Protocol Ports Used by Avi Vantage for Management Communication article.

If the NSX-T gateway firewall is enabled, NSX admins must manage the NSX edge policies.

Supportability

For the supported NSX-T and Avi Vantage versions, refer to the NSX-T - Avi Support Matrix.

Load Balancer Topologies

Avi currently supports load balancing only in NSX-T transport zones of type overlay. In NSX-T environments, the SE supports only the one-arm deployment mode; that is, for a virtual service, client-to-VIP traffic and SE-to-backend-server traffic both use the same SE data interface. An SE VM has nine data interfaces, so it can connect to multiple logical segments, but each interface is in a different VRF and is therefore isolated from all other interfaces.
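To make the one-arm model and the nine-interface limit concrete, here is a small Python model of an SE's data NICs. It is an illustration only, not Avi's internal data structure: each attached segment gets a VRF of its own (the `vrf-<segment>` naming is hypothetical), and a virtual service uses the single NIC on its VIP segment for both the client-to-VIP and SE-to-server legs.

```python
from __future__ import annotations

from dataclasses import dataclass, field

MAX_DATA_INTERFACES = 9  # data interfaces per SE VM, as stated in this guide


@dataclass
class ServiceEngine:
    name: str
    # data NIC index -> (logical segment, VRF); the VRF naming is hypothetical
    data_nics: dict[int, tuple[str, str]] = field(default_factory=dict)

    def attach(self, segment: str) -> int:
        """Connect the next free data NIC to a segment, in a VRF of its own."""
        if len(self.data_nics) >= MAX_DATA_INTERFACES:
            raise RuntimeError(
                f"{self.name}: all {MAX_DATA_INTERFACES} data interfaces are in use")
        nic = len(self.data_nics)
        self.data_nics[nic] = (segment, f"vrf-{segment}")
        return nic

    def one_arm_nic(self, vip_segment: str) -> int:
        """One-arm mode: client-to-VIP and SE-to-server traffic share this NIC."""
        for nic, (segment, _vrf) in self.data_nics.items():
            if segment == vip_segment:
                return nic
        raise LookupError(f"{self.name} has no data NIC on {vip_segment}")
```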

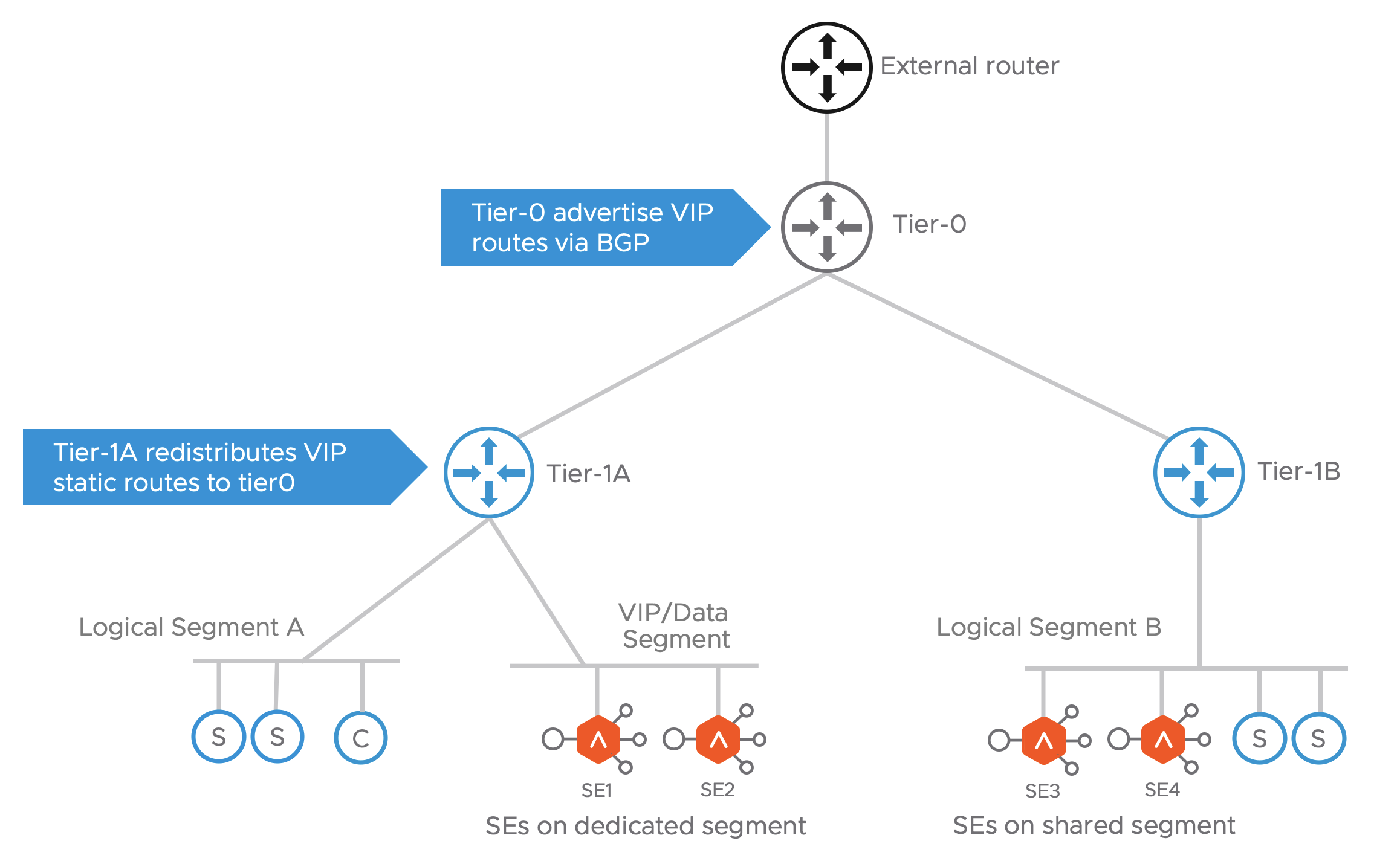

As shown in the diagram, two topologies are possible for SE deployment:

- SEs on a dedicated logical segment:
  - Allows IP address assignment for SE interfaces to be managed separately.
  - In the current version, this segment must be created in NSX-T before it is added to the cloud configuration on Avi.

- SEs on a shared logical segment:
  - SE interfaces share the same address space as the server VMs on the same logical segment.

Note: Only logical segments connected to a tier-1 router are supported. The cloud automation for the NSX-T integration does not support placing SEs on logical segments directly connected to tier-0 routers.

The following topology is not supported:

| SE Data | VIP Subnet | Client Subnet |
|---|---|---|
| Seg A-T1a-T0 | Seg B-T1b-T0 | Seg B-T1b-T0 |

The VIP static route is configured on T1a. Therefore, only T1a and T0 can proxy ARP for the VIP. T1b does not have that static route, so it cannot proxy ARP for the VIP.
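The rule behind this unsupported topology can be expressed as a small Python check. It is a sketch that assumes the proxy ARP behavior described later in this guide (Avi Vantage 20.1.3 with NSX-T 3.1.0): a client sends ARP for the VIP only when the VIP falls in its own segment's subnet, and that ARP can be answered only by the SE itself (if attached to that segment) or by the segment's tier-1, provided that tier-1 holds the VIP static route. The function name and the segment-to-tier-1 mapping are hypothetical.

```python
from __future__ import annotations


def vip_reachable_via_arp(client_segment: str, vip_segment: str | None,
                          se_data_segment: str, tier1_of: dict[str, str]) -> bool:
    """Return True if the client can reach the VIP under the proxy ARP rule.

    vip_segment is the segment whose subnet contains the VIP (None when the
    VIP comes from an otherwise unused subnet). tier1_of maps each segment
    to the tier-1 it is connected to.
    """
    if vip_segment is None or client_segment != vip_segment:
        # The VIP is off-link for the client: the request goes to the
        # default gateway and is routed, so no ARP for the VIP is needed.
        return True
    if vip_segment == se_data_segment:
        # The SE is attached to the client's segment and answers ARP itself.
        return True
    # Only the tier-1 holding the VIP static route (the SE data segment's
    # tier-1) can proxy ARP for the VIP on the client's segment.
    return tier1_of[vip_segment] == tier1_of[se_data_segment]
```

With the table above, `vip_reachable_via_arp("Seg B", "Seg B", "Seg A", {"Seg A": "T1a", "Seg B": "T1b"})` returns `False`, because T1b does not hold the VIP route.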

VIP Networking

For the virtual services placed on these SEs, the VIP can belong to the subnet of the logical segment the SE is connected to or to any other unused subnet. Once a virtual service is placed on an SE, the Avi Controller updates the VIP static routes on the tier-1 router associated with the logical segment selected for the virtual service placement. The NSX admin is expected to configure the tier-1 router to advertise these static routes to tier-0. For north-south reachability of the VIP, the admin should configure tier-0 to advertise the VIP routes to the external router via BGP.

Note: The number of VIPs that can be configured per tier-1 is limited by the maximum number of static routes that NSX Manager supports on the respective tier-1 gateway. For information on the logical routing limits, refer to NSX-T Data Center Configuration Limits.
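As a rough planning aid, the Python sketch below counts the VIP host routes that the Controller would add on each tier-1 and flags gateways that exceed a static-route limit. The `limit` value is a placeholder that you would take from the NSX-T Data Center Configuration Limits for your version, and counting a scaled-out VIP as a single prefix (one route with several next hops) is an assumption of this sketch.

```python
from __future__ import annotations

import ipaddress
from collections import Counter
from typing import Iterable


def vip_route(vip: str) -> str:
    """Host route for a VIP, e.g. '10.1.1.5' -> '10.1.1.5/32'."""
    return str(ipaddress.ip_network(vip))


def tier1s_over_limit(placements: Iterable[tuple[str, str]], limit: int,
                      existing: dict[str, int] | None = None) -> dict[str, int]:
    """Return {tier1: route_count} for tier-1s whose static routes exceed limit.

    placements holds (tier1, vip) pairs; duplicates (for example, the same
    VIP scaled out over several SEs) count as one prefix. existing holds the
    number of static routes already configured on each tier-1.
    """
    usage: Counter[str] = Counter(existing or {})
    seen: set[tuple[str, str]] = set()
    for tier1, vip in placements:
        key = (tier1, vip_route(vip))
        if key not in seen:
            seen.add(key)
            usage[tier1] += 1
    return {t1: n for t1, n in usage.items() if n > limit}
```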

There are two traffic scenarios, as discussed below:

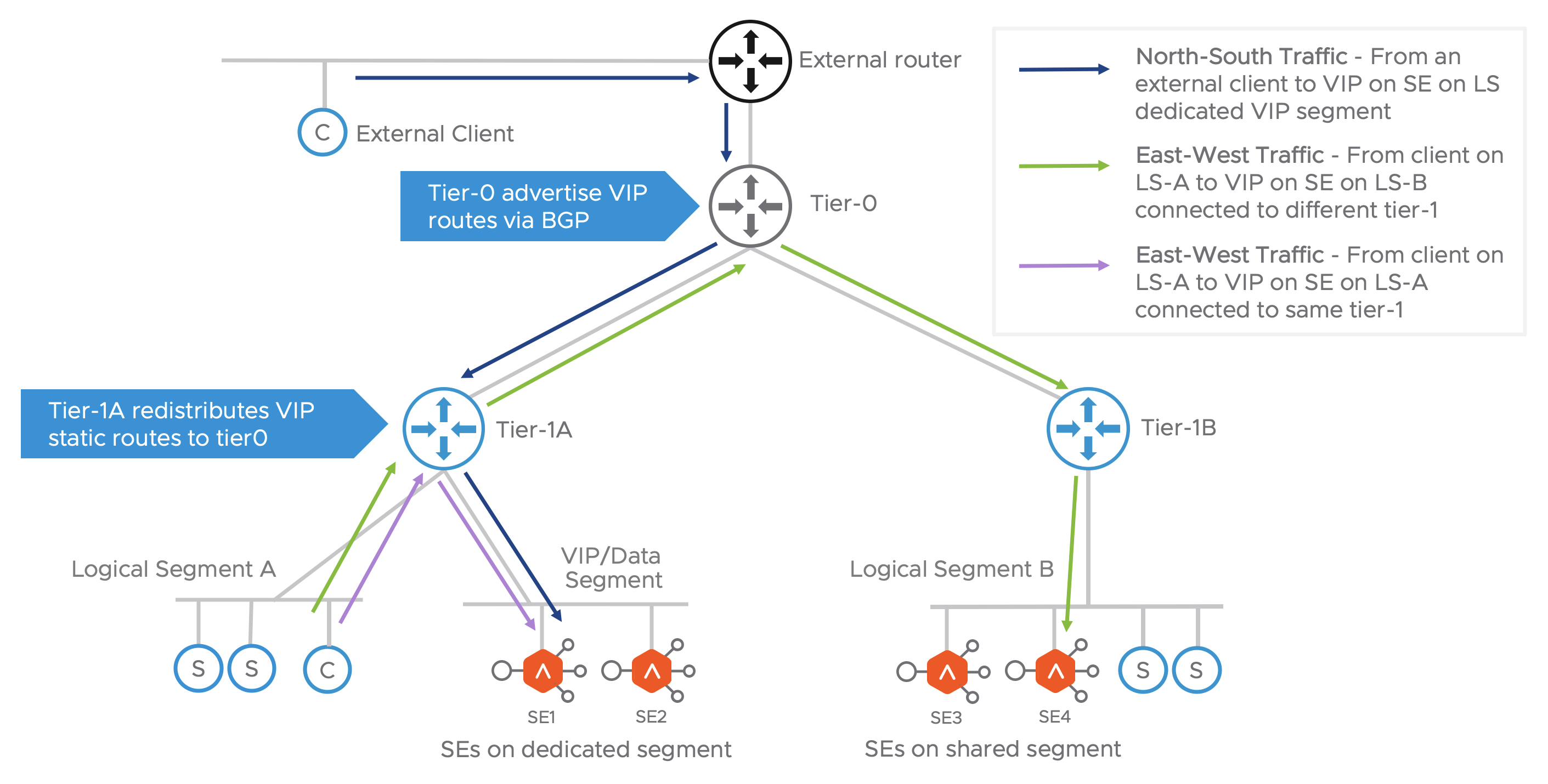

North-South Traffic

As shown in the figure above, when an external client sends a request to the VIP, the request is routed from the external router to tier-0, which forwards it to the correct tier-1, which in turn routes it to the VIP on the SE.

East-West Traffic

For a client on the NSX overlay trying to reach a VIP, the request is sent to its default gateway on the directly connected tier-1. Depending on where the VIP is placed, there are two sub-scenarios:

- VIP on an SE connected to a different tier-1: The traffic is routed to tier-0, which forwards it to the correct tier-1 router. That tier-1 then routes the traffic to the SE.

- VIP on an SE connected to the same tier-1: The tier-1 routes the traffic directly to the SE.
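The north-south and east-west scenarios can be summarized as a path function. This Python sketch is purely illustrative: gateway names are hypothetical strings, and it assumes the tier-1 advertises the VIP static routes to tier-0 as described in VIP Networking.

```python
from __future__ import annotations


def vip_path(client_tier1: str | None, vip_tier1: str,
             tier0: str = "tier-0") -> list[str]:
    """Hops a request takes to reach a VIP.

    client_tier1 is None for an external (north-south) client; otherwise it
    is the tier-1 acting as the client's default gateway (east-west).
    """
    if client_tier1 is None:
        return ["external-router", tier0, vip_tier1, "SE"]
    if client_tier1 == vip_tier1:
        # Same tier-1: routed directly to the SE, tier-0 is not involved.
        return [vip_tier1, "SE"]
    return [client_tier1, tier0, vip_tier1, "SE"]
```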

HA Modes and Scale Out

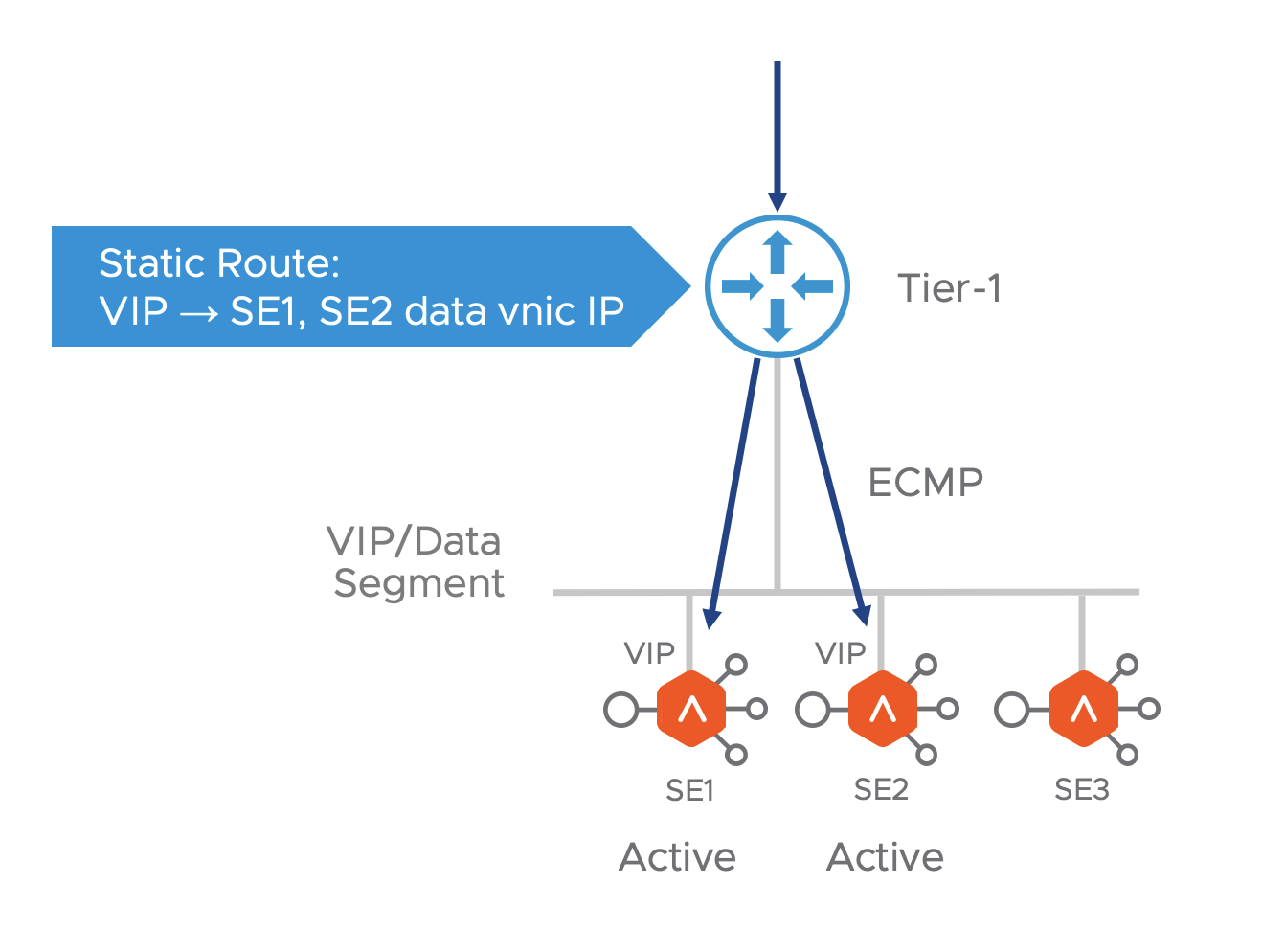

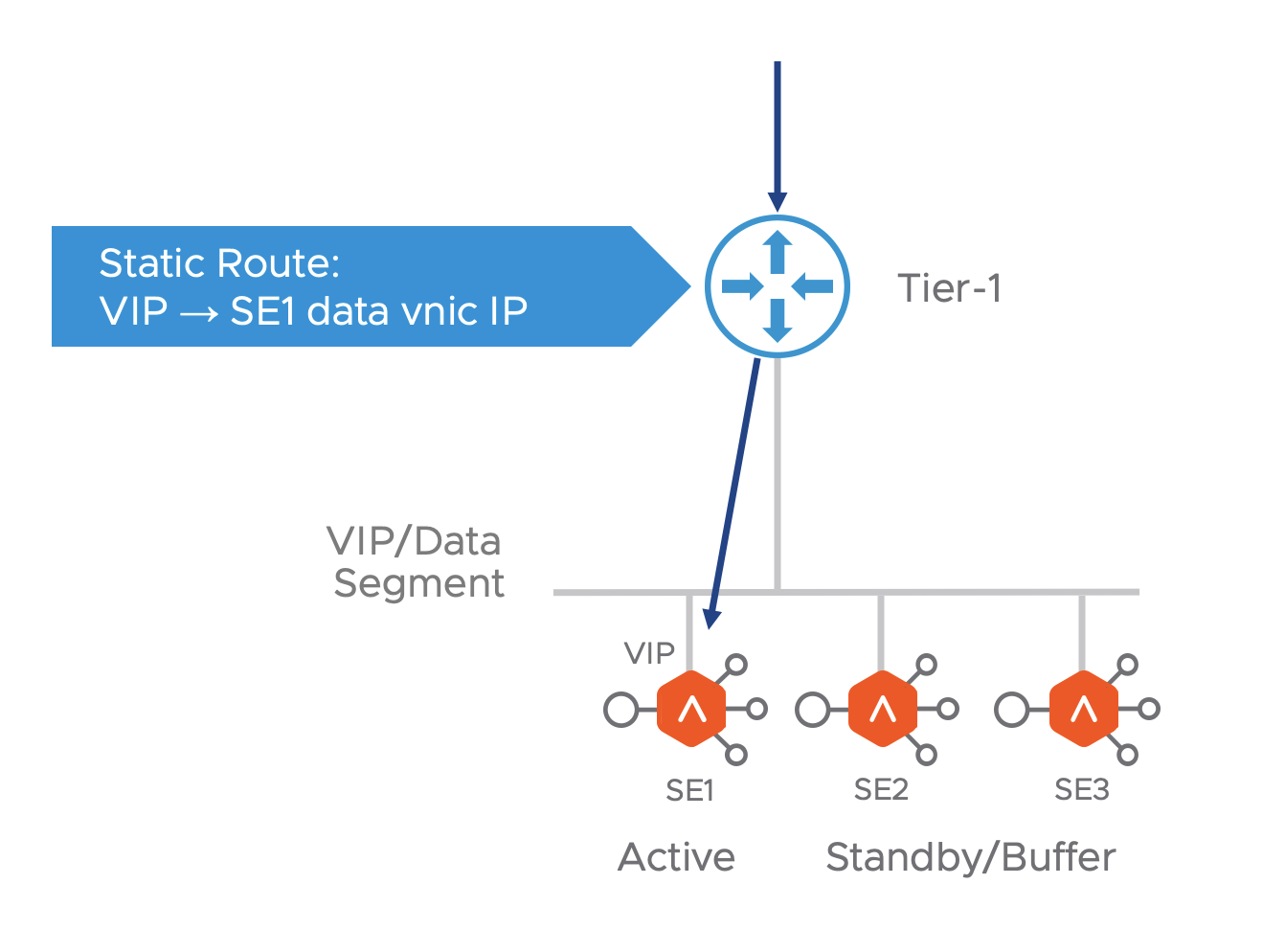

All HA modes (Active-Active, M+N, and Active-Standby) are supported in NSX-T environments. When a VIP is placed on an SE, the Avi Controller adds a static route for it on the tier-1 router, with the SE’s data interface as the next hop.

In Active-Active and M+N HA modes, when the virtual service is scaled out, the Avi Controller adds equal-cost next hops pointing to each SE on which the virtual service is placed. The tier-1 spreads incoming connections across these SEs using Equal Cost Multi-Pathing (ECMP).

In Active-Standby mode, where only one SE is active, or in M+N mode when the virtual service is not scaled out, the Avi Controller programs the route to the active SE only. ECMP is not required in this case.
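The route programming rules for the HA modes can be sketched in Python as follows. The mode labels and the `SePlacement` type are hypothetical; the function only returns the next hops the Controller would program for a VIP's static route on the tier-1, and more than one next hop means the tier-1 load-balances with ECMP.

```python
from __future__ import annotations

import ipaddress
from dataclasses import dataclass

HA_MODES = {"active-active", "m+n", "active-standby"}  # hypothetical labels


@dataclass(frozen=True)
class SePlacement:
    se_name: str
    data_ip: str   # SE data interface IP, used as the static route next hop
    active: bool   # False only for the standby SE in Active-Standby mode


def vip_next_hops(ha_mode: str, placements: list[SePlacement]) -> list[str]:
    """Next hops for a VIP's static route on the tier-1.

    Active-Active and M+N: every SE the virtual service is placed on is
    active, so a scaled-out virtual service gets one equal-cost next hop per
    SE. Active-Standby: only the active SE is programmed.
    """
    if ha_mode not in HA_MODES:
        raise ValueError(f"unknown HA mode: {ha_mode}")
    hops = {p.data_ip for p in placements if p.active}
    return sorted(hops, key=ipaddress.ip_address)
```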

Management Network

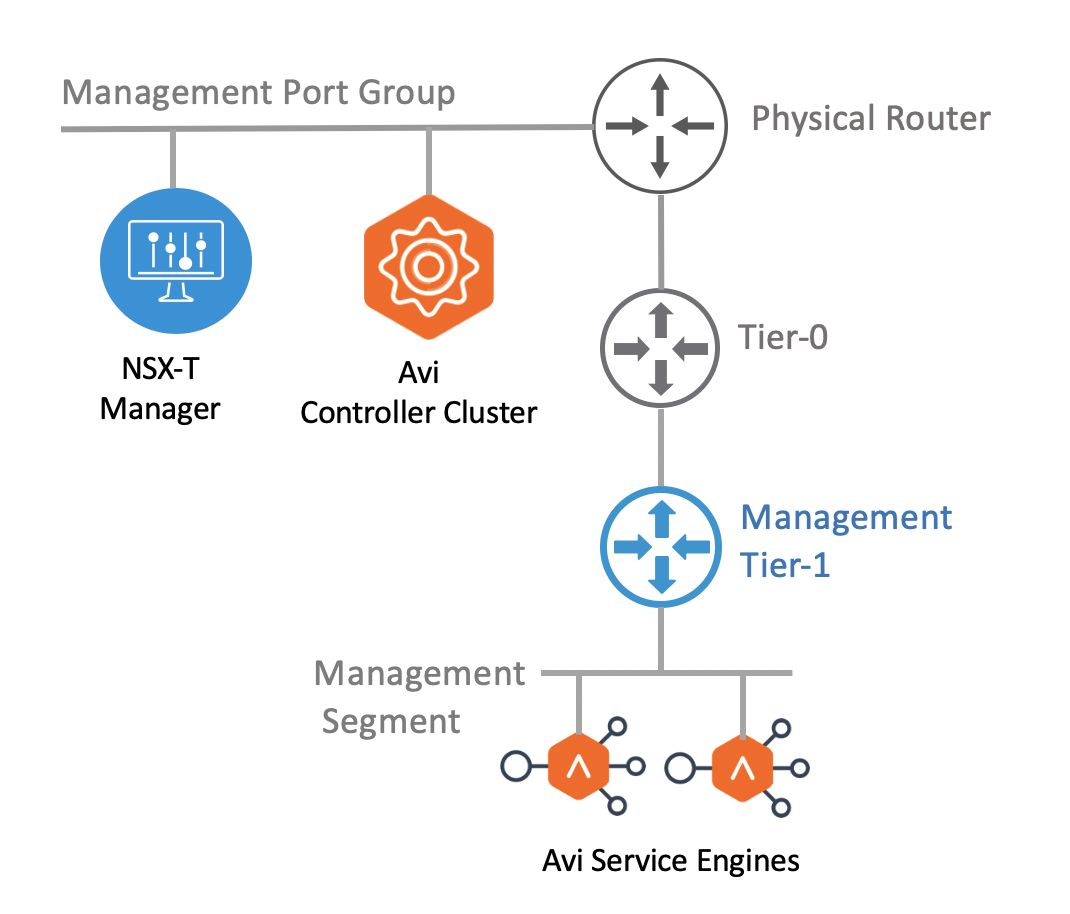

The Avi Controller cluster VMs should be deployed adjacent to the NSX-T Manager, connected to the management port group. It is recommended to have a dedicated tier-1 gateway and logical segment for the Avi SE management.

The first network interface of every SE VM is connected to the management segment. The management IP addresses of the SEs must be reachable from the Avi Controller. To achieve this, configure the SE management tier-1 gateway to advertise “All Connected Segments & Service Ports” and “All Static Routes” to the tier-0. The tier-0 must advertise the learned routes to the external router using BGP.

NSX-T Cloud Configuration Model

The point of integration between Avi and any infrastructure is called a cloud. For an NSX-T environment, an NSX-T cloud has to be configured. For the configuration steps, refer to Avi Integration with NSX-T.

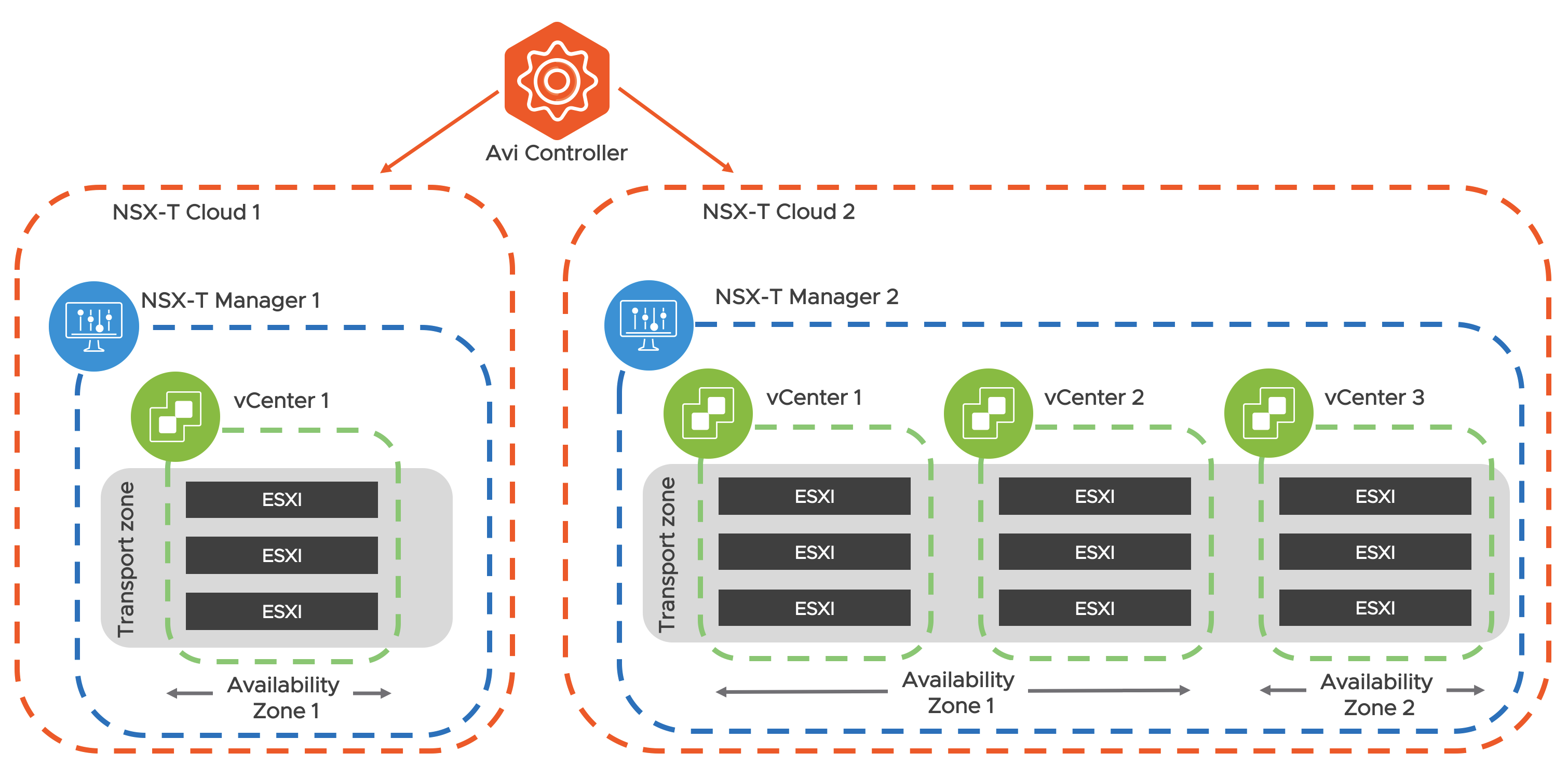

An NSX-T cloud is defined by an NSX-T Manager and a transport zone. If an NSX-T Manager has multiple transport zones, each one maps to a separate NSX-T cloud. Similarly, to manage load balancing for multiple NSX-T environments, each NSX-T Manager maps to a separate NSX-T cloud.
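The mapping from NSX-T managers and transport zones to NSX-T clouds can be sketched in Python as a planning helper. It assumes each cloud is identified by one (NSX-T Manager, overlay transport zone) pair, skips non-overlay zones because only overlay transport zones are supported, and enforces the maximum of five NSX-T clouds stated for Avi Vantage 20.1.3 in the Limitations section. The manager and zone names and the `"overlay"`/`"vlan"` type labels are hypothetical.

```python
from __future__ import annotations

MAX_NSXT_CLOUDS = 5  # Avi Vantage 20.1.3 limit from the Limitations section


def plan_nsxt_clouds(
        managers: dict[str, list[tuple[str, str]]]) -> list[tuple[str, str]]:
    """Return one (NSX-T manager, transport zone) pair per NSX-T cloud.

    managers maps each NSX-T manager to its (transport zone, type) pairs.
    Only overlay transport zones are eligible.
    """
    clouds = [(mgr, tz)
              for mgr in sorted(managers)
              for tz, tz_type in sorted(managers[mgr])
              if tz_type == "overlay"]
    if len(clouds) > MAX_NSXT_CLOUDS:
        raise ValueError(
            f"{len(clouds)} clouds needed, at most {MAX_NSXT_CLOUDS} supported")
    return clouds
```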

NSX-T cloud also requires the following configurations:

vCenter Objects

Each NSX-T cloud can have one or more vCenters associated with it. vCenter objects must be configured on Avi for every vCenter compute manager added to NSX-T that has ESXi hosts belonging to the transport zone configured in the NSX-T cloud.

Select a content library to which the Avi Controller will upload the SE OVA.

Network Configurations

The NSX-T cloud requires two types of network configurations:

Management Network: The logical segment to be used for management connectivity of the SE VMs has to be selected.

VIP Placement Network: The Avi Controller does not sync all NSX-T logical segments. Instead, a tier-1 router and one connected logical segment must be selected as the VIP network. Only one VIP network is allowed per tier-1 router; repeat the selection for each tier-1 router if there are several. Only the selected VIP logical segments are synced as network objects on Avi.

VRF Contexts

Avi automatically creates a VRF context for every tier-1 router selected during VIP network configuration. This is necessary because logical segments connected to different tier-1s can have the same subnet. A VRF has to be selected while creating a virtual service so that the VIP is placed on the correct logical segment and the VIP routes are configured on the correct tier-1 router.
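The VIP network and VRF rules above can be modeled in Python to show why the VRF matters: two tier-1s may use the same subnet, so the subnet alone cannot tell the Controller which tier-1 should receive the VIP route. This is a hypothetical model, not the Avi API. It keys each VRF by its tier-1 name, rejects a second VIP network on the same tier-1, and reports whether the VIP falls inside the VIP segment's subnet (a VIP from another unused subnet is also allowed).

```python
from __future__ import annotations

import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class VipNetwork:
    tier1: str    # tier-1 router selected for VIP placement
    segment: str  # the one connected logical segment selected as VIP network
    subnet: str   # subnet of that segment, e.g. "192.168.10.0/24"


def build_vrf_contexts(vip_networks: list[VipNetwork]) -> dict[str, VipNetwork]:
    """Create one VRF per selected tier-1, enforcing one VIP network per tier-1."""
    vrfs: dict[str, VipNetwork] = {}
    for net in vip_networks:
        if net.tier1 in vrfs:
            raise ValueError(
                f"{net.tier1} already has VIP network {vrfs[net.tier1].segment}")
        ipaddress.ip_network(net.subnet)  # raises ValueError if malformed
        vrfs[net.tier1] = net
    return vrfs


def resolve_vip_placement(vrfs: dict[str, VipNetwork], vrf: str,
                          vip: str) -> tuple[str, str, bool]:
    """Return (segment, tier-1 for the VIP route, VIP inside segment subnet?)."""
    net = vrfs[vrf]
    in_subnet = ipaddress.ip_address(vip) in ipaddress.ip_network(net.subnet)
    return net.segment, net.tier1, in_subnet
```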

Pool Configuration

For a given virtual service, the logical segment of the pool servers and the logical segment of the VIP must belong to the same tier-1 router. If the VIP and the pool are connected to different tier-1 routers, the traffic may pass through the tier-0, and hence through the NSX-T edge (depending on the NSX-T services configured by the admin). This reduces data path performance and must be avoided.
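A quick pre-check for this recommendation might look like the following Python sketch. It flags pool members whose logical segment is attached to a different tier-1 than the VIP's segment; the function name and the segment-to-tier-1 mapping are hypothetical inputs that you would gather from NSX-T.

```python
from __future__ import annotations


def pool_members_off_vip_tier1(vip_segment: str, pool_members: dict[str, str],
                               tier1_of: dict[str, str]) -> list[str]:
    """Return pool member IPs whose segment is on a different tier-1 than the VIP.

    pool_members maps server IP -> logical segment; tier1_of maps
    segment -> tier-1. Traffic to the returned members may pass through
    tier-0 and the NSX-T edge.
    """
    vip_tier1 = tier1_of[vip_segment]
    return sorted(ip for ip, seg in pool_members.items()
                  if tier1_of[seg] != vip_tier1)
```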

The pool members can be configured in two ways:

- NSGroup: Servers can be added to an NSGroup in NSX-T Manager, and the same NSGroup can be selected in the pool configuration. All the IP addresses that the NSGroup resolves to are added as pool members on Avi. Avi also polls the NSGroup for changes (every 5 minutes by default), so if the NSGroup has dynamic membership or a member is manually added or removed, the Avi pool eventually syncs to the new server IPs.

- IP Addresses: A static list of IP addresses can be specified as pool members.
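The NSGroup polling behavior amounts to a periodic set difference between the pool and the IPs that the NSGroup currently resolves to. The Python sketch below illustrates that reconciliation; `fetch_ips` stands in for a hypothetical call that resolves the NSGroup, and the injectable `sleep` makes the 5-minute default interval testable. It is not Avi's implementation.

```python
from __future__ import annotations

import time
from typing import Callable, Iterable

DEFAULT_POLL_INTERVAL_S = 300  # default NSGroup poll interval from the guide


def sync_pool(pool_ips: set[str],
              nsgroup_ips: Iterable[str]) -> tuple[set[str], set[str], set[str]]:
    """One poll: return (new pool, added IPs, removed IPs)."""
    resolved = set(nsgroup_ips)
    return resolved, resolved - pool_ips, pool_ips - resolved


def poll_nsgroup(fetch_ips: Callable[[], Iterable[str]], pool_ips: set[str],
                 cycles: int, interval_s: float = DEFAULT_POLL_INTERVAL_S,
                 sleep: Callable[[float], None] = time.sleep) -> set[str]:
    """Run a fixed number of poll cycles and return the final pool."""
    for cycle in range(cycles):
        if cycle:
            sleep(interval_s)  # wait between polls, not before the first one
        pool_ips, added, removed = sync_pool(pool_ips, fetch_ips())
        if added or removed:
            print(f"poll {cycle}: added {sorted(added)}, removed {sorted(removed)}")
    return pool_ips
```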

Security Automation

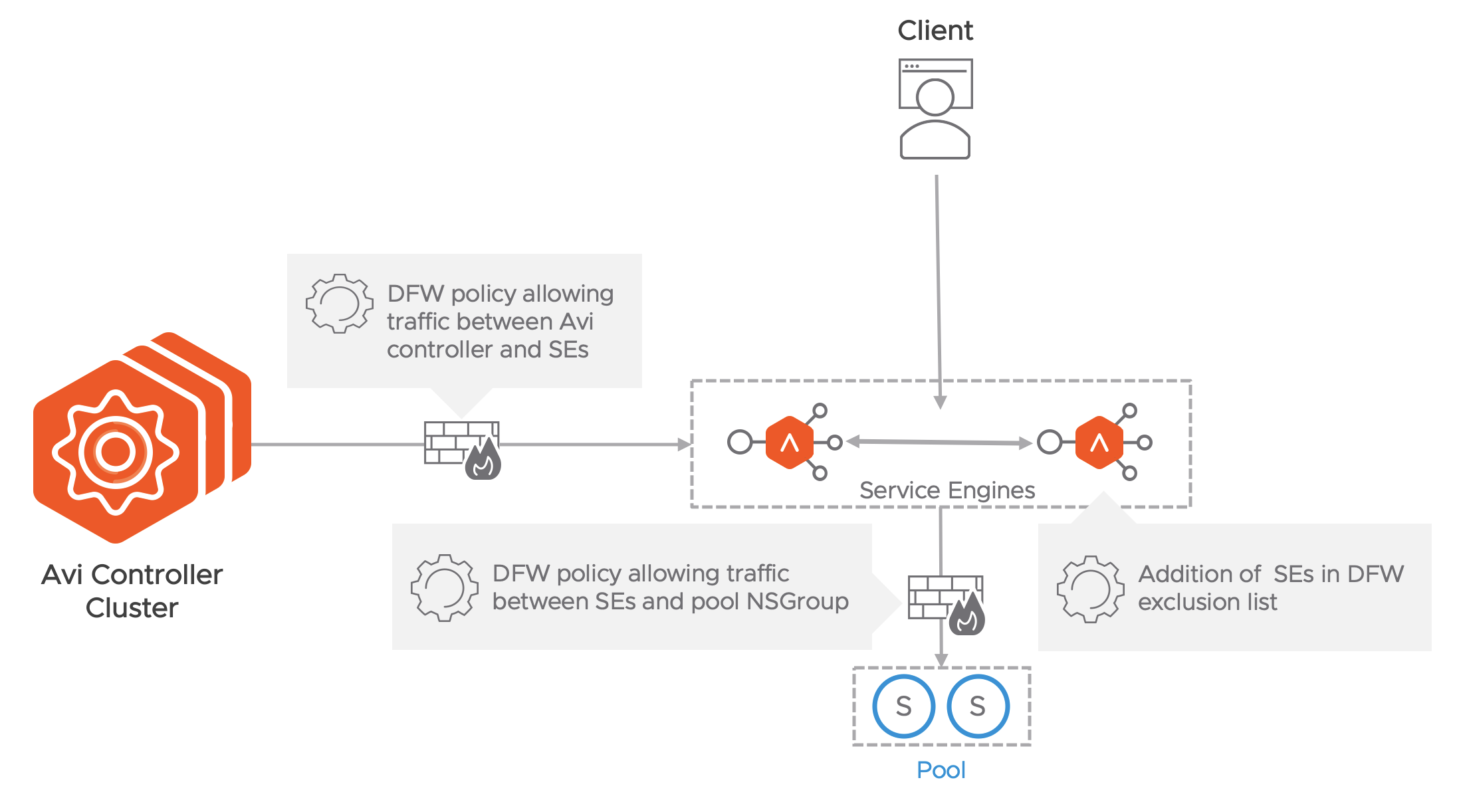

Creating NSGroups for SEs and Avi Controller management IPs is automated by the NSX-T cloud.

Execute the following operations manually:

- Add the SE NSGroup to the exclusion list. This is required to allow cross-SE traffic and to prevent packet drops by the stateful DFW when application traffic is routed asymmetrically.

- Create a DFW policy to allow management traffic from the SE NSGroup to the Avi Controller NSGroup. Note: The SE initiates the TCP connection to the Controller management IP.

- For every virtual service configured on Avi, create a DFW policy to allow traffic from the SE NSGroup to the NSGroup/IP group configured as the pool.

Note: The NSX-T cloud connector creates and manages the NSGroups for the different Avi objects, but DFW rule creation is not currently supported. Add the Service Engine NSGroup to the exclusion list before creating virtual services.

Since the SEs are in the exclusion list, the DFW cannot be enforced on client-to-VIP traffic. This traffic can be secured by configuring network security policies on the virtual service on Avi. If the NSX-T gateway firewall is enabled, edge policies must be manually configured to allow VIP traffic from external clients.

If Preserve Client IP Address is enabled for a virtual service, the client IP is sent to the server. This implies:

- The DFW rule configured for backend pool connectivity must allow the client IP to pass through.

- Health monitor traffic is sent from the SE interface to the pool, so it must also be allowed by the DFW rule.
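Because the DFW rules must be created manually, it can help to generate a checklist from the Avi configuration. The Python sketch below produces one as plain data; the `DfwRule` and `VirtualServiceSpec` types, rule names, and group names are hypothetical and do not correspond to NSX-T API objects. It puts the SE NSGroup in the exclusion list, adds the SE-to-Controller management rule, and adds one SE-to-pool rule per virtual service, widening the sources to the expected client ranges when Preserve Client IP Address is enabled (health monitors still originate from the SE, so the SE group stays in the rule).

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class VirtualServiceSpec:
    name: str
    pool_group: str                       # NSGroup/IP group configured as the pool
    preserve_client_ip: bool = False
    client_sources: tuple[str, ...] = ()  # expected client groups or CIDRs


@dataclass(frozen=True)
class DfwRule:
    name: str
    sources: tuple[str, ...]
    destination: str


def manual_security_plan(se_group: str, controller_group: str,
                         services: list[VirtualServiceSpec]
                         ) -> tuple[list[str], list[DfwRule]]:
    """Return (exclusion list, DFW rules) to configure by hand in NSX-T."""
    # Configure the exclusion list before creating virtual services.
    exclusion_list = [se_group]
    # The SE opens the TCP connection to the Controller management IP.
    rules = [DfwRule("se-to-controller-mgmt", (se_group,), controller_group)]
    for vs in services:
        # SE interface: proxied backend traffic and health monitors.
        sources: tuple[str, ...] = (se_group,)
        if vs.preserve_client_ip:
            # The server sees the real client IP, so allow it as well.
            sources += vs.client_sources
        rules.append(DfwRule(f"se-to-pool-{vs.name}", sources, vs.pool_group))
    return exclusion_list, rules
```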

Proxy Arp for VIP on Tier-1 and Tier-0

Prior to Avi Vantage version 20.1.3, if the client and the VIP were in the same segment and subnet, ARP (Address Resolution Protocol) did not work because the client and the VIP were in the same L2 domain, so no traffic flowed.

Starting with Avi Vantage version 20.1.3, along with NSX-T 3.1.0, the proxy ARP functionality is available on both Tier-0 and Tier-1 gateways for Avi LB VIPs.

Consider the following use cases:

- Proxy ARP on Tier-0 Gateway: The client and the VIP are in the same segment, but the client reaches the VIP through the tier-0. The tier-0 proxies ARP for the VIP to the external clients.

- Proxy ARP on Tier-1 Gateway: If the client is local (on the same tier-1), the tier-1 proxies ARP for the VIP. If the SE is also attached to that segment, both the SE and the tier-1 respond.

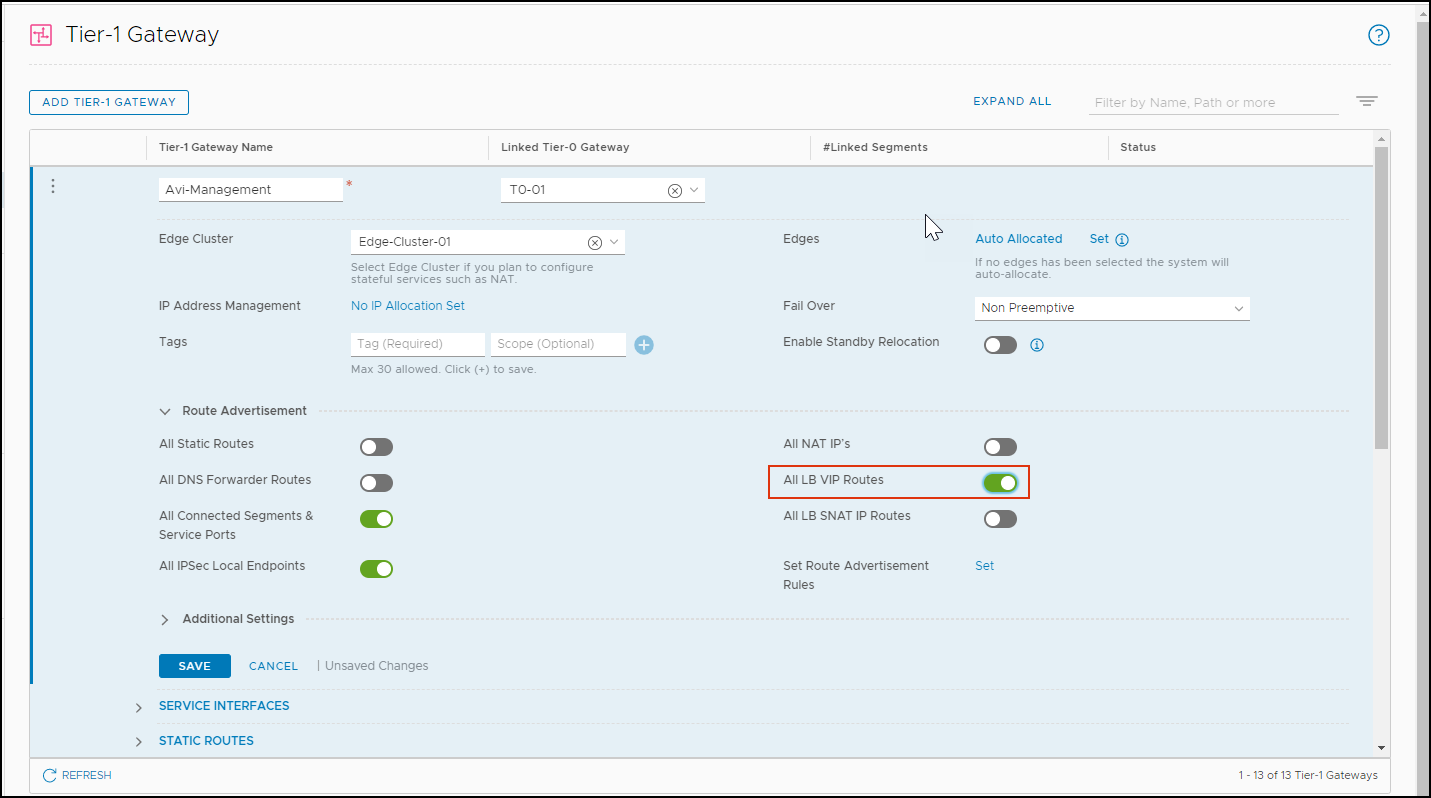

For tier-0 to proxy ARP for the VIP, enable All LB VIP Routes on the tier-1 gateway:

- From the NSX-T Manager, navigate to Networking > Tier-1 Gateway.

- Add a tier-1 gateway or edit an existing one.

- Under Route Advertisement, enable All LB VIP Routes.

- Click Save.
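If you prefer to automate the same change, the following Python sketch builds a request body for the NSX-T Policy API. It assumes the Tier-1 object's `route_advertisement_types` field, in which `TIER1_LB_VIP` corresponds to All LB VIP Routes, and the `/policy/api/v1/infra/tier-1s/<tier-1-id>` path; verify both against the API reference for your NSX-T version. Because a PATCH carrying this field is assumed to replace the whole list, the sketch merges the new type into the types already enabled. It only builds the payload and sends nothing.

```python
from __future__ import annotations

# Assumed NSX-T Policy API value for the "All LB VIP Routes" UI option.
LB_VIP_ADVERTISEMENT = "TIER1_LB_VIP"


def tier1_policy_path(tier1_id: str) -> str:
    """Assumed Policy API path of a tier-1 gateway object."""
    return f"/policy/api/v1/infra/tier-1s/{tier1_id}"


def route_advertisement_patch(current_types: list[str]) -> dict[str, list[str]]:
    """Build a PATCH body that enables LB VIP route advertisement.

    Existing advertisement types are kept because the list is assumed to be
    replaced as a whole by the PATCH.
    """
    merged = list(current_types)
    if LB_VIP_ADVERTISEMENT not in merged:
        merged.append(LB_VIP_ADVERTISEMENT)
    return {"route_advertisement_types": merged}
```

For example, a tier-1 that already advertises connected segments and static routes would receive `{"route_advertisement_types": ["TIER1_CONNECTED", "TIER1_STATIC_ROUTES", "TIER1_LB_VIP"]}`.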

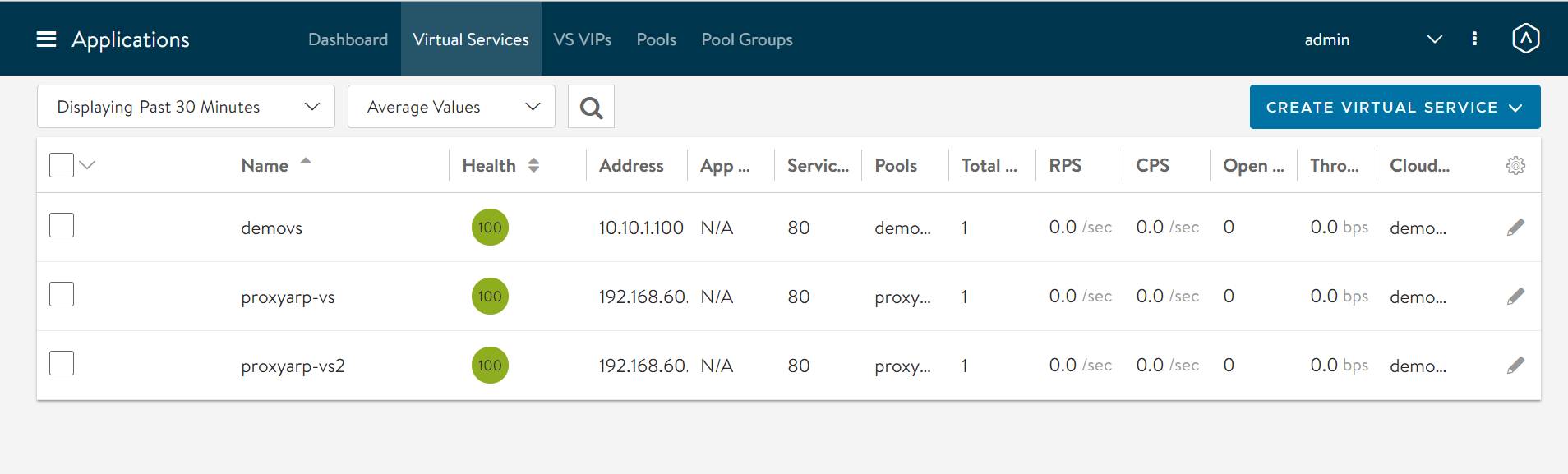

In the image below, two proxy ARP virtual services are created in two different clouds:

- In ProxyVS1, the client and the VIP are in the same segment, and the SE data NIC is on that same segment (seg50a).

- In proxyVS2, the client and the VIP are in the same segment (seg50a), but the SE data NIC is on a different segment (seg51a).

Limitations

The following configuration limitations apply to Avi Vantage release 20.1.1:

- Only one NSX-T cloud is supported per Avi controller cluster

- Only one vCenter can be associated with an NSX-T cloud

- Only overlay type Transport zones are supported

Note: Starting with Avi Vantage release 20.1.3, multiple NSX-T clouds (maximum of 5) are supported, and multiple vCenters can be associated per NSX-T cloud.

Document Revision History

| Date | Change Summary |
|---|---|
| December 22, 2020 | Updated content for proxy ARP for VIP on Tier-1 and Tier-0 (Version 20.1.3) |
| July 30, 2020 | Published the Design Guide for NSX-T Integration with Avi Vantage (Version 20.1.1) |
| May 18, 2020 | Published the Design Guide for NSX-T Integration with Avi Vantage (Tech Preview) |