Controller Cluster IP in GCP

Overview

Avi Vantage can run with a single Avi Controller (single-node deployment) or with a three-node Avi Controller cluster. In a deployment that uses a single Avi Controller, the Avi Controller performs the administrative functions as well as all analytics, data gathering, and processing.

Adding two additional nodes to create a three-node cluster provides node-level redundancy for the Avi Controller and also maximizes the performance for CPU-intensive analytics functions. In this case, one node is the leader and performs the administrative functions. The other two nodes are followers and they perform data collection for analytics, in addition to being on standby as backups for the leader.

This article explains how to configure the cluster virtual IP (VIP) for the Avi Controller in a GCP environment. It applies to both Docker-based and GCP full-access Controller deployments.

Note: Configuring the cluster VIP with an external IP is not supported in Avi Vantage versions prior to 20.1.8.

Prerequisites

- Ensure that the default service account associated with the Avi Controller virtual machines has the permissions needed to configure the Avi Controller cluster IP on their interfaces. Use the cluster_vip_role.yaml file to configure a role with the required permissions.

- Choose a free IP address in the same subnet as the Controller nodes for the cluster IP.

- Set up firewall rules that explicitly allow access, via the cluster IP, to all the Controller ports that Avi Vantage requires.
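
The second prerequisite can be checked before the cluster IP is entered in the UI. The following is a minimal sketch, not part of Avi Vantage: the function name `is_valid_cluster_ip` is hypothetical, and the `/24` subnet in the usage lines is an assumption made for illustration only. The list of addresses already in use must be supplied by the operator. GCP also reserves some addresses in each subnet (such as the gateway address), which this sketch does not check.

```python
import ipaddress


def is_valid_cluster_ip(candidate, subnet_cidr, used_ips):
    """Return True if candidate is inside subnet_cidr, is not the network or
    broadcast address, and does not appear in used_ips.

    Hypothetical helper for illustration; it does not query GCP.
    """
    ip = ipaddress.ip_address(candidate)
    network = ipaddress.ip_network(subnet_cidr)
    in_use = {ipaddress.ip_address(addr) for addr in used_ips}

    if ip not in network:
        return False
    if ip in (network.network_address, network.broadcast_address):
        return False
    return ip not in in_use


# Assumed subnet (10.146.3.0/24); the node address is taken from the example in this article.
print(is_valid_cluster_ip("10.146.3.2", "10.146.3.0/24", ["10.146.3.28"]))
print(is_valid_cluster_ip("10.146.3.28", "10.146.3.0/24", ["10.146.3.28"]))
```

The first call checks a free address in the assumed subnet, and the second checks an address that is already assigned to a Controller node.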

Configuring Controller Cluster IP

To configure the Controller cluster IP,

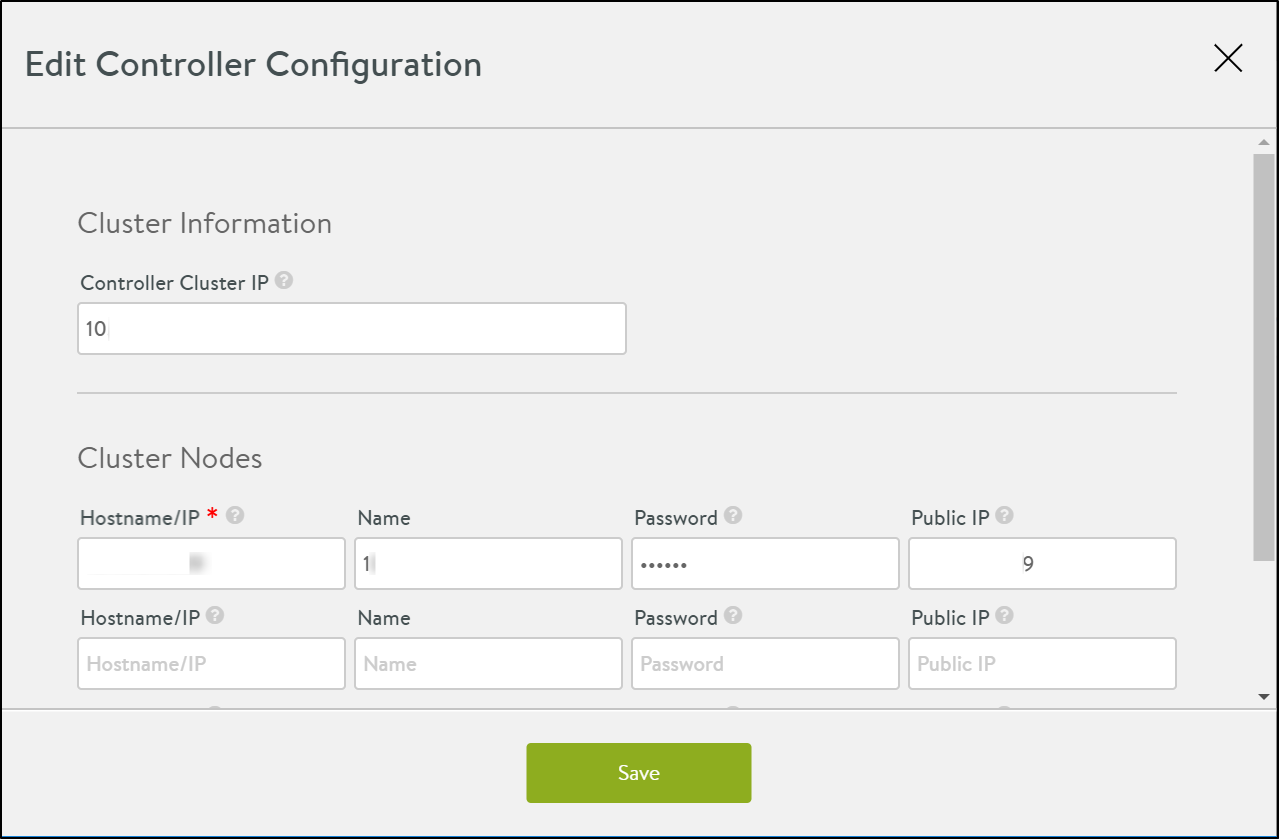

- Navigate to Administration > Controller > Edit.

- Enter the virtual IP address in the field Controller Cluster IP.

- Under Cluster Nodes, enter the Hostname/IP, Name, Password, and the Public IP address or hostname of the Controller VM.

The Edit Controller Configuration screen is as shown below:

Note: To change the Controller cluster IP of an existing two-node or three-node cluster from the cluster IP configuration screen, first delete the existing cluster IP and then add the new one.

The Controller cluster IP is programmed as a sub-interface on the leader node's primary NIC and should be in the same subnet as the Controller nodes. Whenever a failover occurs, this IP is reprogrammed on the new leader's primary NIC.

As shown in the example below, 10.146.3.2 is the Controller cluster IP programmed on a leader in a three-node cluster.

    admin@demo-gcp-fa-cluster-node-1:~$ ip a
    2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1460 qdisc mq state UP group default qlen 1000
        link/ether 42:01:0a:92:03:1c brd ff:ff:ff:ff:ff:ff
        inet 10.146.3.28/32 brd 10.146.3.28 scope global eth0
           valid_lft forever preferred_lft forever
        inet 10.146.3.2/32 scope global eth0:1
           valid_lft forever preferred_lft forever
        inet6 fe80::4001:aff:fe92:31c/64 scope link
           valid_lft forever preferred_lft forever
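
To confirm which node currently holds the cluster IP, for example after a failover, the `ip a` output of each node can be inspected. The following is a rough sketch, assuming the output has been captured as text. The helper names `addresses_on_interface` and `holds_cluster_ip` are hypothetical and not part of any Avi tooling, and `SAMPLE` reproduces the output shown above.

```python
import re

# `ip a` output copied from the example in this article.
SAMPLE = """\
2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1460 qdisc mq state UP group default qlen 1000
    link/ether 42:01:0a:92:03:1c brd ff:ff:ff:ff:ff:ff
    inet 10.146.3.28/32 brd 10.146.3.28 scope global eth0
       valid_lft forever preferred_lft forever
    inet 10.146.3.2/32 scope global eth0:1
       valid_lft forever preferred_lft forever
    inet6 fe80::4001:aff:fe92:31c/64 scope link
       valid_lft forever preferred_lft forever
"""


def addresses_on_interface(ip_output, interface="eth0"):
    """Collect the IPv4 addresses listed under `interface` in `ip a` output."""
    addresses = []
    current = None
    for line in ip_output.splitlines():
        # Interface header lines look like "2: eth0: <...>".
        header = re.match(r"^\d+:\s+([^:@\s]+)", line)
        if header:
            current = header.group(1)
            continue
        # IPv4 lines start with "inet "; "inet6" lines do not match.
        inet = re.match(r"^\s*inet\s+(\d+\.\d+\.\d+\.\d+)/\d+", line)
        if inet and current == interface:
            addresses.append(inet.group(1))
    return addresses


def holds_cluster_ip(ip_output, cluster_ip, interface="eth0"):
    """Return True if cluster_ip is programmed on the given interface."""
    return cluster_ip in addresses_on_interface(ip_output, interface)


print(addresses_on_interface(SAMPLE))
print(holds_cluster_ip(SAMPLE, "10.146.3.2"))
```

Running the same check against each node's output identifies the current leader, because only the leader's primary NIC carries the cluster IP.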

Recommended Reading

- Controller Cluster IP

- FAQ on Controller Cluster

- Changing Avi Controller Cluster Configuration