Avi Controller Design for the Avi Vantage Platform

Logical Design of Avi Controller for the Avi Vantage Platform

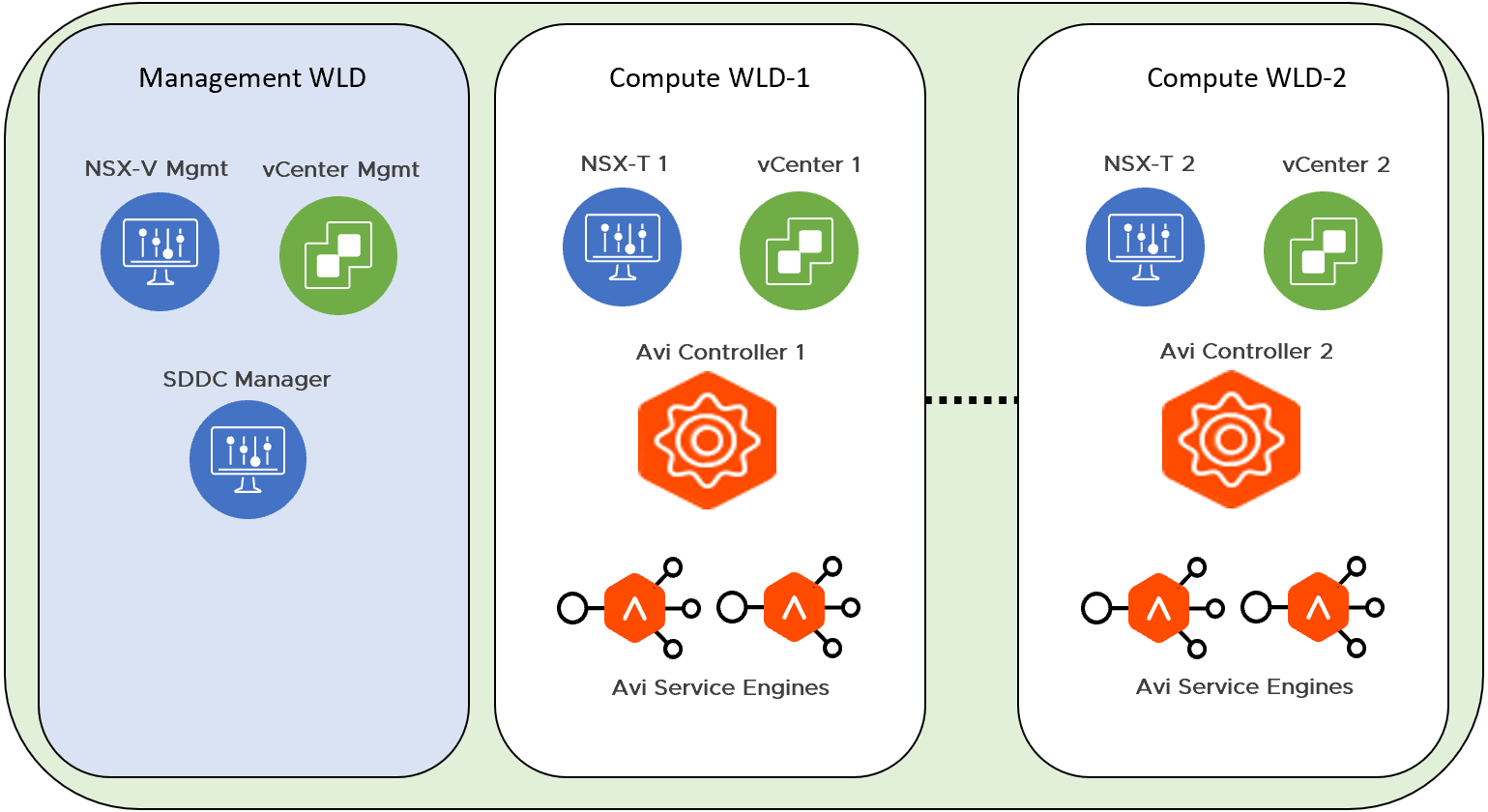

To provide integration with a VMware Cloud Foundation SDDC solution, the Avi Vantage Platform components are mapped to the specific workload domains.

Each VMware Cloud Foundation SDDC instance consists of a minimum of one Management Workload Domain and, optionally, multiple compute (VI) Workload Domains.

The Avi Controllers are VMs that provide the control plane functionality. Three Avi Controller VMs are necessary to form an Avi Controller cluster, which is a requirement to onboard the Avi Vantage Platform. Avi Controller VMs are placed in the Management Domain within VMware Cloud Foundation. Each NSX-T deployment managing VI workload domains requires an independent Avi Controller cluster. The Avi Controller cluster manages the Avi Service Engines deployed in the virtual infrastructure workload domains that the NSX-T instance manages, and those Service Engines serve the workloads that require load balancing.

Multiple Avi Controller clusters might be created within a single VMware Cloud Foundation stack when multiple NSX-T instances are used to manage the VI workload domains. Refer to How to Size Avi Controllers and vSphere Cluster Design for the Avi Vantage Platform for details.

The following diagram provides an overview of a sample VCF deployment. This VCF deployment has two Compute Workload Domains, each managed by a separate NSX-T instance. One Avi Controller Cluster is deployed for each NSX-T instance. Avi Controller VMs will be deployed physically in the Management Workload Domain and will manage Avi Service Engines deployed in the respective Compute Workload Domains.

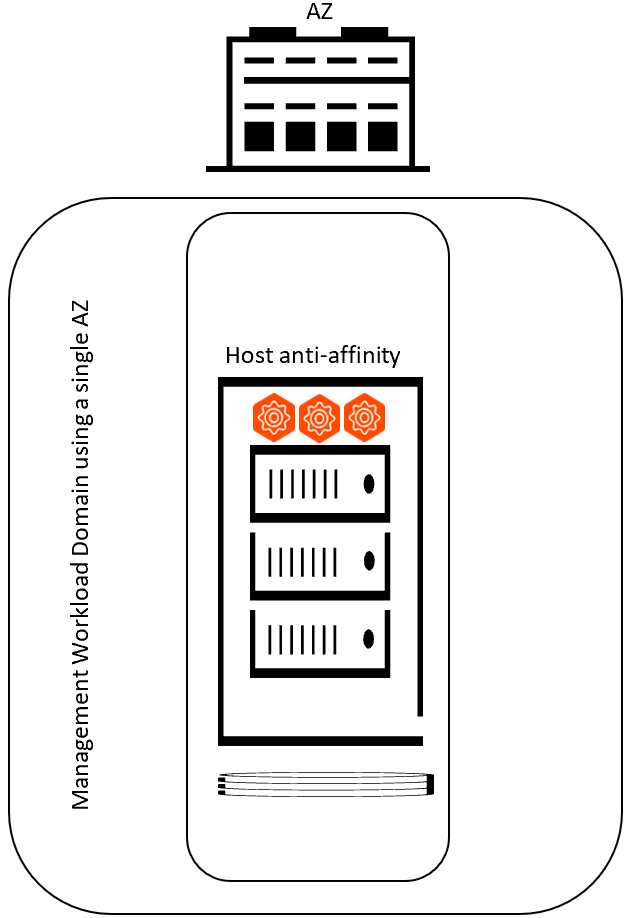

Deploying Avi Controllers in a Single Availability Zone Environment

A Single Availability Zone will have all physical servers that are part of the Workload Domain in a single physical location. Ensure that high availability of the Avi Controller cluster is maintained. Avi Controller cluster requires 2 out of 3 nodes to be up, in order for the control plane to continue regular function.

It is recommended that you deploy each of the 3 nodes of the Avi Controller cluster on different ESXi hosts and configure host anti-affinity rules for the Avi Controller VMs.
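The placement recommendation above can be checked with a few lines of Python. This is a minimal sketch, not part of the Avi or vSphere tooling: the VM and host names are hypothetical, and the VM-to-host mapping is assumed to come from your own inventory export.

```python
from typing import Dict, List


def colocated_controllers(placement: Dict[str, str]) -> Dict[str, List[str]]:
    """Return every host that runs more than one controller VM, with the VM names."""
    by_host: Dict[str, List[str]] = {}
    for vm, host in placement.items():
        by_host.setdefault(host, []).append(vm)
    return {host: sorted(vms) for host, vms in by_host.items() if len(vms) > 1}


# Hypothetical inventory: controller VM name -> ESXi host name.
placement = {
    "avi-ctrl-1": "esxi-01",
    "avi-ctrl-2": "esxi-02",
    "avi-ctrl-3": "esxi-02",
}
violations = colocated_controllers(placement)
```

An empty result means each controller runs on its own host. Any entry in the result names a host whose failure would take down more than one controller node.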

The following diagram shows Avi Controller Cluster deployment in a Single-AZ Cluster:

The following table summarizes the design decisions for deploying Avi Controllers for Avi Vantage Platform in a single availability zone:

| Decision ID | Design Decision | Decision Justification | Design Implication |
|---|---|---|---|
| AVI-VI-VC-005 | Create anti-affinity 'VM/Host' rule that prevents collocation of Avi Controller VMs | vSphere will place the Avi Controller VMs in a manner to ensure maximum HA for the Avi Controller cluster (control plane) at all times | Avi Controller VMs might end up on the same ESXi host |

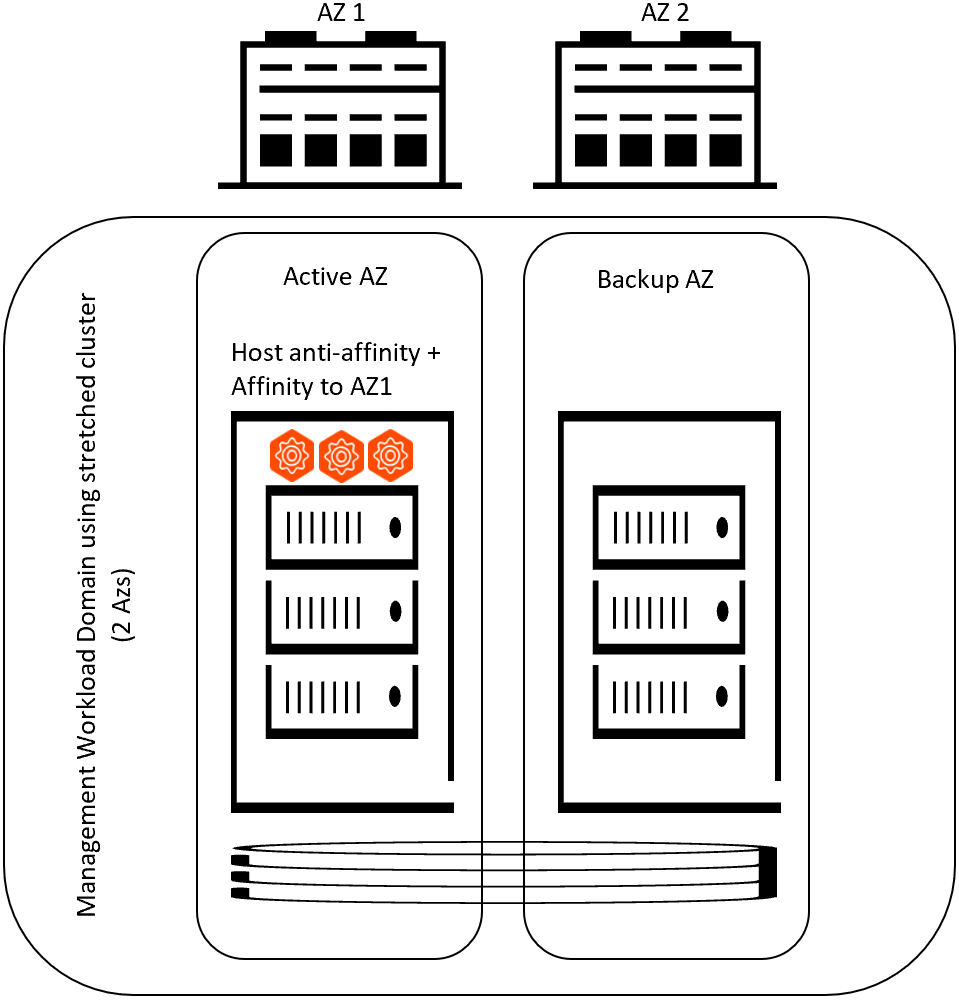

Deploying Avi Controllers in a Multi Availability Zone Environment

A Multi Availability Zone will have servers distributed between two physical locations and will be a part of a single Workload Domain vCenter cluster. Ensure that high availability of the Avi Controller cluster is maintained across the physical locations. Avi Controller cluster requires 2 out of 3 nodes to be up, in order for the control plane to continue regular function.

It is recommended that you deploy all 3 nodes of the Avi Controller cluster on ESXi hosts residing in the primary AZ. vSphere HA will recover the Avi control plane upon a DR event, and DRS will re-balance the Avi Controller placement onto the ESXi hosts in the primary AZ. Avi Controllers should also follow the requirements of a single AZ design.

The following diagram shows Avi Controller Cluster deployment in a Multi-AZ (Stretched) Cluster:

The following table summarizes the design decisions for deploying Avi Controllers for the Avi Vantage Platform in multi availability zone:

| Decision ID | Design Decision | Decision Justification | Design Implication |
|---|---|---|---|
| AVI-VI-VC-006 | Distribute Avi Controllers on separate ESXi hosts in the Management domain | This allows for maximum fault tolerance | Reduced fault tolerance if multiple Avi Controllers are hosted on the same ESXi host |
| AVI-VI-VC-007 | Create anti-affinity 'VM/Host' rule that prevents collocation of Avi Controller VMs | vSphere will place the Avi Controller VMs in a manner that ensures maximum HA for the Avi Controller cluster (control plane) at all times | Avi Controller VMs might end up on the same ESXi host |
| AVI-VI-VC-008 | Create an affinity 'should' rule for Avi Controllers on servers in physical location 1 (AZ1) | This ensures the Avi control plane is deployed in the ‘primary/active’ AZ. A DR event will be handled by vSphere HA, and when the primary AZ recovers, DRS will re-balance the placement of Avi Controllers onto the ‘primary/active’ AZ | Avi control plane might cross active and backup AZs |

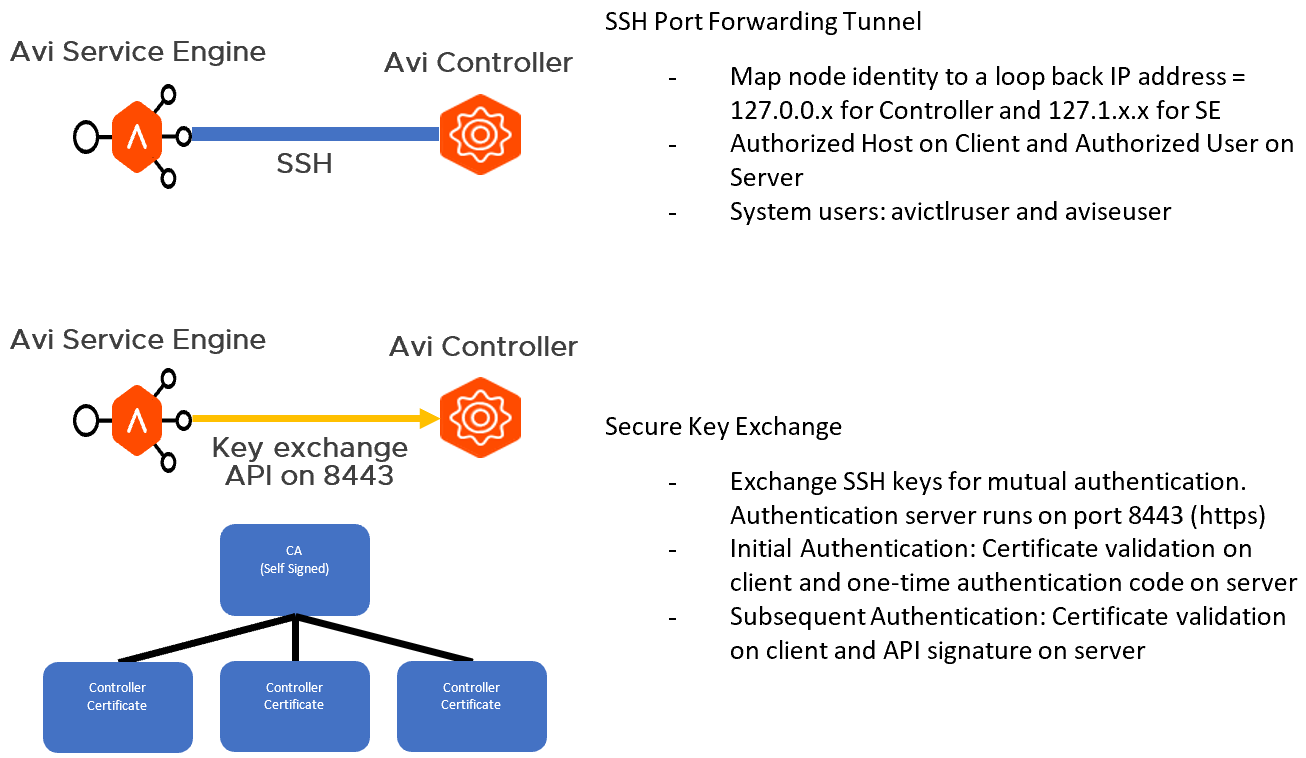

Avi Controller to Avi Service Engine Communication

Avi Controllers continually exchange information securely with Avi Service Engines (SEs). This section explains the process of establishing trust and securing the communication between the Avi Controller and Service Engines.

Points to Consider

- Do not replace or edit the System-Default-Secure-Channel-Cert with a custom certificate. Create a new certificate and update the System configuration to point to it. Replacing the existing certificate will break the secure channel certificate verification and the system will have to be restored.

Establishing a Secure Channel

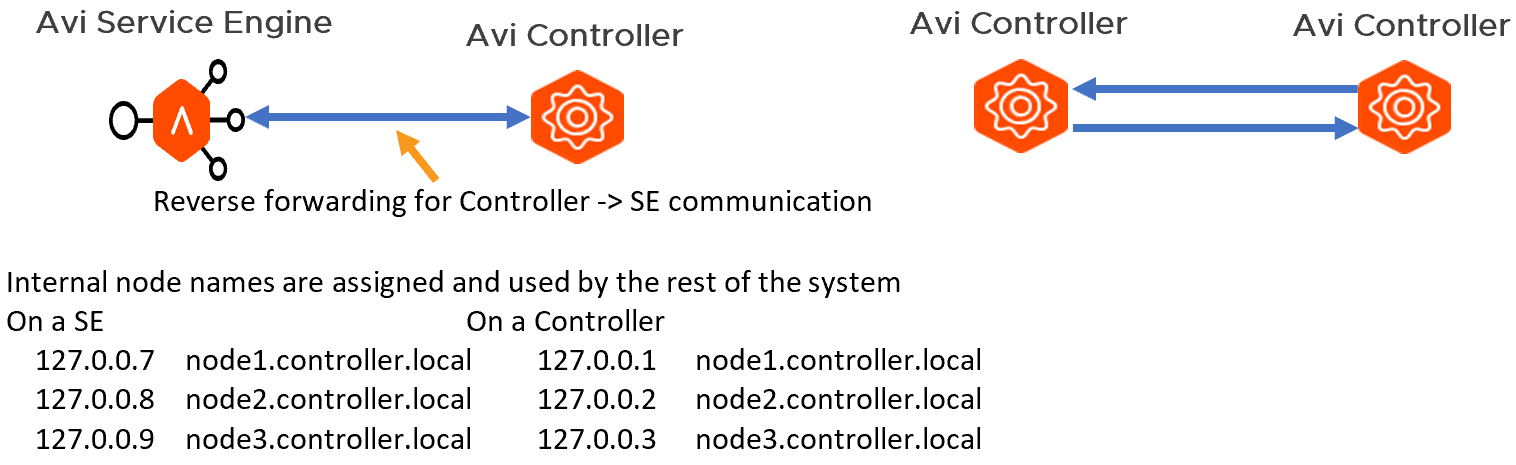

The Avi Controller exposes ports 443 (API server), 22 (SSH), and 8443 (system portal dedicated to secure key exchange). All services running inside the Controller listen on localhost. Any service-to-service communication between Controllers runs over a secure tunnel based on SSH port forwarding. Internal loopback IP addresses and node names are allocated to the Controller(s) and SE(s) for this secure connectivity. SSH forwarding from one Controller to another Controller uses host-key based authentication on the client side, and an internal user, avictrluser, on the server side.

For exchanging the SSH host and the avictrluser keys securely, a securekeyexchange API is exposed on the system portal (8443).

The following diagram shows Avi Vantage platform secure-channel architecture:

The trust model here is based on a certificate hierarchy, where the Controller generates the following set of self-signed certificates:

- Root CA

- Controller certificate (signed by the root CA)

System portal API can be accessed only via HTTPS which presents the Controller certificate.

Authentication

Initial authentication is based on the following:

- Exporting a credential bundle on the leader node, which consists of the CA certificate and a one-time token valid for ten minutes.

- Importing this credential bundle into the follower node that is being added to the existing cluster.

On invoking a secure key exchange API to exchange SSH keys, the authentication involves:

- The client verifying the certificate presented over HTTPS using the CA certificate

- The server verifying usage of the one-time token that was generated

Subsequent authentication involves the server verifying an API signature key exchange using the SSH keys exchanged.

Because the SSH keys are exchanged with mutual authentication and connectivity between the services uses SSH port forwarding, man-in-the-middle attacks are prevented.
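The one-time token used in the initial authentication can be illustrated with a small sketch. It is not the Controller's implementation. It only shows the two properties named above: a ten-minute validity period and single use. The class name, method names, and token format are hypothetical.

```python
import secrets
import time
from typing import Callable, Dict

TOKEN_TTL_SECONDS = 10 * 60  # ten-minute validity, as described in the text


class OneTimeTokenStore:
    """Issues tokens that can be redeemed once, within the validity period."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        self._issued: Dict[str, float] = {}  # token -> time of issue

    def issue(self) -> str:
        token = secrets.token_urlsafe(32)
        self._issued[token] = self._clock()
        return token

    def redeem(self, token: str) -> bool:
        # pop() removes the token, so a second redeem of the same token fails.
        issued_at = self._issued.pop(token, None)
        if issued_at is None:
            return False
        return self._clock() - issued_at <= TOKEN_TTL_SECONDS
```

The clock is injectable so that expiry can be exercised without waiting ten minutes.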

While the default is a self-signed certificate, you can also replace the certificates on port 8443 with certificates issued by your organization's PKI.

Only a limited set of users are allowed via SSH. They are admin and other internal users such as avictlruser, aviseuser and the CLI user. avictlruser and aviseuser only support key-based authentication. This limits the exposure of connectivity to the Controller and SE and minimizes the attack surface.

Deployment Specification of Avi Controller for the Avi Vantage Platform

Deployment Model for Avi Controller for the Avi Vantage Platform

How to Size Avi Controllers

A 3-node Avi Controller deployment is a requirement for optimum operation of the Avi Vantage platform.

Avi Controller sizing guidelines for CPU and memory

The amount of CPU/memory capacity allocated to an Avi Controller is calculated based on the following parameters:

- The number of virtual services to support

- The number of Service Engines to support

- Analytics thresholds

| CPU/Memory Allocation | 8 CPUs / 24 GB | 16 CPUs / 32 GB | 24 CPUs / 48 GB |
|---|---|---|---|
| Virtual Service scale | 0-200 | 200-1000 | 1000-5000 |
| Avi Service Engine (SE) scale | 0-100 | 100-200 | 200-250 |
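The table can be read as a lookup: pick the smallest allocation whose limits cover both the virtual service count and the Service Engine count. The sketch below encodes only the values from the table. Treating the upper bound of each range as inclusive is an assumption, since adjacent ranges share their boundary value, and analytics thresholds are not modeled.

```python
from typing import List, NamedTuple, Optional


class ControllerSize(NamedTuple):
    vcpus: int
    memory_gb: int
    max_virtual_services: int
    max_service_engines: int


# Values copied from the sizing table, smallest allocation first.
SIZES: List[ControllerSize] = [
    ControllerSize(8, 24, 200, 100),
    ControllerSize(16, 32, 1000, 200),
    ControllerSize(24, 48, 5000, 250),
]


def pick_controller_size(virtual_services: int, service_engines: int) -> Optional[ControllerSize]:
    """Return the smallest listed size that covers both counts, or None if none does."""
    for size in SIZES:
        if (virtual_services <= size.max_virtual_services
                and service_engines <= size.max_service_engines):
            return size
    return None
```

For example, 150 virtual services on 150 Service Engines selects the 16 CPU / 32 GB allocation, because the Service Engine count exceeds the limit of the smallest size.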

Avi Controller Sizing Guidelines for Disk

The amount of disk capacity allocated to an Avi Controller is calculated based on the following parameters:

- The amount of disk capacity required by analytics components

- The number of virtual services to support

| Disk Allocation based on virtual service count | Log analytics without full logs | Log analytics with full logs | Metrics | Base Processes | Total (without full logs) |
|---|---|---|---|---|---|
| 100 VS | 16 GB | 128 GB | 16 GB | 48 GB | 80 GB |
| 1,000 VS (100k transactions / year) | 128 GB | 1 TB | 32 GB | 56 GB | 216 GB |
| 5,000 VS | 512 GB | Not supported | 160 GB | 64 GB | 736 GB |
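The Total column is the sum of log analytics without full logs, metrics, and base processes. The following sketch reproduces that arithmetic from the table values. The dictionary layout and function name are illustrative only.

```python
from typing import Dict

# Disk figures in GB from the table, keyed by virtual service count.
DISK_GB: Dict[int, Dict[str, int]] = {
    100: {"log_analytics": 16, "metrics": 16, "base": 48},
    1000: {"log_analytics": 128, "metrics": 32, "base": 56},
    5000: {"log_analytics": 512, "metrics": 160, "base": 64},
}


def total_disk_gb(vs_count: int) -> int:
    """Sum the three components for one table row (without full logs)."""
    row = DISK_GB[vs_count]
    return row["log_analytics"] + row["metrics"] + row["base"]
```

For 1,000 virtual services this gives 128 + 32 + 56 = 216 GB, which matches the table.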

The following table summarizes the design decisions for sizing the Avi Controller for the Avi Vantage Platform:

| Decision ID | Design Decision | Decision Justification | Design Implication |
|---|---|---|---|
| AVI-CTLR-003 | Deploy one Avi Controller cluster for each NSX-T domain for configuring and managing advanced load balancing services. | Required to form a highly available Avi Controller cluster. Avi Vantage Platform LCM will in future be handled by the respective NSX-T instance. | Control plane will not have any HA capability. LCM complexities may arise in future. |
| AVI-CTLR-004 | Deploy each node in the Avi Controller cluster with a minimum of 8 vCPUs, 24 GB memory, and 216 GB of disk space | Supports up to 200 virtual services. Supports up to 100 Avi Service Engines. Can scale up by expanding Avi Controller sizes at any time. | Undersizing Avi Controllers would lead to unstable control plane functionality |

Creating Avi Controller Cluster for Control Plane High Availability

Avi Vantage platform requires a 3-node Avi Controller cluster deployment for control plane high availability. Among the 3 nodes, a leader node is elected, which orchestrates the startup/shutdown process across all the active members of the cluster. Configuration and metrics databases are configured to be active on the leader node and placed in standby mode on the follower nodes. Streaming replication is configured to synchronize the active database to the standby databases. Analytics functionality is shared among all the nodes of the cluster. A given virtual service streams the logs and metrics to one of the nodes of the cluster.

Failover Scenarios

- When the leader node goes down: One of the follower nodes is promoted as a leader. This triggers a warm restart of the processes among the remaining nodes in the Avi Controller cluster. The warm restart is required to promote the configuration and metrics databases from standby to active on the new leader.

  Note: During warm restart, the Avi Controller REST API is unavailable for a period of 2-3 minutes.

- When one of the follower nodes goes down: The follower node is removed from the active member list and the work that was performed on this node is redistributed among the remaining nodes in the Avi Controller cluster.

- When two nodes go down: The entire Avi Controller cluster becomes non-operational until at least one of the nodes is brought up. A quorum (2 out of 3) of the nodes must be up for the Avi Controller cluster to be operational.

- How the data path is impacted when the Avi Controller cluster is down: During the time the Avi Controller cluster is non-operational, the Avi Service Engines continue to forward traffic for the configured virtual services (referred to as headless mode). During this time, SEs attempt to buffer client logs, provided sufficient disk space is available. When the Avi Controller cluster is once again operational, the buffered client logs become available for retrieval. Metric data points are not buffered on the SE during headless operation; however, incremental metrics such as “bytes sent” continue to be accounted on the SE so that aggregate information remains correct once metric collection by the Controller resumes. Data plane traffic continues to flow normally throughout this time.
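The failover scenarios above reduce to a majority rule over the three nodes. The following sketch models only that rule and the outcomes listed above. It does not reproduce the Controller's election logic, and the function names and status strings are hypothetical.

```python
def has_quorum(nodes_up: int, cluster_size: int = 3) -> bool:
    """True when a strict majority of the controller nodes is up."""
    return nodes_up > cluster_size // 2


def cluster_state(leader_up: bool, followers_up: int) -> str:
    """Describe a 3-node cluster given the leader status and the number of followers up (0-2)."""
    nodes_up = int(leader_up) + followers_up
    if not has_quorum(nodes_up):
        return "non-operational; Service Engines keep forwarding traffic in headless mode"
    if not leader_up:
        return "operational after warm restart; a follower is promoted to leader"
    if followers_up < 2:
        return "operational; work is redistributed among the remaining nodes"
    return "operational"
```

With two of three nodes up, the control plane stays available. With one node up, only the data plane continues.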

The following table summarizes the design decisions for deploying an Avi Controller cluster:

| Decision ID | Design Decision | Decision Justification | Design Implication |
|---|---|---|---|
| AVI-VI-VC-007 | Reserve CPU and Memory | This will give Avi Controllers guaranteed resources to run on. This is a recommended setting. | Avi Controllers might be starved from process scheduling leading to the control plane going down. |
| AVI-VI-VC-008 | Do not over provision vCPUs | This will allow Avi Controllers dedicated vCPUs to schedule workloads. This setting is not required if the CPU has been reserved. | Avi Controllers might be starved from process scheduling leading to the control plane going down. |
| AVI-VI-VC-009 | Apply VM-VM anti-affinity rules in vSphere Distributed Resource Scheduler (vSphere DRS) to the Avi Controller VMs. | This will ensure host level fault tolerance. | Control plane might go down if an ESXi host that hosts multiple Avi Controller VMs goes down. |
| AVI-CTLR-005 | Initial setup should be done only on one Avi Controller VM out of the three deployed to create an Avi Controller Cluster. | Avi Controller Cluster is created from an initialized Avi Controller which becomes the cluster leader. Follower Avi Controller nodes need to be uninitialized in order to join the cluster. | Avi Controller Cluster creation will fail if more than one Avi Controller is initialized. |
| AVI-VI-VC-010 | In vSphere HA, set the restart priority policy for each Avi Controller VM to high. | Avi Controller implements the control plane for virtual network services. vSphere HA restarts the Avi Controller VMs first so that other virtual machines that are being powered on or migrated by using vSphere vMotion while the control plane is offline lose connectivity only until the control plane quorum is re-established. | If the restart priority for another management appliance is set to highest, the connectivity delays for management appliances will be longer. |
| AVI-VI-VC-011 | When using two availability zones, create a virtual machine group for the Avi Controller VMs. | Ensures that the Avi Controller VMs can be managed as a group. | You must add virtual machines to the allocated groups manually. |
| AVI-VI-VC-012 | When using two availability zones, create a should-run VM-Host affinity rule to run all 3 nodes of Avi Controller Cluster on the group of hosts in Availability Zone 1 (Primary). | Ensures that all Avi Controller VMs are located in the primary Availability Zone. | Avi Controller VM placement will be non-deterministic. |

Tenants in the Avi Vantage Platform

A tenant manages an isolated group of resources in Avi Vantage. Avi Vantage platform supports the following forms of tenancy:

- Control plane isolation only — Policies and application configuration are isolated across tenants. Applications are provisioned on a common set of Avi Service Engine entities.

- Control + Data plane isolation — Policies and applications configuration are isolated across tenants. Applications are provisioned on isolated set of Avi Service Engine entities. This enables full tenancy.

Each user account on Avi Vantage is associated with one or more tenants. The tenant associated with a user account defines the resources that the user can access within Avi Vantage. When a user logs in, Avi Vantage restricts their access to only those resources that are in the same tenant.

It is recommended to create a non-VCF tenant to onboard applications outside of VCF for load-balancing. In addition, every workload domain by default will be scoped to a tenant on Avi.

You can also choose to map users with defined roles to the created tenants.

The following table summarizes design decisions for creating a tenant on the Avi Vantage Platform:

| Decision ID | Design Decision | Decision Justification | Design Implication |
|---|---|---|---|
| AVI-CTLR-006 | Create a non-VCF tenant | This allows onboarding of load-balanced applications that are outside the scope of VCF, for instance, any physical infrastructure components that need load balancing | Tenancy can be set up on the Avi Controller at any point in time. Creating a non-VCF tenant on day 0 can make onboarding non-VCF workloads seamless |

Life Cycle Management for Avi Controller for the Avi Vantage Platform

The Avi Vantage upgrade process allows updates or patches to be applied to the entire Avi Vantage platform at one time, or to specific components only. Because a select set of components can be upgraded, patches can be applied to the components that need them without impacting the others.

The two update types available are:

- Upgrade — New software and maintenance releases are delivered through upgrade packages

- Patch — Hot-fixes delivered through patch packages

Updates to the Avi Vantage platform are possible for the following scenarios:

- System wide upgrade or patch: Avi Controllers and all Avi Service Engines across all SE groups will be upgraded/patched.

- Per SE group basis upgrade or patch: Only a set of SE groups, thereby only the Avi Service Engines contained within, will be upgraded/patched. This is especially useful for multi-tenant deployments.

- UI only patch: Deliver UI hot-fixes through a patch.

- Controller only upgrade or patch: Only the Avi Controllers will be upgraded or patched.

Notes:

- An upgrade image and a patch can be applied simultaneously to make the upgrade seamless.

- Rollback to the previous versions of Avi Vantage is supported for both upgrades and patches.

Benefits of the Flexible Upgrade Scheme

- Supports scenarios where it is not possible to upgrade all SE groups to the newer version at the same time due to restrictions such as logistics, untested reliability on the new software, etc.

- Using SE groups for data plane separation. Based upon the SE group segmentation, the upgrade is performed based upon the following attributes:

  - Tenant

  - Application or product offering

  - Production, pre-production and development environments

  - Cloud or environment (AWS, VMware, etc.)

- Patches to only applications or SE groups that need them

- Flexible scheduling

- Self-service upgrades

The following table summarizes the design decisions for the lifecycle management of the Avi Vantage Platform:

| Decision ID | Design Decision | Decision Justification | Design Implication |
|---|---|---|---|
| AVI-CTLR-007 | Use the Avi Controller to perform the life cycle management of the Avi Vantage platform | Because the deployment scope covers all components of the Avi Vantage platform, the Avi Controller performs patching and upgrade of components as a single process | The operations team must understand and be aware of the impact of patch and upgrade operations performed by using the Avi Controller |

Alerting and Events Design for Avi Controller for the Avi Vantage Platform

The following table summarizes the design decisions for setting up alert destinations for Avi Controller cluster:

| Decision ID | Design Decision | Decision Justification | Design Implication |
|---|---|---|---|
| AVI-CTLR-008 | Specify external system(s) to which the Avi Controller sends events by email, SNMP, or syslog | Useful for organizations to centrally monitor the Avi Controller cluster | For operations teams to be able to centrally monitor Avi Vantage and escalate alerts, events must be sent from the Avi Controller |

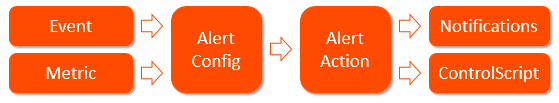

In its most generic form, alerts are a construct intended to inform administrators of significant events within Avi Vantage. In addition to triggering based on system events, alerts may also be triggered based upon one or more of the 500+ metrics Avi Vantage tracks. Once an alert is triggered, it may be used to notify an administrator through one or more of the notification actions. Alerts may also trigger ControlScripts, which allow custom scripted responses.

Alert Workflow

Configuring custom alerts requires following the workflow to build the alert config (the alert’s trigger) based on one or more of 500+ events and metrics. When the alert configuration’s conditions are met, Avi Vantage processes an alert by referring to the associated alert action, which lists the notifications to be generated and potentially calls for further action, such as application autoscaling or execution of a ControlScript. The sections below provide a brief description of each component that plays a role in alerts.

Events

Events are predefined objects within Avi Vantage that may be triggered in various ways. For instance, an event is generated when a user account is created, when a user logs in, or when a user fails the login password check. Similar events are generated for server down, health score change, etc.

Metrics

Metrics are raw data points, which may be used for generating an alert. A metric may be something simple, such as the point in time a client sends a SYN to connect to a virtual service. On its own, a single data point does not raise an alert, but an alert may be triggered if the client sends more than 500 SYNs within a 5-minute window. In this example, an alert is triggered if the metric occurs more than X times within time frame Y.
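The "more than X times within time frame Y" condition can be sketched as a sliding-window counter. This is an illustration of the idea only, not how Avi Vantage evaluates metrics internally, and the class and parameter names are hypothetical.

```python
from collections import deque
from typing import Deque


class WindowedThreshold:
    """Reports when more than `threshold` events fall inside a sliding time window."""

    def __init__(self, threshold: int, window_seconds: float) -> None:
        self._threshold = threshold
        self._window = window_seconds
        self._events: Deque[float] = deque()

    def record(self, timestamp: float) -> bool:
        """Record one event; return True if the count in the window now exceeds the threshold."""
        self._events.append(timestamp)
        cutoff = timestamp - self._window
        # Drop events that are at or before the start of the window.
        while self._events and self._events[0] <= cutoff:
            self._events.popleft()
        return len(self._events) > self._threshold


# The example from the text: more than 500 SYNs within 5 minutes.
syn_alert = WindowedThreshold(threshold=500, window_seconds=5 * 60)
```

Each call to `record` represents one SYN observed at the given timestamp in seconds. Timestamps are assumed to arrive in non-decreasing order.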

Alert Config

The Alert Configs are used to define the triggers that will generate an alert. Avi Vantage comes with a number of canned alert configs, which are used to generate alerts in a default Avi Vantage deployment. These alert configs may be modified, but not deleted.

To create a new alert config, provide the basics, such as the config name and the trigger condition, which will be an event or a metric. Then choose an alert action to be executed when the alert config conditions are met.

Alert Action

The Alert Actions are called by an alert config when an alert has been triggered. This reusable object defines the result for the triggering alert. Alert actions may be used to define the alert level, and to notify administrators via the Avi admin console (default), email, syslog or SNMP traps. The alert action may trigger a ControlScript or trigger an autoscale policy to scale the application in or out, and at what level (SEs in the SE group, members of a server pool).

Notifications

Alerts can be sent to four notification destinations. The first is the admin console. These notifications show up as colored bell icons indicating that an alert has occurred. The other three notifications, email, syslog, and SNMP v2c traps can all be configured as notification destinations.

ControlScript

ControlScripts are Python-based scripts, executed from the Avi Controller. They may be used to alter the Avi Vantage configuration or communicate to remote systems (such as email, syslog, and/or SNMP servers). For instance, if an IP address is detected to be sending a SYN flood attack, the alert action could notify administrators by email and also invoke a ControlScript to add the offending client to a blacklist IP group attached to a network security policy that is rate shaping or blocking attackers.

Monitoring and Alerting Design for Avi Controller for the Avi Vantage Platform

Avi Controllers can send system and user defined alerts via one or more of the following mechanisms:

- Syslog

- SNMP

- ControlScript

The following table summarizes the design decisions for monitoring and alerting of the Avi Vantage platform:

| Decision ID | Design Decision | Decision Justification | Design Implication |
|---|---|---|---|
| AVI-CTLR-009 | Choose one or more types of notification for monitoring/alerting | Avi supports Syslog, Email, SNMP, ControlScripts to enable a wide array of monitoring/alerting. Utilizing this efficiently will ensure good health of the Avi Controller cluster | Health degradation of the Avi Controller cluster might go unnoticed |

Syslog Notifications

Syslog messages may be sent to one or more syslog servers. Communication is non-encrypted via UDP, using a customizable port. According to RFC 5426, syslog receivers must support accepting syslog datagrams on the well-known port 514 (Avi Vantage’s default), but can be configured to listen on a different port. The alert action determines which log levels (high, medium, low) should be sent. Avi Vantage uses this process internally for receiving logs.

Notes:

- In an Avi Controller cluster, only the leader node sends the syslog notifications.

- The IP address of the syslog traffic will be the interface IP of the Avi Controller cluster leader node.

- Notifications are sent in clear text format.
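When validating that notifications reach a receiver, a small UDP listener can help. The sketch below is a generic test receiver, not an Avi component. It decodes the standard syslog PRI field, where the value is facility multiplied by 8 plus severity. The listening port 5514 is an arbitrary unprivileged choice for testing, and the message layout after the PRI field is not assumed.

```python
import re
import socket
from typing import Tuple

_PRI = re.compile(rb"^<(\d{1,3})>")


def parse_pri(datagram: bytes) -> Tuple[int, int]:
    """Return (facility, severity) decoded from the leading <PRI> field."""
    match = _PRI.match(datagram)
    if match is None:
        raise ValueError("datagram does not start with a syslog PRI field")
    facility, severity = divmod(int(match.group(1)), 8)
    return facility, severity


def receive_one(host: str = "0.0.0.0", port: int = 5514) -> Tuple[bytes, str]:
    """Block until one UDP datagram arrives; return its payload and the sender IP."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.bind((host, port))
        payload, (sender_ip, _sender_port) = sock.recvfrom(65535)
        return payload, sender_ip
```

Per the notes above, the sender IP returned by `receive_one` should be the interface IP of the cluster leader node.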

Email Notifications

Alert Actions may be configured to send alerts to administrators via email. These emails can be sent directly to administrators or to reporting systems that accept email. Either option requires the Avi Controller to have a valid DNS server and default gateway configured so that it can resolve the destination and properly forward the messages.

SNMP Trap

Alerts may be sent via SNMP Traps using SNMP v2 or v3.

- Edit — Opens the Create/Edit SNMP Trap popup.

- Delete — Remove the selected SNMP Trap server.

ControlScript

ControlScripts can be used to send alerts to a third-party solution such as PagerDuty or Slack. They can be implemented in a scripting language such as Bash or Python. The script is stored and executed on the Avi Controller.
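As an illustration, the following sketch turns alert details into a chat message and posts it to an incoming webhook. Several things here are assumptions rather than documented behavior: that the alert details are available to the script as a JSON string, the `name` and `level` field names, and the webhook URL, which you would supply. The payload uses the plain `text` field accepted by Slack incoming webhooks.

```python
import json
import urllib.request
from typing import Dict


def build_message(alert_json: str) -> Dict[str, str]:
    """Build a webhook payload from alert details (field names are hypothetical)."""
    alert = json.loads(alert_json)
    name = alert.get("name", "unknown alert")
    level = alert.get("level", "unknown level")
    return {"text": f"Avi alert: {name} ({level})"}


def post_message(webhook_url: str, message: Dict[str, str]) -> int:
    """POST the payload as JSON and return the HTTP status code."""
    request = urllib.request.Request(
        webhook_url,
        data=json.dumps(message).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status
```

Keeping message construction separate from the network call makes the formatting testable without reaching the external service.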

Data Protection and Back Up for Avi Controller for the Avi Vantage Platform

The following table summarizes the design decisions for data protection and backup for Avi Controller:

| Decision ID | Design Decision | Decision Justification | Design Implication |
|---|---|---|---|
| AVI-CTLR-010 | External host for Avi to SCP configuration backups | The Avi Controller will SCP the configuration to a remote server. It is a best practice to have copies of the configuration in case the Avi cluster needs to be recreated or recovered. | Remote host storage management |
| AVI-CTLR-011 | Backup configuration frequency | Environments with a large amount of configuration changes could require backups every hour as opposed to potentially every day. | Configuration state of the cluster might be lost. |

Backup and Restore Avi Vantage Configuration

Periodic backup of the Avi Controller configuration database is recommended. This database defines all clouds, all virtual services, all users, and so on. Any user capable of logging into the admin tenant is authorized to perform a backup of the entire configuration, i.e., of all tenants. A restore operation spans all the same entities, but can only be performed by the administrator(s) capable of logging into one of the Avi Controllers using SSH or SCP.

It is a best practice to store backups in a safe, external location, in the unlikely event that a disaster destroys the entire Avi Controller (or cluster) with no possibility of remediation. Depending on how often the configuration changes, a recommended backup schedule could be daily or even hourly.

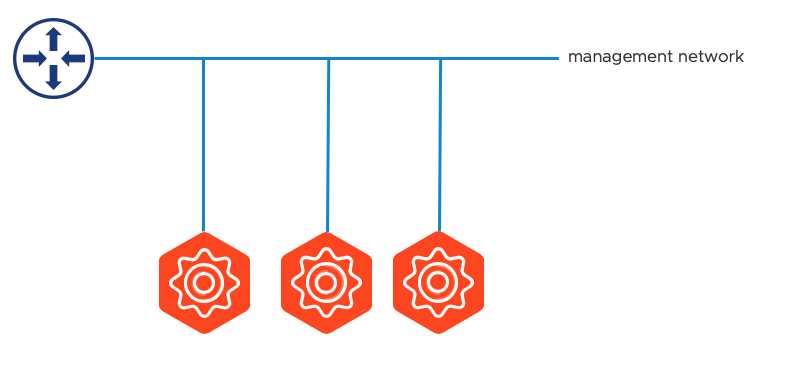

Networking Design of Avi Controller for the Avi Vantage Platform

The Avi Controllers require an interface to be used for both admin access as well as for connectivity to/from Avi Service Engines and potential cloud integrations. If a floating cluster VIP is desired, all three Avi Controllers for the cluster must be within the same management network.

For additional information on port and protocol requirements, see Networking Design for the Avi Vantage Platform.

The following table summarizes the design decisions for the networking design of Avi Controller:

| Decision ID | Design Decision | Decision Justification | Design Implication |
|---|---|---|---|
| AVI-VI-002 | Configure DNS A records for the three Avi Controllers and cluster VIP | The Avi Controllers are accessible by an easy-to-remember fully qualified domain name as well as directly by an IP address | DNS records for the Avi Controllers must be created |
| AVI-VI-003 | Configure time synchronization by using an internal NTP time source for the Avi Controller | | |

The following diagram shows Avi Controllers connected to the management network:

Information Security and Access Design of Avi Controller for the Avi Vantage Platform

Identity Management Design of Avi Controller for the Avi Vantage Platform

The Avi Controller offers multiple options for integrating the management console into enterprise environments for authentication management, such as LDAP and SSO.

Avi Controller clusters are set up with a local admin account, and SAML SSO integration can optionally be provided outside of VCF.

Service Accounts Design of Avi Controller for the Avi Vantage Platform

Each Avi Vantage user account is associated with a role. The role defines the type of access the user has to each area of the Avi Vantage system.

Roles provide granular Role-Based Access Control (RBAC) within Avi Vantage.

The role, in combination with the tenant (optional), comprises the authorization settings for an Avi Vantage user.

Access Types

Role permissions can be one of the following:

- Write: User has full access to create, read, modify, and delete items. For instance, the user may be able to create a virtual service, modify its properties, view its health and metrics, and later delete that virtual service.

- Read: User may only read the existing configuration of the item. For instance, the user may see how a virtual service is configured while being unable to view the current metrics, modify, or delete that virtual service.

- No Access: User can neither see nor modify this section of Avi Vantage. For instance, the user will be prohibited from creating, modifying, deleting, or even viewing (reading) any virtual services at all.

Pre-defined Avi Vantage User Roles

Avi Vantage comes with the following pre-defined roles:

- Application-Admin: User has write access to the Application and Profiles sections of Avi Vantage, read access to the Infrastructure settings, and no access to the Account or System sections.

- Application-Operator: User has read access to the Application and Profiles sections of Avi Vantage, and no access to the Infrastructure, Account, and System sections.

- Security-Admin: User has read/write access only to the Templates > Security section.

- System-Admin: User has write access to all sections of Avi Vantage.

- Tenant-Admin: User has write access to all sections of Avi Vantage except the System section, to which the user has no access.

- WAF-Admin: User has write access to WAF Profiles and Policies, read access to application VSs, pools and pool groups, read access to clouds, and no access to the rest.

Each user must be associated with at least one role. The role can be either predefined or custom.

If multi-tenancy is configured, a user can be assigned to more than one tenant, and can have a separate role for each tenant.
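The combination of role, access type, and tenant can be modeled as a small lookup. The sketch below is a simplified model for reasoning about the descriptions above, not the Avi Vantage permission schema. It covers four of the predefined roles, uses only the five section names mentioned in their descriptions, and the tenant names are hypothetical.

```python
from enum import IntEnum
from typing import Dict


class Access(IntEnum):
    NO_ACCESS = 0
    READ = 1
    WRITE = 2


SECTIONS = ("Application", "Profiles", "Infrastructure", "Account", "System")

# Sections not listed for a role default to NO_ACCESS.
ROLES: Dict[str, Dict[str, Access]] = {
    "Application-Admin": {
        "Application": Access.WRITE,
        "Profiles": Access.WRITE,
        "Infrastructure": Access.READ,
    },
    "Application-Operator": {"Application": Access.READ, "Profiles": Access.READ},
    "Tenant-Admin": {s: Access.WRITE for s in SECTIONS if s != "System"},
    "System-Admin": {s: Access.WRITE for s in SECTIONS},
}


def is_allowed(role_by_tenant: Dict[str, str], tenant: str, section: str, needed: Access) -> bool:
    """Check whether the user's role in the given tenant grants at least `needed` access."""
    role = role_by_tenant.get(tenant)
    if role is None:
        return False  # the user is not associated with this tenant
    return ROLES[role].get(section, Access.NO_ACCESS) >= needed


# A user with a different role in each of two hypothetical tenants.
user = {"tenant-a": "Application-Admin", "tenant-b": "Application-Operator"}
```

Here the same user can modify virtual services in `tenant-a` but only read them in `tenant-b`, and has no access in any other tenant.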

| Decision ID | Design Decision | Decision Justification | Design Implication |
|---|---|---|---|
| AVI-CTLR-012 | Create custom roles only if required | The predefined roles on Avi Controllers can be used for most cases | A role might need to be created if a user account needs specific permissions that are not available out of the box on the Avi Controllers |

Password Management Design of Avi Controller for the Avi Vantage Platform

Passwords on the Avi Vantage platform are managed by the Avi Controller cluster. Avi Service Engines inherit the required user accounts and passwords from the Avi Controllers.

The initial default password of the admin user of the Avi Controller is a strong password. This password is available in the Avi Networks portal where release images are uploaded, accessible only to customers having an account on the portal. Additionally, SSH access to the Controller with this default password is not allowed until the user changes the default password of the admin user. Once the password is changed, SSH access to the admin user will be permitted. The primary reason for this change is to mitigate the risk of having a newly created Controller being attacked via SSH. The admin password can be changed at the Welcome screen in Avi UI, Avi REST API or via a remote CLI shell.

The default deployment of Avi Vantage creates an admin account for access to the system. This initial account has strong password enforcement enabled by default. Additional user accounts can be created, either local username/password, or remote accounts, which are tied into an external auth system such as LDAP.

The following table summarizes the design decisions for the password management design of Avi Controller:

| Decision ID | Design Decision | Decision Justification | Design Implication |
|---|---|---|---|
| AVI-CTLR-013 | Create a strong password for the Avi Vantage platform | This reduces the risk of the account being compromised. This is a requirement to set up user accounts, including the admin account. | Avi Controllers might be compromised if the user account passwords are compromised |
| AVI-CTLR-014 | Rotate passwords at least every 3 months | This will ensure security of the user accounts | Avi Controllers might be compromised if the user account passwords are compromised |

Certificate Management Design of Avi Controller for the Avi Vantage Platform

Avi Controllers ship with system default certificates, which are used for the following:

- Portal (UI): This certificate is presented by the Avi Controller for user sessions over WebUI or the REST API.

- Secure channel: This certificate is used by the Controllers and Service Engines to establish a secure channel.

You can optionally choose to use your own certificate.

The following table summarizes the design decisions for the Certificate Management Design of Avi Controller:

| Decision ID | Design Decision | Decision Justification | Design Implication |
|---|---|---|---|
| AVI-CTLR-015 | Create an SSL certificate from a trusted Certificate Authority to be used for the Portal. (This is optional) | TLS encryption is used to protect traffic to/from the Avi Admin Console. By default, a self-signed certificate is used, which raises security warnings in browsers | SSL certificate needs to be created, and renewed when it expires |
| AVI-CTLR-016 | Create an SSL certificate from a trusted Certificate Authority to be used for the secure channel between the Avi Controller and Avi Service Engines. (This is optional) | Customers can choose to do this for compliance reasons | SSL certificate needs to be created, and renewed when it expires |