Service Discovery Definition

Service discovery is the process of automatically detecting devices and services on a network. A service discovery protocol (SDP) is a network protocol that accomplishes this by identifying the resources available on the network. Traditionally, service discovery has reduced configuration effort for users by presenting them with compatible resources, such as a Bluetooth-enabled printer or server.

More recently, the concept has been extended to network and distributed container resources, which are treated as ‘services’ to be discovered and accessed.

What is Service Discovery?

Service discovery locates services on a network automatically, eliminating the need for a lengthy manual configuration process. It works by having devices communicate through a common protocol on the network, which allows devices or services to find and connect to each other without manual intervention (e.g., Kubernetes service discovery, AWS service discovery).

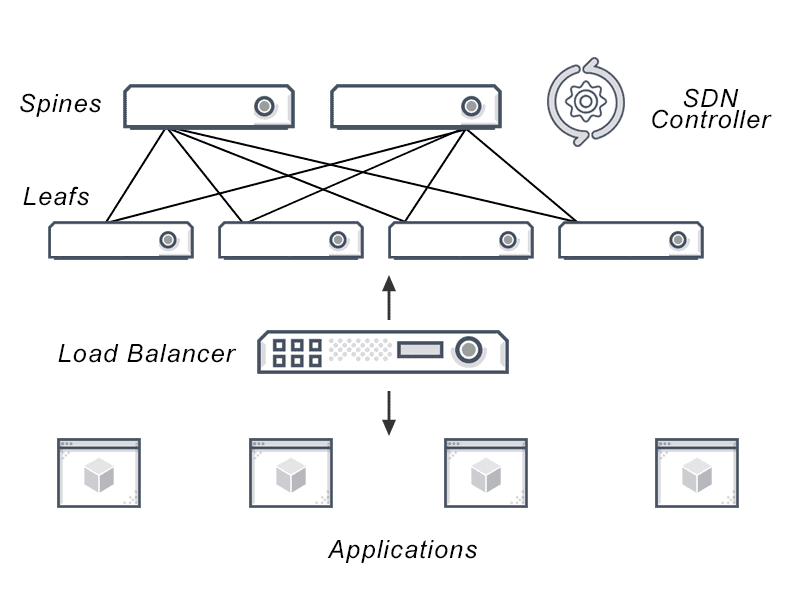

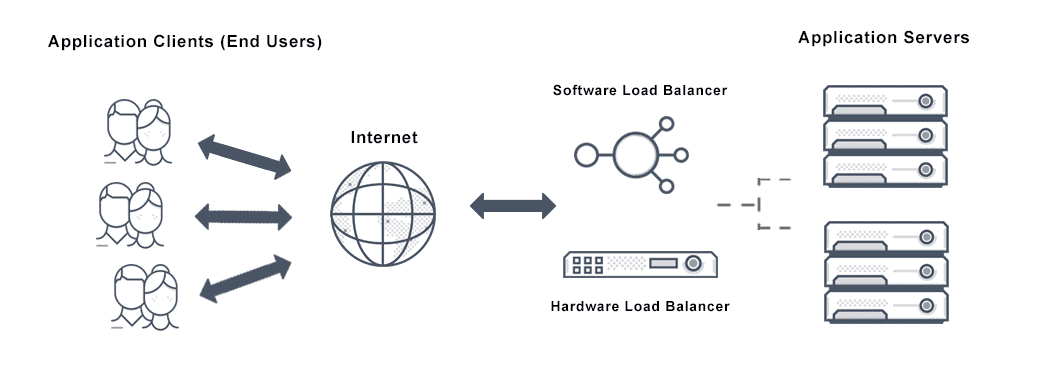

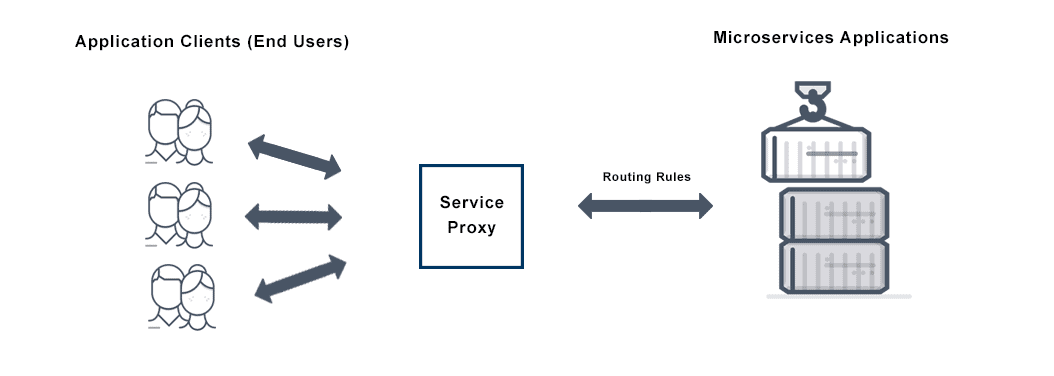

There are two types of service discovery: Server-side and Client-side. Server-side service discovery lets client applications find services through a router or a load balancer. Client-side service discovery lets client applications find services by querying a service registry, which holds the service instances and their endpoints.
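The difference between the two types comes down to who performs the registry lookup. The sketch below is a minimal, single-process illustration under stated assumptions: the registry is a plain dictionary, the endpoint addresses are made up, and the class names (`Router`, `ServerSideClient`, `ClientSideClient`) are hypothetical and not taken from any product. In the server-side case the client only knows the router; in the client-side case the client reads the registry and picks an endpoint itself.

```python
from typing import Dict, List

# Hypothetical registry: service name -> list of "host:port" endpoints.
Registry = Dict[str, List[str]]


def round_robin(endpoints: List[str], counter: int) -> str:
    """Pick an endpoint by cycling through the list (simple load balancing)."""
    return endpoints[counter % len(endpoints)]


class Router:
    """Server-side discovery: the router/load balancer queries the registry."""

    def __init__(self, registry: Registry) -> None:
        self.registry = registry
        self.counter = 0

    def handle(self, service: str, request: str) -> str:
        endpoint = round_robin(self.registry[service], self.counter)
        self.counter += 1
        return f"{request} -> {endpoint}"


class ServerSideClient:
    """Knows only the router; contains no lookup logic of its own."""

    def __init__(self, router: Router) -> None:
        self.router = router

    def call(self, service: str, request: str) -> str:
        # Extra hop: client -> router -> service instance.
        return self.router.handle(service, request)


class ClientSideClient:
    """Queries the registry directly and load-balances on its own."""

    def __init__(self, registry: Registry) -> None:
        self.registry = registry
        self.counter = 0

    def call(self, service: str, request: str) -> str:
        # No router in the path: client -> service instance.
        endpoint = round_robin(self.registry[service], self.counter)
        self.counter += 1
        return f"{request} -> {endpoint}"


if __name__ == "__main__":
    registry: Registry = {"orders": ["10.0.0.5:8080", "10.0.0.6:8080"]}
    print(ServerSideClient(Router(registry)).call("orders", "GET /orders/1"))
    print(ClientSideClient(registry).call("orders", "GET /orders/1"))
```

Both clients reach the same instances; what changes is where the lookup and load-balancing logic lives, which is the trade-off behind the advantages of each approach.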

How does Service Discovery Work?

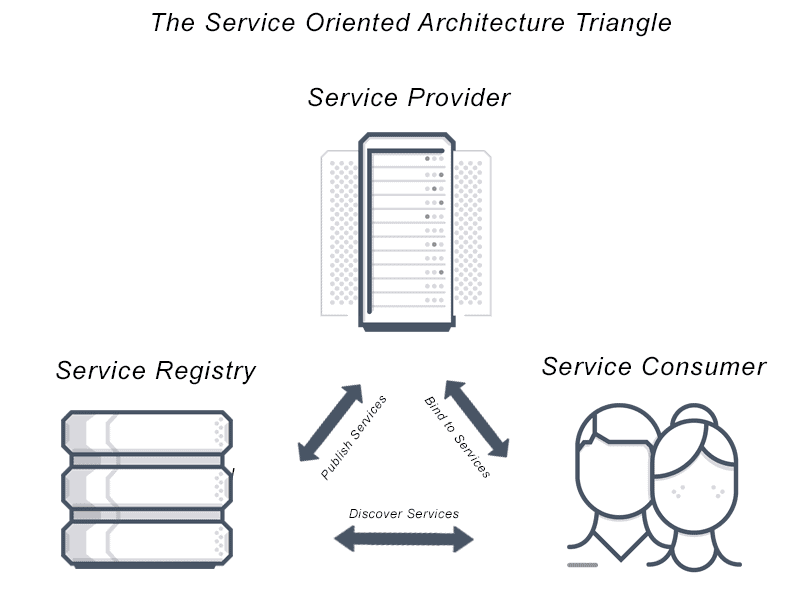

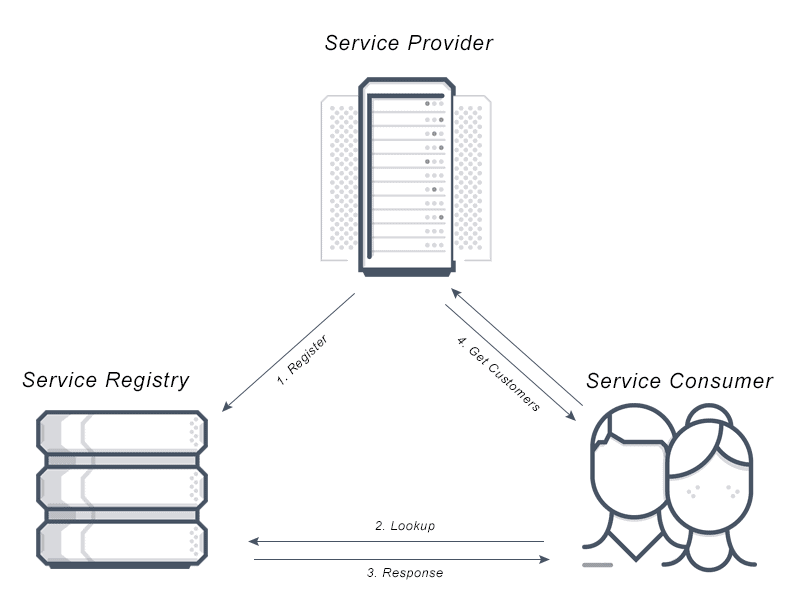

There are three components to Service Discovery: the service provider, the service consumer and the service registry.

1) The Service Provider registers itself with the service registry when it enters the system and de-registers itself when it leaves the system.

2) The Service Consumer gets the location of a provider from the service registry and then connects to that service provider.

3) The Service Registry is a database that contains the network locations of service instances. The service registry needs to be highly available and up to date so that the network locations clients obtain from it are valid. A service registry typically consists of a cluster of servers that use a replication protocol to maintain consistency.
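The interaction between the three components can be sketched in a few lines. This is a minimal sketch, assuming an in-memory, single-node registry; a production registry would be replicated across a cluster of servers, which is omitted here. The class and method names (`ServiceRegistry`, `register`, `deregister`, `lookup`, `ServiceProvider`, `ServiceConsumer`) are hypothetical.

```python
from typing import Dict, List, Set


class ServiceRegistry:
    """Database of network locations, keyed by service name (single-node sketch)."""

    def __init__(self) -> None:
        self._instances: Dict[str, Set[str]] = {}

    def register(self, service: str, address: str) -> None:
        self._instances.setdefault(service, set()).add(address)

    def deregister(self, service: str, address: str) -> None:
        instances = self._instances.get(service)
        if instances is not None:
            instances.discard(address)
            if not instances:
                del self._instances[service]

    def lookup(self, service: str) -> List[str]:
        return sorted(self._instances.get(service, set()))


class ServiceProvider:
    """Registers on entering the system and de-registers on leaving it."""

    def __init__(self, registry: ServiceRegistry, service: str, address: str) -> None:
        self.registry = registry
        self.service = service
        self.address = address

    def start(self) -> None:
        self.registry.register(self.service, self.address)

    def stop(self) -> None:
        self.registry.deregister(self.service, self.address)


class ServiceConsumer:
    """Gets a provider's location from the registry, then connects to it."""

    def __init__(self, registry: ServiceRegistry) -> None:
        self.registry = registry

    def connect(self, service: str) -> str:
        addresses = self.registry.lookup(service)
        if not addresses:
            raise LookupError(f"no instances of {service!r} registered")
        # A real consumer would open a connection here; the sketch returns the address.
        return addresses[0]
```

For example, after `ServiceProvider(registry, "payments", "10.0.1.7:9000").start()`, a `ServiceConsumer` can resolve `"payments"` to that address; once the provider calls `stop()`, the lookup fails until another instance registers.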

What is Service Discovery in Microservices?

Microservices service discovery is a way for applications and microservices to locate each other on a network. Service discovery implementations in a microservices architecture include both:

• a central server (or servers) that maintain a global view of addresses.

• clients that connect to the central server to update and retrieve addresses.
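One common way to keep the central server's global view current is to have clients periodically refresh their own entry and let stale entries expire. The heartbeat-with-TTL mechanism shown here is an assumption chosen for illustration, not something the article specifies, and the names `CentralServer` and `DiscoveryClient` are hypothetical. Time is passed in explicitly as `now` so the sketch is deterministic; a real client might use `time.monotonic()`.

```python
from typing import Dict, List, Tuple


class CentralServer:
    """Keeps a global view of addresses; entries expire unless refreshed."""

    def __init__(self, ttl_seconds: float) -> None:
        self.ttl = ttl_seconds
        # (service, address) -> time of the most recent update
        self._last_seen: Dict[Tuple[str, str], float] = {}

    def update(self, service: str, address: str, now: float) -> None:
        self._last_seen[(service, address)] = now

    def retrieve(self, service: str, now: float) -> List[str]:
        self._expire(now)
        return sorted(addr for (svc, addr) in self._last_seen if svc == service)

    def _expire(self, now: float) -> None:
        # Drop entries not refreshed within the TTL window.
        stale = [key for key, seen in self._last_seen.items() if now - seen > self.ttl]
        for key in stale:
            del self._last_seen[key]


class DiscoveryClient:
    """Updates its own address on the server and retrieves other addresses."""

    def __init__(self, server: CentralServer, service: str, address: str) -> None:
        self.server = server
        self.service = service
        self.address = address

    def heartbeat(self, now: float) -> None:
        self.server.update(self.service, self.address, now)

    def find(self, service: str, now: float) -> List[str]:
        return self.server.retrieve(service, now)
```

With a 30-second TTL, an instance that stops sending heartbeats disappears from lookups about 30 seconds after its last update, so clients stop being directed to instances that have silently failed.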

What are the Advantages of Service Discovery (Server-side & Client-side)?

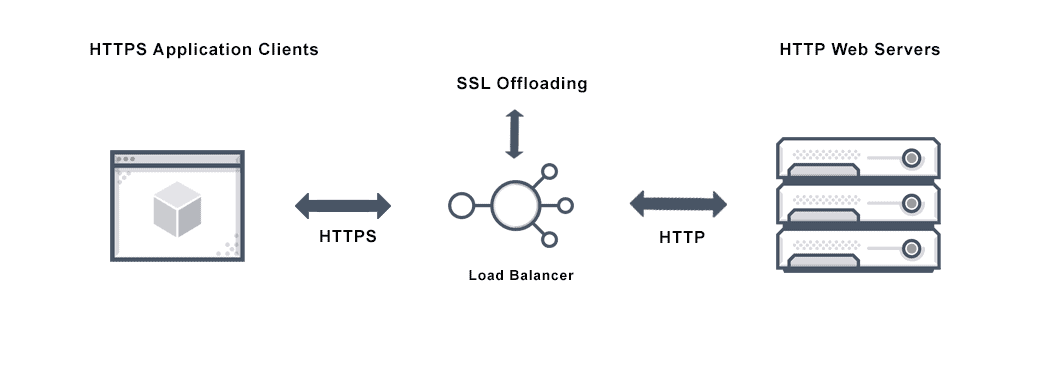

The advantage of Server-side service discovery is that it makes the client application lighter: the client does not have to handle the lookup procedure and simply sends its requests for services to the router.

The advantage of Client-side service discovery is that the client application's traffic does not have to pass through a router or a load balancer, so it avoids that extra hop.

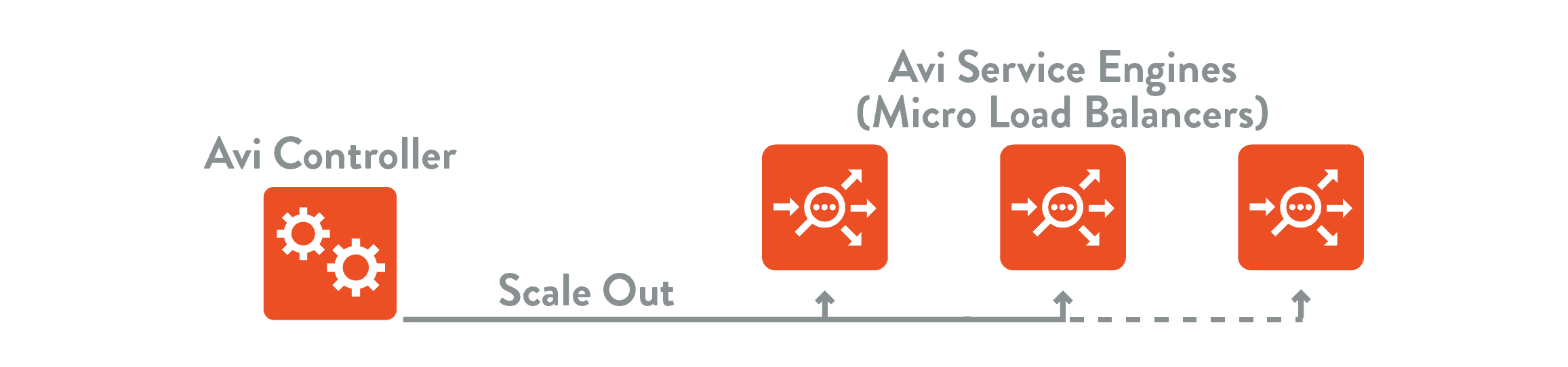

Does Avi Offer Service Discovery?

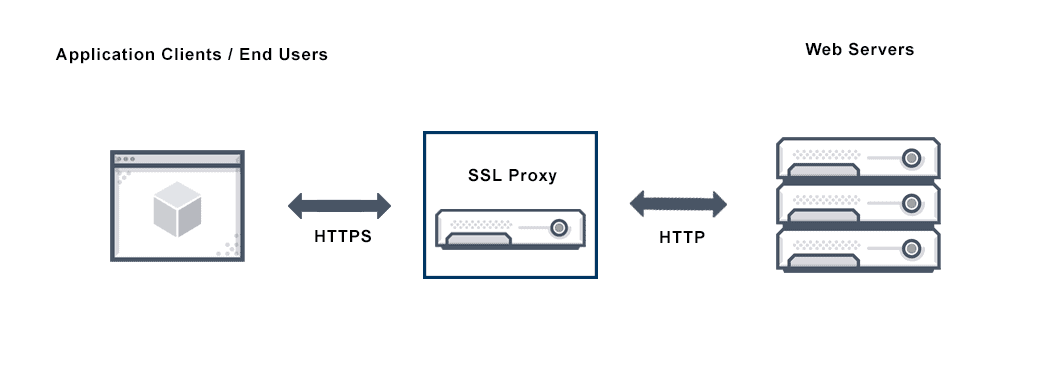

Yes. Avi offers service discovery that automatically maps service host/domain names to the virtual IP addresses (VIPs) where they can be accessed, and presents these mappings in a visual, dynamic “application map”. Service discovery bridges the gap between a service’s name and its access information (IP address) by maintaining a dynamic mapping between the two. Clients of any service (browsers, apps, or other services) use standard DNS mechanisms to obtain service IP addresses. The service discovery database must keep this mapping current as services are created and destroyed. The application’s “global state” (the available service IP addresses across sites and regions) also resides in the service discovery database and is accessible through DNS.
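The name-to-VIP mapping described above can be illustrated with a small in-memory model. This is a hypothetical sketch of the concept only, not Avi's implementation or API: `ServiceNameDatabase` and its methods are invented names, the IP addresses come from documentation ranges, and a real system would answer these lookups over the DNS protocol (clients would use a standard resolver such as Python's `socket.getaddrinfo`) rather than through a method call.

```python
from typing import Dict, List


class ServiceNameDatabase:
    """Hypothetical name -> VIP mapping, tracked per site."""

    def __init__(self) -> None:
        # fqdn -> {site -> vip}; DNS names are case-insensitive, so keys are lowercased.
        self._records: Dict[str, Dict[str, str]] = {}

    def service_created(self, fqdn: str, site: str, vip: str) -> None:
        self._records.setdefault(fqdn.lower(), {})[site] = vip

    def service_destroyed(self, fqdn: str, site: str) -> None:
        sites = self._records.get(fqdn.lower())
        if sites is not None:
            sites.pop(site, None)
            if not sites:
                del self._records[fqdn.lower()]

    def resolve(self, fqdn: str) -> List[str]:
        """Answer a DNS-style address query: every VIP for the name, across all sites."""
        return sorted(self._records.get(fqdn.lower(), {}).values())
```

Keeping one entry per site is what lets the database hold the application's global state: destroying the service at one site removes only that VIP, while lookups continue to return the VIPs still available elsewhere.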
