HTTP Compression Definition

HTTP compression can improve website performance. Depending on the document, it can reduce size by up to 70 percent, which lowers bandwidth requirements. Over time, servers and clients add support for new algorithms, and existing algorithms become more efficient.

In practice, both servers and browsers already implement HTTP compression methods, so web developers do not need to implement them; they only need to enable HTTP compression in the web server configuration.

There are three different levels of HTTP compression:

- Specific optimized methods compress some file formats;

- The system compresses the resource and transmits it in compressed form from end to end at the HTTP level;

- The system can define compression between two nodes at the HTTP connection level.

File format compression

Each type of data has some inherent wasted space or redundancy. Text typically carries as much as 60 percent redundancy, and video, audio, and other media types carry much more. Unlike with text, the need to reclaim space and optimize storage became apparent very early on for these media, which consume large amounts of storage. For this reason, engineers designed optimized compression algorithms into the file formats themselves.

There are two basic kinds of compression algorithms for files, lossless and lossy:

- With lossless compression, a compression-decompression cycle returns data that matches the original byte for byte. PNG and GIF images use lossless compression.

- With lossy compression, the cycle alters the original data, ideally in a way the user cannot perceive. JPEG and the video formats used on the web are lossy.

- Some formats, like WebP, can be used for both lossless and lossy compression. Lossy algorithms can typically be configured to compress more or less, trading file size against quality. For ideal site performance, balance maximum compression with desired quality levels.

Lossy compression algorithms tend to be more efficient than lossless algorithms.

End-to-end compression

End-to-end compression yields the largest performance improvements for sites. The server compresses the body of a message, and the body stays unchanged until the message reaches the client; intermediate nodes of all kinds leave it unaltered. All modern servers and browsers support end-to-end compression, so the only decision is which text-optimized compression algorithm the organization wants to use.

Hop-by-hop compression

Though similar to end-to-end compression, hop-by-hop compression differs in one fundamental way: the compression is applied to the body of the message between any two nodes on the path between the client and the server, not to the resource on the server, so it doesn’t produce a specific, transmissible representation in the same way. Successive connections between intermediate nodes might apply different compressions. In practice, hop-by-hop compression is transparent to both client and server and is rarely used.

What is HTTP Compression?

It is possible to improve the web performance of any network-based application by reducing the server to client data transfer time. There are two ways to improve page load times:

- Using cache control to reduce how often the server has to send data;

- Using HTTP compression to reduce the size of transferred data.

Just like it sounds, before a file is transmitted to a client, HTTP compression squeezes its size on the server, reducing page load times and making file transfers faster. There are two major categories of compression: lossless and lossy.

Lossy compression creates a data surrogate, so reversing the process with lossy decompression does not permit retrieval of the original data. Depending on the compression algorithm quality, the surrogate resembles the original more or less closely. In browsers, this technique is primarily used with the JPEG image, MPEG video, and MP3 audio file formats which are all more forgiving of dropped details in terms of what humans perceive. The resulting data size savings is significant.

In contrast, lossless compression followed by decompression yields an identical, byte-for-byte copy of the original data. Many file formats throughout the web platform use lossless compression internally, and the HTTP layer uses it for text. Some relevant file formats for lossless compression include GIF, PDF, PNG, SWF, and WOFF.
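As a minimal sketch of the byte-for-byte property, the following Python snippet compresses and restores a sample payload with the standard `zlib` module. The sample markup is made up for illustration.

```python
import zlib

# Hypothetical sample payload: repetitive markup, as is typical for HTML.
original = b"<p>Hello, HTTP compression!</p>" * 100

compressed = zlib.compress(original)
restored = zlib.decompress(compressed)

# Lossless: the restored bytes are identical to the original bytes.
print(f"original: {len(original)} bytes, compressed: {len(compressed)} bytes")
print("identical after round trip:", restored == original)
```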

To improve web performance, activate compression for all files except those that are already compressed. Attempting to activate HTTP compression for already compressed files like GIF or ZIP can be unproductive, wasting server time, and can even increase the size of the response message.
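The effect can be sketched in Python with the standard `gzip` module. This example assumes that random bytes are a reasonable stand-in for an already compressed file, since both have very little redundancy left; the sample text is invented.

```python
import gzip
import os

# Uncompressed, repetitive text: a good candidate for HTTP compression.
text = ("<li>An uncompressed text resource such as HTML.</li>\n" * 200).encode("utf-8")

# Assumption: random bytes stand in for an already compressed file
# (for example a ZIP archive), which has almost no redundancy left.
already_compressed = os.urandom(len(text))

for label, payload in (("text", text), ("already compressed", already_compressed)):
    result = gzip.compress(payload)
    print(f"{label}: {len(payload)} -> {len(result)} bytes")
```

The text shrinks substantially, while the high-entropy payload comes out slightly larger because Gzip still adds its header and checksum.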

Both client and server must agree on which compression format(s) they support and use, so that files can be decompressed properly. The most commonly used HTTP body compression formats are Brotli (br), Gzip, and DEFLATE, but we will discuss more formats and algorithms below.

The client states which content encodings it understands in the Accept-Encoding request header. The server responds with the Content-Encoding response header, which indicates which compression algorithm was used.
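The negotiation can be sketched as a small Python function. This is a simplified illustration, not a complete parser: the function names and the server's preference order (`br`, then `gzip`, then `deflate`) are assumptions made for the example.

```python
from typing import Dict, Optional, Sequence


def parse_accept_encoding(header: str) -> Dict[str, float]:
    """Parse an Accept-Encoding value into {coding: q-value}."""
    codings: Dict[str, float] = {}
    for item in header.split(","):
        name, _, params = item.strip().partition(";")
        if not name:
            continue
        quality = 1.0  # a coding without an explicit q-value counts as 1.0
        params = params.strip()
        if params.startswith("q="):
            try:
                quality = float(params[2:])
            except ValueError:
                quality = 0.0  # treat a malformed q-value as "not acceptable"
        codings[name.strip().lower()] = quality
    return codings


def choose_encoding(
    header: str, supported: Sequence[str] = ("br", "gzip", "deflate")
) -> Optional[str]:
    """Pick the Content-Encoding to use, or None to send the body as-is."""
    accepted = parse_accept_encoding(header)
    best, best_q = None, 0.0
    for coding in supported:  # iterate in (hypothetical) server preference order
        quality = accepted.get(coding, accepted.get("*", 0.0))
        if quality > best_q:
            best, best_q = coding, quality
    return best


print(choose_encoding("gzip, deflate, br"))     # all equal: server preference wins
print(choose_encoding("gzip;q=0.8, br;q=0.1"))  # client prefers gzip
```

The server would then compress the body with the chosen algorithm and name it in the Content-Encoding response header.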

Those steps cover compression of the content in the body of an HTTP message, but do not address the HTTP headers themselves. Yet reducing the size of the HTTP headers can greatly impact performance, since they are sent with every response.

To sum up, you can improve performance by systematically compressing uncompressed content like text. You can follow up by using HTTP/2 and HPACK, its mechanism for secure HTTP header compression, where possible. Finally, to guarantee optimal user experience and optimize digital services delivery, monitor how web servers, CDNs, and other third party service providers deliver content.

Tips and Best HTTP Compression Practices

Here are some tips and best practices surrounding HTTP compression.

Content Encoding Gzip vs DEFLATE

It is easy to discuss HTTP compression as if it were a monolithic feature. In fact, HTTP only defines how a web client and server can agree on a compression scheme, using the Accept-Encoding and Content-Encoding headers, to transmit content. The most commonly used HTTP body compression formats are Brotli (br), Gzip, and DEFLATE, with DEFLATE and Gzip mostly dominating the scene.

The patent-free DEFLATE algorithm for lossless data compression combines Huffman encoding and the LZ77 algorithm and is specified in RFC 1951. DEFLATE is easy to implement and compresses many data types effectively.

There are numerous open source implementations of DEFLATE, with zlib being the standard implementation library most people use. Zlib provides:

- data compression and decompression functions using DEFLATE/INFLATE

- the zlib data format which wraps DEFLATE compressed data with a checksum and header

Gzip is another compression tool that uses DEFLATE to compress data. More accurately, most Gzip implementations use the zlib library internally to DEFLATE or INFLATE. Gzip has its own data format, also named Gzip and specified in RFC 1952, which wraps DEFLATE compressed data with a checksum and header.

Early implementations disagreed on whether the DEFLATE content encoding meant raw DEFLATE data or zlib-wrapped DEFLATE data. Because of these early problems, some users prefer to only use Gzip.
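The relationship between the three formats can be sketched with Python's `zlib` module, which selects the wrapper through its `wbits` parameter. The sample data and the helper name `deflate_with` are invented for the example.

```python
import gzip
import zlib

data = b"HTTP compression " * 50


def deflate_with(wbits: int) -> bytes:
    """Compress the sample data with DEFLATE, wrapped according to wbits."""
    compressor = zlib.compressobj(6, zlib.DEFLATED, wbits)
    return compressor.compress(data) + compressor.flush()


raw = deflate_with(-15)          # raw DEFLATE stream: no header, no checksum
zlib_wrapped = deflate_with(15)  # zlib format: small header plus checksum
gzip_wrapped = deflate_with(31)  # Gzip format (16 + 15): larger header plus checksum

for label, blob in (("raw", raw), ("zlib", zlib_wrapped), ("gzip", gzip_wrapped)):
    print(f"{label:5} {len(blob):4} bytes, starts with {blob[:2].hex()}")

# Each wrapper needs the matching setting (or tool) to decompress.
assert zlib.decompress(raw, -15) == data
assert zlib.decompress(zlib_wrapped) == data
assert gzip.decompress(gzip_wrapped) == data
```

All three outputs carry the same kind of DEFLATE payload; only the wrapping differs, which is why a receiver expecting one format can fail on another.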

HPACK

Typically, HTTP headers are not compressed, as HTTP content-encoding based compression applies to the response body only. This leaves a significant weakness in an HTTP compression plan. Similarly, content-encoding is rarely applied to request bodies.

Header compression was introduced with the HTTP/2 protocol in the form of HPACK. HPACK was designed to resist attacks that targeted the SPDY protocol, such as the CRIME exploit, but it is limited in that it only works efficiently where the headers change little from message to message. Before HPACK, attempts at header compression used Gzip, but this kind of implementation introduced vulnerabilities that exposed it to attacks. HTTP/2 draft 14 enables HPACK for headers, but for message bodies it’s often best to use content-encoding, since draft 12 removed per-frame Gzip compression for data.

Compressing Request Bodies

Although HTTP allows clients to compress request bodies with the same content-encoding mechanism used for HTTP responses, in practice, this feature is mostly unused by browsers and used only rarely by other types of HTTP clients. There are a few reasons for this.

Most importantly, a client has no way of knowing whether a server accepts compressed requests, and many do not. In contrast, a server can examine the Accept-Encoding request header to determine whether a client accepts the compressed version of responses.

As a result, HTML forms do not yet allow authors to request compression of request bodies.

Modern web applications can use script and browser APIs as a workaround:

- Load a file for upload into JavaScript using the HTML5 File API

- Use a script-based compression engine to compress the file

- Upload the compressed file to the server
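The same idea applies to non-browser HTTP clients. As a rough sketch in Python rather than browser JavaScript, the client compresses the body itself and labels it with a Content-Encoding header. The URL and payload are hypothetical, the request is only built and not sent, and the server is assumed to accept Gzip-encoded request bodies.

```python
import gzip
import json
import urllib.request


def build_compressed_upload(url: str, payload: dict) -> urllib.request.Request:
    """Build a POST request whose JSON body is Gzip-compressed by the client."""
    body = gzip.compress(json.dumps(payload).encode("utf-8"))
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            # Tells the server how the body was encoded. Assumption: the
            # server is known to accept compressed request bodies.
            "Content-Encoding": "gzip",
        },
    )


# Hypothetical endpoint and payload, for illustration only.
request = build_compressed_upload(
    "https://example.com/upload", {"items": list(range(1000))}
)
print(len(request.data), "compressed bytes ready to upload")
```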

Compression Bombs

Servers may be wary of supporting compressed uploads due to “compression bombs.” The term refers to crafted streams that exploit the peak compression ratio of the DEFLATE algorithm, which approaches 1032 to 1. This means a 1 megabyte upload can rapidly become a 1 gigabyte explosion on a server. These attacks are more potent against servers, where a single CPU must serve thousands of users simultaneously, than against client applications like browsers.

Just like any other malicious activity, protecting against these kinds of crafted compression streams demands careful planning and additional diligence during implementation.
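One common defensive measure is to cap how much data the server is willing to decompress. The following Python sketch uses the `max_length` argument of `zlib`'s decompression object; the function name, exception class, and 10 MiB default limit are assumptions chosen for the example, not recommended values.

```python
import gzip
import zlib

MAX_DECOMPRESSED_BYTES = 10 * 1024 * 1024  # hypothetical limit: 10 MiB


class DecompressionLimitExceeded(Exception):
    """Raised when a compressed upload expands beyond the allowed size."""


def safe_gunzip(compressed: bytes, limit: int = MAX_DECOMPRESSED_BYTES) -> bytes:
    """Decompress a Gzip body, refusing to produce more than `limit` bytes."""
    decompressor = zlib.decompressobj(16 + zlib.MAX_WBITS)  # expect Gzip framing
    # Ask for at most limit + 1 bytes: one extra byte is enough to prove overflow
    # without ever materializing the full expanded payload.
    output = decompressor.decompress(compressed, limit + 1)
    if len(output) > limit:
        raise DecompressionLimitExceeded(f"body expands beyond {limit} bytes")
    if not decompressor.eof:
        raise ValueError("truncated Gzip stream")
    return output


# A few kilobytes of compressed zeros expand to 5,000,000 bytes.
bomb = gzip.compress(bytes(5_000_000))
try:
    safe_gunzip(bomb, limit=1_000_000)
except DecompressionLimitExceeded as error:
    print(f"rejected {len(bomb)}-byte upload: {error}")
```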

Best Practice: Minify, then Compress

Minification is the removal of all unnecessary characters from the source code without changing its functionality. Minifying your scripts before compression is a critical first step, because after decompression, the files still use whatever memory they originally required. This also applies to image file types. Minification saves memory on clients, including memory-constrained mobile devices, and across the system.
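A small Python sketch can illustrate why the order matters. The `naive_minify` function below is a toy written for this example (it would break on real-world scripts, for instance on `//` inside string literals); a production setup would use a real minifier.

```python
import gzip
import re

# Hypothetical script with comments and indentation, repeated to give it some size.
source = """
// Add two numbers and return the result.
function add(first, second) {
    return first + second;
}
""" * 20


def naive_minify(js: str) -> str:
    """Toy minifier: drop // comments, indentation, and line breaks."""
    without_comments = re.sub(r"//[^\n]*", "", js)
    lines = (line.strip() for line in without_comments.splitlines())
    return "".join(line for line in lines if line)


minified = naive_minify(source)

for label, script in (("original", source), ("minified", minified)):
    raw = script.encode("utf-8")
    on_the_wire = gzip.compress(raw)
    in_memory = gzip.decompress(on_the_wire)
    # Compression only shrinks the transfer; the client still holds the
    # full decompressed script, so minifying first reduces that size too.
    print(f"{label}: {len(on_the_wire)} bytes sent, {len(in_memory)} bytes after decompression")
```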

Challenges and Benefits of HTTP Compression

HTTP compression is a relatively simple feature of HTTP, yet there are several challenges to address to ensure its proper use:

- Compressing only compressible content

- Selecting the correct compression scheme for your visitors

- Properly configuring the web server to send compressed content to capable clients

- Maintaining HTTP compression security

Compressing Only Compressible Content

Enable HTTP response compression only for content that is not already natively compressed. Text resources such as CSS, HTML, and JavaScript are not natively compressed file formats, so they certainly should be compressed, but a focus solely on these three types of files is short-sighted.

Other common web text resource types that should be subject to HTTP compression include:

- HTML Components (HTC files). HTC files are a proprietary feature of Internet Explorer which bundle code, markup, and style information for CSS behaviors and are used in fixes or to support added functionality.

- ICO files. ICO files are often used as favicons, but like BMP images, they are not natively compressed.

- JSON. A subset of JavaScript, JSON is used for API calls as a data format wrapper.

- News feeds. Both Atom and RSS feeds are XML documents (see below).

- Plain Text. All plain text files should be compressed, and they come in many forms, such as LICENSE, Markdown, and README files.

- Robots.txt. This text file tells search engines where to crawl on a website. Because search engine crawlers access it repeatedly and it can become very large, robots.txt can consume massive bandwidth unbeknownst to human users, which makes it a major opportunity for HTTP compression. Since robots.txt is not usually accessed by humans and does not appear in JavaScript-based web analytics logs, it is often forgotten.

- SVG images. SVG images are just XML documents and are not natively compressed, so serve them with HTTP compression. Because they have a different file extension and MIME type from other XML documents, it’s easy to remember to compress XML documents and forget SVG images. It’s also easy to have SVG images on a website without realizing it.

- XML. XML is structured text used for API calls as a data format wrapper or in standalone files such as Google’s sitemap.xml or Flash’s crossdomain.xml.

Avoid using HTTP compression on natively compressed content for two reasons. First, HTTP data compression schemes have a CPU cost. If there is no compression left to achieve, that effort is wasted.

Second, and worse, applying HTTP compression to something that’s natively compressed won’t make it smaller, and might do just the opposite by adding compression dictionaries, headers, and checksums to the response body. More comprehensive monitoring platforms can check HTTP compression performance in real time and alert users to wasted CPU time or to responses that grow larger, and some include specific HTTP compression test capabilities. Both issues can typically be resolved by correcting the HTTP compression settings in the web server configuration.

Next, review how your web server is configured to compress content. Most web servers allow users to specify a list of MIME types, a list of file extensions, or both, to compress. Review this list and configure the server carefully based on what you find. Ensure that an error such as a missing MIME type declaration or file extension won’t allow uncompressed content to leak through.
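The review can be thought of as classifying every MIME type the site serves. The Python sketch below is illustrative only: both lists are short, hypothetical samples rather than a complete or authoritative configuration, and real servers express this in their own configuration syntax.

```python
# Illustrative sample lists, not a complete configuration.
COMPRESSIBLE_TYPES = {
    "text/html", "text/css", "text/plain", "text/xml", "text/x-component",
    "application/javascript", "application/json", "application/xml",
    "application/rss+xml", "application/atom+xml",
    "image/svg+xml", "image/x-icon",
}
ALREADY_COMPRESSED_TYPES = {
    "image/jpeg", "image/png", "image/gif", "image/webp",
    "application/zip", "application/gzip", "video/mp4", "audio/mpeg",
}


def classify(content_type: str) -> str:
    """Return 'compress', 'skip', or 'unknown' for a Content-Type value."""
    # Drop parameters such as "; charset=utf-8" and normalize case.
    media_type = content_type.split(";", 1)[0].strip().lower()
    if media_type in COMPRESSIBLE_TYPES:
        return "compress"
    if media_type in ALREADY_COMPRESSED_TYPES:
        return "skip"
    # Not on either list: flag it for review so compressible content
    # doesn't slip through uncompressed because of a missing entry.
    return "unknown"


for served in ("text/html; charset=utf-8", "image/svg+xml", "image/jpeg", "font/ttf"):
    print(f"{served}: {classify(served)}")
```

Treating unlisted types as "unknown" rather than silently skipping them makes gaps in the configuration visible during review.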

To sum up:

- Be sure to serve all non-natively compressed content using HTTP compression

- Never waste CPU cycles, load time, and bandwidth compressing already compressed content

- Ensure compatibility by sticking to Gzip

- Verify that HTTP compression settings match application content and structure reflected in code and server configuration

- Validate that the configuration works using tools

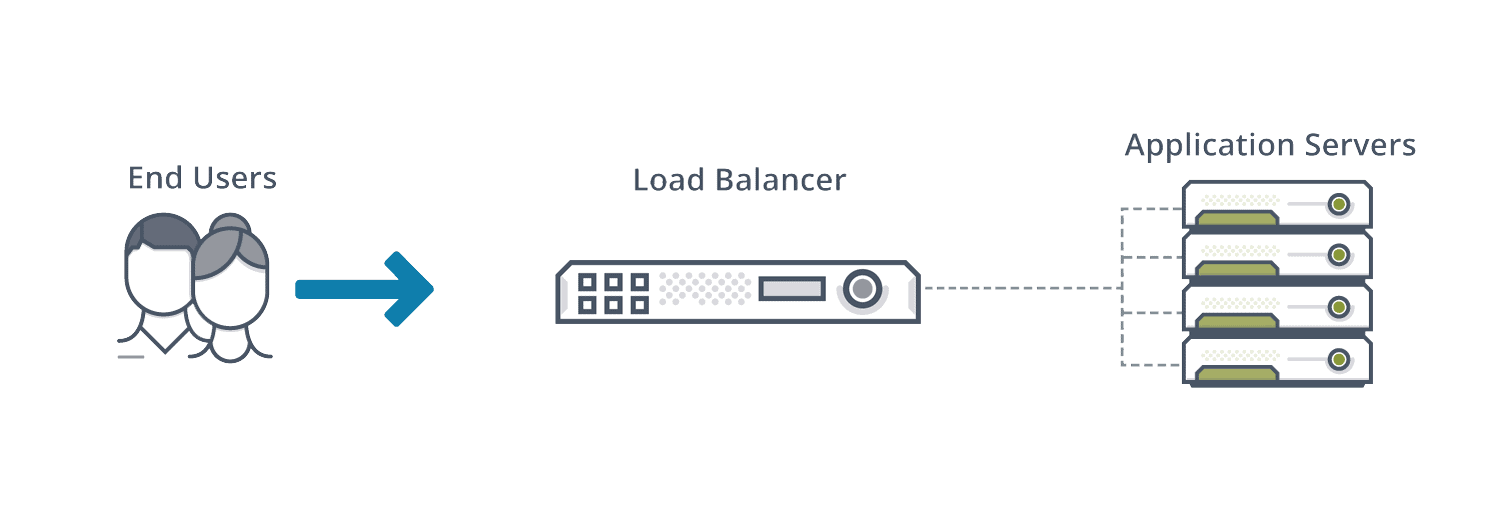

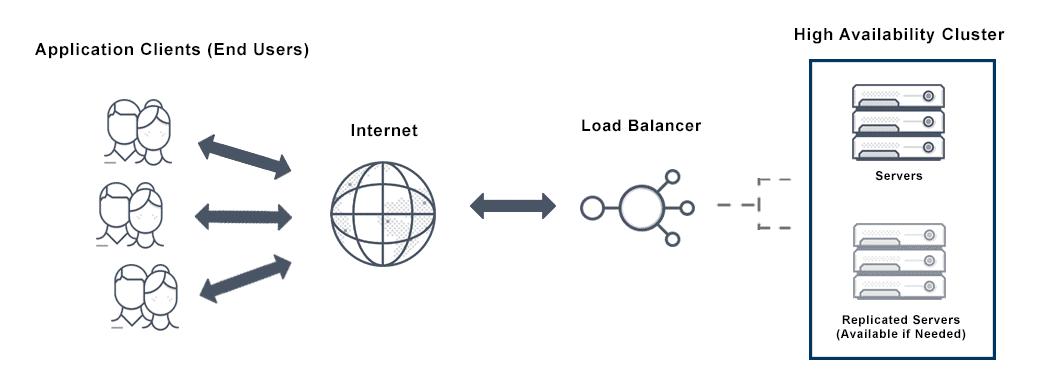

Does The VMware NSX Advanced Load Balancer Offer HTTP Compression?

Yes. The VMware NSX Advanced Load Balancer offers two options for HTTP compression: normal compression and aggressive compression.

The VMware NSX Advanced Load Balancer’s normal compression option uses Gzip level 1; it consumes fewer CPU cycles and reduces the size of text content by about 75%. The aggressive compression option uses Gzip level 6 and reduces the size of text content by about 80%.
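The trade-off between Gzip levels can be explored with a short Python sketch. This measures Python's own `gzip` module on an invented HTML page, not the load balancer itself, so the percentages it prints depend on the content and will not necessarily match the figures above.

```python
import gzip


def savings_by_level(data: bytes, levels=(1, 6)) -> dict:
    """Return the fraction of bytes saved at each Gzip compression level."""
    return {
        level: 1 - len(gzip.compress(data, compresslevel=level)) / len(data)
        for level in levels
    }


# Hypothetical page: a large HTML table with slightly varying rows.
rows = "".join(
    f"<tr><td>Item {i}</td><td>{i * 7 % 13}</td></tr>\n" for i in range(2000)
)
page = f"<html><body><table>\n{rows}</table></body></html>".encode("utf-8")

for level, saved in savings_by_level(page).items():
    print(f"Gzip level {level}: {saved:.0%} smaller")
```

Higher levels typically save a little more at the cost of more CPU time, which is the trade-off the two options expose.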

The VMware NSX Advanced Load Balancer also offers two compression modes. In auto mode, VMware NSX Advanced Load Balancer selects the optimal compression setting, tuning it dynamically based on the clients and on the SE’s available CPU resources.

Custom mode gives users more granular control over who should receive what level of compression, and enables the creation of custom filters with different levels of compression for different clients. For example, create filters that apply aggressive compression for slower mobile clients while ensuring compression is disabled for faster clients on the local intranet.

Learn more about HTTP compression and VMware NSX Advanced Load Balancer here.
