Enterprise Application Security Definition

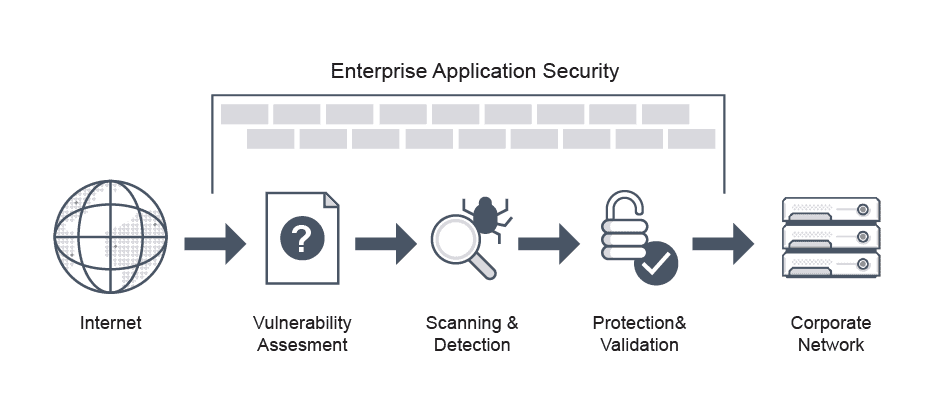

Enterprise application security, or enterprise-level appsec, is the process of securing applications to prevent breaches. The basics include measuring the severity of vulnerabilities with CVSS scores and implementing risk response protocols and patch management.
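As a rough illustration of how CVSS scores can feed patch management, the sketch below maps a CVSS v3.x base score to its qualitative severity rating and orders findings for patching. The `Finding` class, the `patch_queue` helper, and the `APP-...` identifiers are hypothetical names used only for this example.

```python
from dataclasses import dataclass


def cvss_severity(score: float) -> str:
    """Map a CVSS v3.x base score (0.0-10.0) to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError(f"CVSS base score out of range: {score}")
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    if score < 9.0:
        return "High"
    return "Critical"


@dataclass
class Finding:
    identifier: str  # hypothetical tracking ID, not a real CVE
    score: float     # CVSS base score


def patch_queue(findings: list[Finding]) -> list[tuple[str, str]]:
    """Order findings from most to least severe for patch planning."""
    ranked = sorted(findings, key=lambda f: f.score, reverse=True)
    return [(f.identifier, cvss_severity(f.score)) for f in ranked]


findings = [
    Finding("APP-101", 5.3),
    Finding("APP-102", 9.8),
    Finding("APP-103", 7.5),
]
print(patch_queue(findings))
# [('APP-102', 'Critical'), ('APP-103', 'High'), ('APP-101', 'Medium')]
```

Sorting on the base score alone is a simplification. In practice, teams often also weigh exploitability in their own environment and the business value of the affected asset.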

According to the Open Worldwide Application Security Project (OWASP), the most critical risks and common security vulnerabilities include broken access control, injection, cryptographic failures, and security misconfiguration.

To prevent these and other risks, enterprise application security best practices include threat modeling to identify potential vulnerabilities and risk assessment to estimate the likelihood of particular types of attacks.
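One common way to turn a risk assessment into a priority list is to score each threat as likelihood multiplied by impact. The sketch below assumes a 1-to-5 scale for both; the `Threat` class and the ratings are illustrative values, not measurements from any real assessment.

```python
from dataclasses import dataclass


@dataclass
class Threat:
    name: str
    likelihood: int  # 1 (rare) to 5 (almost certain); illustrative scale
    impact: int      # 1 (negligible) to 5 (severe); illustrative scale

    @property
    def risk(self) -> int:
        # Simple qualitative risk score: likelihood times impact.
        return self.likelihood * self.impact


def rank_threats(threats: list[Threat]) -> list[Threat]:
    """Return threats ordered from highest to lowest risk score."""
    return sorted(threats, key=lambda t: t.risk, reverse=True)


threats = [
    Threat("security misconfiguration", likelihood=3, impact=3),
    Threat("broken access control", likelihood=4, impact=5),
    Threat("injection", likelihood=3, impact=5),
]
for threat in rank_threats(threats):
    print(f"{threat.risk:>2}  {threat.name}")
```

The ranking is only as good as the ratings behind it, so the threat model that produces the likelihood and impact values deserves periodic review.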

Enterprise Application Security FAQs

What is Enterprise Application Security?

Enterprise-grade application security threats may be device-specific, network-specific, or user-specific.

Device-specific threats. Personal devices used for work under a bring-your-own-device (BYOD) policy, and other personal devices connected to the enterprise network, are points of exposure. Insecure applications and OS vulnerabilities can be an entry point for malware. Third-party applications such as Facebook Messenger or WhatsApp are also threat sources for organizations that use iOS devices.

Network-specific threats. These place all users and devices at risk. Unsecured network connections such as WiFi can expose all connected devices on the network to cyber attacks and threats to supply chains. Using VPNs can mitigate some damage, but network monitoring systems and malware protection are preferred.

User-specific threats. Cyber attacks may arise from both negligent and malicious employees. Even unwittingly, negligent employees can put the organization at risk by clicking on suspicious links, revealing confidential credentials, falling for phishing attacks, and other errors.

Types of App-Specific Enterprise Application Security Threats

Awareness of potential app-specific threats can help mitigate them. Here are some of the common types of app-specific enterprise application security threats:

Injection flaws. Hackers inject malicious queries into database systems to extract information or corrupt the database.

Broken authentication. A broken authentication system is vulnerable to brute force attacks.

Exposed sensitive data. Passing sensitive data such as authentication credentials and credit card information unencrypted exposes it to interception and theft.

Security misconfiguration. Unsecured default settings, incomplete configurations, and open cloud storage are all misconfigurations that attackers can exploit.

Insecure deserialization. Applications that deserialize untrusted or malicious data, that is, convert it back into objects, leave the system vulnerable to injected payloads such as malicious serialized Java objects.

Component vulnerabilities. Libraries, devices, or other components with known vulnerabilities expose IT infrastructure to attacks.
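To make the injection flaw described above concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The `users` table, the two helper functions, and the payload are made up for the example. It contrasts a query built by string concatenation with a parameterized query.

```python
import sqlite3


def find_user_unsafe(conn: sqlite3.Connection, username: str) -> list:
    # Vulnerable: user input is concatenated into the SQL text,
    # so quote characters in the input change the query's meaning.
    query = f"SELECT id, username FROM users WHERE username = '{username}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, username: str) -> list:
    # Safer: the driver binds the value as data, so it is never parsed as SQL.
    return conn.execute(
        "SELECT id, username FROM users WHERE username = ?", (username,)
    ).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
conn.executemany("INSERT INTO users (username) VALUES (?)", [("alice",), ("bob",)])

payload = "nobody' OR '1'='1"
print(len(find_user_unsafe(conn, payload)))  # 2: the injected OR clause matches every row
print(find_user_safe(conn, payload))         # []: no user has that literal name
```

Parameterized queries address this one class of flaw. They do not replace input validation, least-privilege database accounts, or the other controls discussed in this article.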

Enterprise Application Security Requirements

The SAMM (Software Assurance Maturity Model) framework of the Open Worldwide Application Security Project (OWASP) offers open source tools for prioritizing application security development. Organizations can follow SAMM as a guideline for operational assessment during the secure software development lifecycle (SSDLC).

The SAMM toolset helps teams produce graphical representations of the existing enterprise application security capabilities. Using this, the team can then assess how they perform security testing, manage threat modeling, conduct secure code reviews, and manage bugs to resolution.

Although some organizations begin with dynamic application security testing (DAST) just before release, this can lead to delays and extra work for developers. Analyzing code statically as it is written speeds the process and reduces workload.

Over-dependence on either static or dynamic testing alone, or any either/or approach to application security, is unlikely to work for most enterprise-level organizations. Without a more holistic enterprise application security approach in place at every stage, CISOs receive application penetration test results too late in the development lifecycle and bring these findings back to teams that are poorly versed in security and poorly situated to fix vulnerabilities.

Organizations that focus solely on post-development testing will almost certainly allow some defects to slip through. Given the tremendous pressure software teams under deadline face, this is especially troubling if widespread flaws are identified or major changes are needed.

The best approach is to deploy enterprise application security software throughout the process. Test early and test often, with static application security testing (SAST) during coding and DAST later to catch remaining flaws. Both should be followed up with design risk analysis, enterprise application security architecture risk analysis, evaluation of security metrics, and other more mature SDLC testing.
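To give a feel for what static analysis during coding means, the toy sketch below parses Python source without running it and flags direct calls to `eval` and `exec`. It is a deliberately tiny stand-in for a real SAST tool; the `RISKY_CALLS` rule set and the `find_risky_calls` helper are assumptions made for this example.

```python
import ast

RISKY_CALLS = {"eval", "exec"}  # illustrative rule set, far from complete


def find_risky_calls(source: str) -> list[tuple[int, str]]:
    """Return (line number, function name) for each direct call to a risky builtin."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id in RISKY_CALLS
        ):
            findings.append((node.lineno, node.func.id))
    return sorted(findings)


sample = '''
def run(user_input):
    return eval(user_input)

def ok(x):
    return int(x)
'''
print(find_risky_calls(sample))  # [(3, 'eval')]
```

Because the check never executes the code, it can run on every commit. Production SAST tools apply far richer rules, including data-flow analysis, which this sketch does not attempt.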

Enterprise Application Security Models

The National Institute of Standards and Technology (NIST) Cybersecurity Framework is a set of guidelines that helps organizations develop and improve their cybersecurity posture. The framework sets forth recommendations, guidelines, and standards for identifying, detecting, and preventing cyber-attacks across industries, and it is widely regarded as a gold standard for building cybersecurity programs.

The framework categorizes all cybersecurity capabilities, daily activities, processes, and projects into five core functions: identify, protect, detect, respond, and recover. Version 2.0 of the framework, released in 2024, adds a sixth function, govern.

Enterprise Application Security Best Practices

There are a number of best practices that are part of enterprise application development security:

Adopt the OWASP Top 10. To minimize risk to web applications and produce more secure code, organizations should adopt the OWASP Top 10.

Implement a secure software development lifecycle (SDLC). Integrate security into each phase of the existing development process. The payoff is more secure software, a more secure design with improved coding processes, and significantly reduced costs as a result of early detection and mitigation of vulnerabilities.

Educate stakeholders. Human users are frequently the weak point or source of breaches. Educate users on the use of multi-factor authentication, antivirus programs, VPNs, and firewalls, and on the danger of shared passwords and other secrets.

Conduct regular code reviews and penetration tests. Review code for security flaws as part of routine development, and conduct penetration testing at least annually, based on a well-defined scope and a snapshot of the system at a specific point in time.

Implement a strict access control policy. Give IT admins centralized control over organization-wide access and restrictions to networks, devices, and users.

Enforce strong user authentication. Weak passwords and compromised credentials are among the most common causes of data breaches. The IT team should enforce strong user authentication with access control and policy tools.

Encrypt all data. Unencrypted data is vulnerable to interception, exploitation, and extraction. In-transit data should be secured using TLS (the successor to the now-deprecated SSL) with strong ciphers such as 256-bit AES. Protecting stored data with encryption and application-level access control can help prevent data exploits.

Update just in time. Time updates to software, firmware, and applications according to a defined process. Release the update first in a test environment, fix any breakages, and only then roll it out across the organization in stages.

Identify all points of vulnerability. Document all elements in the IT ecosystem, including applications, hardware, and on-premises and cloud-based network elements, to improve monitoring and tracking and to create transparency.

Make security part of the business lifecycle. Security analysis, repair, testing, and evolution should be part of the business lifecycle. Training and drills for employees as well as tests for software, applications, and hardware should all be part of the IT team’s ongoing tasks.
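As one illustration of the "Encrypt all data" practice for data in transit, the sketch below builds a client-side context with Python's standard `ssl` module that verifies server certificates and refuses protocol versions older than TLS 1.2. TLS is the successor to SSL. The TLS 1.2 floor is an assumption for this example, and the `make_client_context` helper is a hypothetical name; an organization's own policy may require a different minimum.

```python
import ssl


def make_client_context() -> ssl.SSLContext:
    """Build a client-side TLS context that verifies certificates and rejects old protocols."""
    # The default context enables certificate verification and hostname checking.
    context = ssl.create_default_context()
    # Refuse SSLv3, TLS 1.0, and TLS 1.1 (assumed policy for this sketch).
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = make_client_context()
print(context.minimum_version.name)                 # TLSv1_2
print(context.verify_mode == ssl.CERT_REQUIRED)     # True
print(context.check_hostname)                       # True
```

A context like this can be passed to standard-library clients, for example through the `context` argument of `urllib.request.urlopen`, so that every outbound connection shares the same transport policy.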

Does Avi Offer Enterprise Application Security Services?

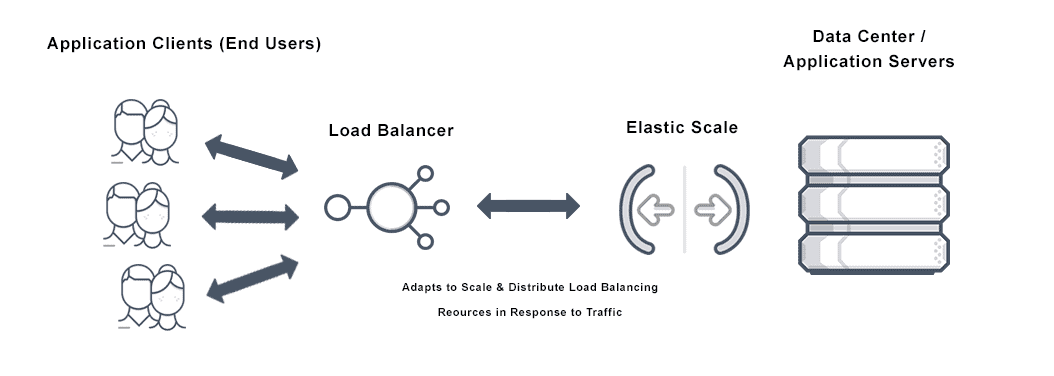

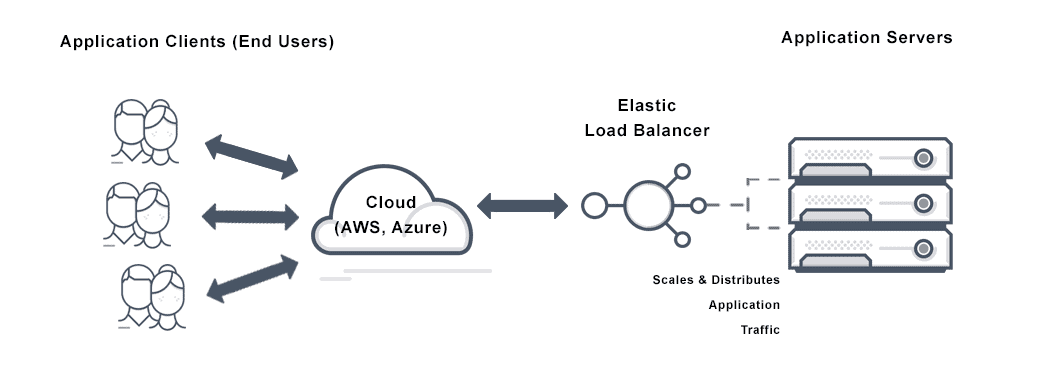

With Avi Networks, consistent and secure enterprise application delivery is easy. One application services platform can deploy any app, anywhere, without friction.

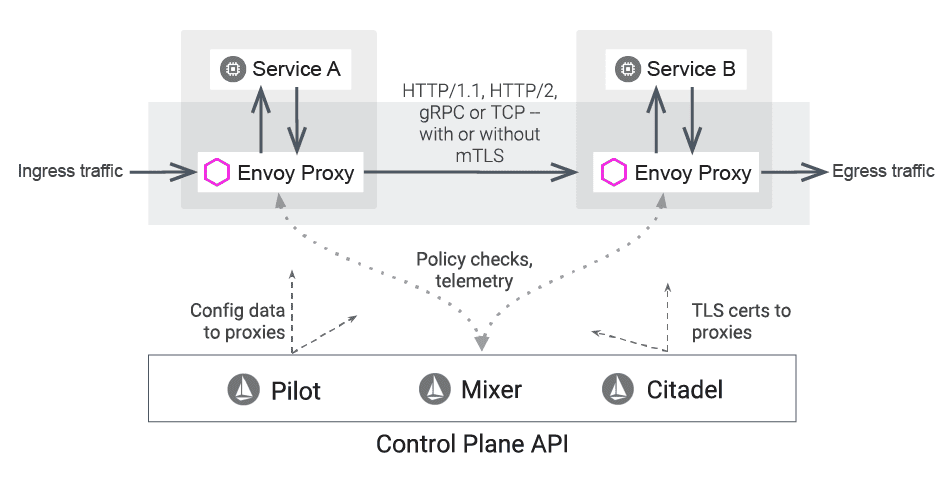

The VMware NSX Advanced Load Balancer (Avi) delivers multi-cloud security to protect applications and microservices from today’s threats and improve ingress control. Avi’s solution provides network and application security with a context-aware web application firewall (WAF) to protect against a broad range of digital threats. Visibility through advanced analytics and security insights helps customize a comprehensive enterprise application security policy per application, microservice, or tenant.