Envoy Definition

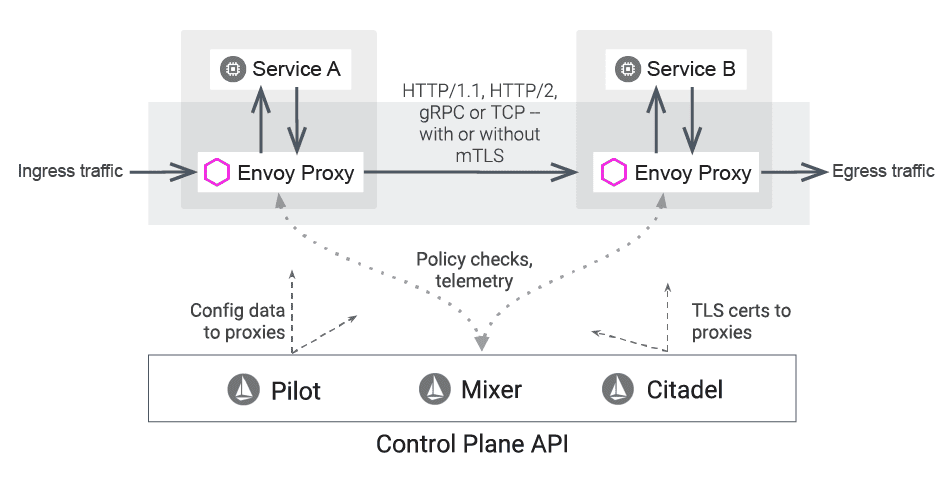

Envoy is an open-source edge and service proxy. Istio uses an extended version of the Envoy proxy, and because Istio also deploys it at the edge of the mesh to handle ingress traffic, it is sometimes thought of as an Envoy ingress controller. The only Istio component that interacts with data plane traffic, the high-performance Envoy proxy was developed in C++ and is designed to mediate inbound and outbound traffic for all services in the Istio service mesh.

Envoy FAQs

What is Envoy?

Networking and observability are the two main challenges that arise when organizations move toward microservices and distributed architecture. Envoy was created at Lyft to cope with these challenges.

The high-performance Envoy proxy, written in C++, was designed both for single applications and services and as a universal data plane and communication bus for large microservice architectures. Similar to both hardware and cloud load balancers, Envoy runs in a platform-agnostic way and abstracts the network, providing common features and making service traffic visible as it flows through the Envoy mesh. Envoy is logically most comparable to a software load balancer.

Although there are various traditional and tested L4 and L7 proxies such as NGINX and HAProxy, Envoy has several additional benefits:

- Developed for modern microservices

- Translates between HTTP/2 and HTTP/1.1

- Proxies any TCP protocol

- Proxies raw data, databases, and WebSockets

- Built-in SSL/TLS support

- Built-in dynamic service discovery and load balancing

- Dynamic configuration of Envoy network, adding of hosts, mapping of requests from clients to services

Envoy supports several load balancing algorithms:

Weighted round-robin. Selects each available upstream host in round-robin order, with higher-weighted hosts appearing more often in the rotation.

Weighted least request. The load balancer uses a different algorithm depending on whether the hosts have the same or different weights.

All weights equal. An algorithm samples a configurable number of random available hosts (two by default) and selects the one with the fewest active requests.

All weights not equal. When two or more hosts in the cluster have different load balancing weights, the load balancer shifts to a weighted round-robin schedule in which the weights are dynamically adjusted based on each host's request load at the time of selection.

Ring hash. The ring hash load balancer implements consistent hashing to upstream hosts: each request is routed by hashing some property of the request, such as a header value.

Maglev. The Maglev load balancer also implements consistent hashing to upstream hosts, using a fixed-size lookup table that aims for fast lookups and minimal disruption when the set of hosts changes. Like ring hash, it depends on protocol routing rules that specify a value to hash on.

Random. The random load balancer selects a random available host. It may offer better performance than round-robin when there is no Envoy health check policy in place.
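To make two of these strategies concrete, here is a minimal Python sketch of least-request selection and ring hashing. It is an illustration under stated assumptions, not Envoy's implementation: the function and class names are hypothetical, candidates are sampled without replacement for simplicity, and SHA-256 stands in for whatever hash function a real proxy would use.

```python
import bisect
import hashlib
import random


def pick_least_request(active_requests, choice_count=2, rng=random):
    """Sample a few hosts at random and keep the least loaded one.

    `active_requests` maps host name -> number of in-flight requests.
    Assumption: candidates are sampled without replacement.
    """
    hosts = list(active_requests)
    candidates = rng.sample(hosts, min(choice_count, len(hosts)))
    return min(candidates, key=lambda host: active_requests[host])


class HashRing:
    """Consistent-hash ring: every host owns several points on a circle."""

    def __init__(self, hosts, points_per_host=100):
        self._ring = sorted(
            (self._hash(f"{host}#{i}"), host)
            for host in hosts
            for i in range(points_per_host)
        )
        self._keys = [point for point, _ in self._ring]

    @staticmethod
    def _hash(value):
        # Use the first 8 bytes of SHA-256 as a 64-bit position on the ring.
        return int.from_bytes(hashlib.sha256(value.encode()).digest()[:8], "big")

    def pick(self, hash_key):
        """Return the host owning the first point clockwise from the key."""
        index = bisect.bisect(self._keys, self._hash(hash_key)) % len(self._ring)
        return self._ring[index][1]


if __name__ == "__main__":
    print(pick_least_request({"10.0.0.1": 7, "10.0.0.2": 2}))
    print(HashRing(["10.0.0.1", "10.0.0.2", "10.0.0.3"]).pick("session-1234"))
```

The useful property of the ring is stability: if one host is removed, only the keys that mapped to that host move, while every other key keeps its original host.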

Envoy Benefits and Features

With Istio and Envoy, users can enforce policies based on service identity. Envoy proxies deploy as sidecars next to services and logically augment those services with built-in features such as staged rollouts with percentage-based traffic splits. Envoy sidecars abstract the network from the core business logic.
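A percentage-based traffic split can be pictured as a weighted random choice made per request. The Python sketch below is only an illustration of that idea; the version names `reviews-v1` and `reviews-v2` and the function `choose_version` are hypothetical, and in practice the split is expressed in proxy configuration rather than application code.

```python
import random


def choose_version(weights, rng=random):
    """Pick a service version with probability proportional to its weight.

    `weights` maps a version name to its share of traffic.
    """
    versions = list(weights)
    shares = [weights[version] for version in versions]
    return rng.choices(versions, weights=shares, k=1)[0]


# Staged rollout: send roughly 10% of requests to the new version.
rollout = {"reviews-v1": 90, "reviews-v2": 10}
rng = random.Random(42)  # seeded so the simulation is repeatable
counts = {version: 0 for version in rollout}
for _ in range(10_000):
    counts[choose_version(rollout, rng)] += 1
print(counts)
```

Raising the second weight step by step (10, 25, 50, 100) is what makes the rollout staged: each step exposes more traffic to the new version while the old one remains available for rollback.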

Because Envoy is a self-contained proxy rather than a library, it provides advanced load balancing for distributed applications in one place, regardless of how each application is written. Envoy can also be used as a network API gateway.

Envoy traffic management capabilities include resiliency features such as timeouts, automatic retries, load balancing, circuit breakers, coarse- and fine-grained rate limiting (including rate limiting via an external rate-limiting service), outlier detection, and request shadowing. Envoy also emits robust metrics about traffic, which users can explore with tools such as Grafana.
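As a rough illustration of how automatic retries and circuit breaking interact, the Python sketch below models a breaker that caps the number of in-flight requests and a retry loop that respects it. This is a simplified in-process model, not Envoy's implementation; `CircuitBreaker`, `UpstreamError`, and `call_with_retries` are hypothetical names.

```python
class UpstreamError(Exception):
    """Raised when the upstream call fails or is rejected."""


class CircuitBreaker:
    """Rejects new requests once too many are already in flight."""

    def __init__(self, max_requests):
        self.max_requests = max_requests
        self.active = 0

    def acquire(self):
        if self.active >= self.max_requests:
            return False
        self.active += 1
        return True

    def release(self):
        self.active -= 1


def call_with_retries(send, breaker, max_retries=2):
    """Call `send()`, retrying on UpstreamError while honoring the breaker."""
    failures = 0
    while True:
        if not breaker.acquire():
            # Fail fast instead of queueing more work on a saturated upstream.
            raise UpstreamError("circuit breaker open: too many active requests")
        try:
            return send()
        except UpstreamError:
            failures += 1
            if failures > max_retries:
                raise
        finally:
            breaker.release()
```

The design point is that retries are bounded and the breaker fails fast: an overloaded upstream sees at most `max_requests` concurrent calls, so retries cannot amplify an outage.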

Envoy is self-contained and platform-agnostic, offering high performance with a small memory footprint. Thanks to this out-of-process architecture, Envoy proxies form a transparent mesh outside of the application.

The Envoy architecture offers various benefits over the traditional library approach. Because Envoy runs outside the application, it supports service-to-service communication for applications written in any language, including C++, Go, Java, Python, and PHP. This allows Envoy to control all network communication between users and platforms transparently, to be upgraded dynamically, and to be deployed more simply across a distributed infrastructure.

At its core, Envoy is an L3/L4 network proxy, meaning it handles communication at the network and transport layers. Its L3/L4 filters support tasks such as raw TCP proxying, UDP proxying, and HTTP proxying. Envoy also supports an HTTP L7 filter layer for tasks including buffering, rate limiting, routing/forwarding, and sniffing Amazon’s DynamoDB traffic.

Envoy supports HTTP/1.1, HTTP/2, and HTTP/3 for both upstream and downstream communication, and can translate between these protocols. Envoy also supports gRPC.

Envoy service discovery relies on a layered set of dynamic configuration APIs that deliver updates to backend clusters, the hosts within them, HTTP routing rules, listening sockets, and cryptographic material. For simpler deployments, static Envoy configuration files can replace some of these layers.

In HTTP mode, Envoy can redirect requests based on parameters such as authority, content type, path, runtime values, and other factors. This allows users to deploy Envoy as a front/edge API gateway.
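The matching logic can be sketched as an ordered list of routes in which the first match wins. The Python below is a hypothetical model rather than Envoy's configuration format: the `Route` fields, cluster names, and `example.com` hosts are all assumptions chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Route:
    cluster: str                      # destination for matching requests
    authority: Optional[str] = None   # None matches any host
    path_prefix: str = "/"
    headers: dict = field(default_factory=dict)

    def matches(self, request):
        if self.authority is not None and request.get("authority") != self.authority:
            return False
        if not request.get("path", "/").startswith(self.path_prefix):
            return False
        request_headers = request.get("headers", {})
        return all(request_headers.get(k) == v for k, v in self.headers.items())


def select_cluster(routes, request):
    """Return the cluster of the first matching route, or None."""
    for route in routes:
        if route.matches(request):
            return route.cluster
    return None


ROUTES = [
    Route(cluster="api_v2", authority="api.example.com", path_prefix="/v2/"),
    Route(cluster="api_canary", authority="api.example.com", headers={"x-canary": "true"}),
    Route(cluster="api_v1", authority="api.example.com"),
    Route(cluster="static_site"),  # catch-all
]

print(select_cluster(ROUTES, {"authority": "api.example.com", "path": "/v2/users"}))
```

Because order matters, the most specific routes go first and a catch-all goes last, which is the usual shape of an edge gateway's routing table.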

Envoy health checking lets users automatically perform active checks of the services in a cluster. Envoy combines health check data with service discovery information to determine which hosts are healthy load balancing targets.
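One way to picture active health checking is a per-host tracker that changes state only after several consecutive contradicting results, combined with the list of hosts that service discovery reports. The Python sketch below is a simplified model under that assumption; `HealthTracker`, `load_balancing_targets`, and the threshold values are illustrative, not Envoy defaults.

```python
class HealthTracker:
    """Flips a host's state only after enough consecutive contrary checks."""

    def __init__(self, unhealthy_threshold=3, healthy_threshold=2):
        self.unhealthy_threshold = unhealthy_threshold
        self.healthy_threshold = healthy_threshold
        self.healthy = True
        self._streak = 0  # consecutive results that contradict the current state

    def record(self, check_passed):
        if check_passed == self.healthy:
            self._streak = 0
            return
        self._streak += 1
        limit = self.healthy_threshold if check_passed else self.unhealthy_threshold
        if self._streak >= limit:
            self.healthy = check_passed
            self._streak = 0


def load_balancing_targets(discovered_hosts, trackers):
    """Hosts that are both reported by discovery and currently healthy."""
    return [
        host for host in discovered_hosts
        if host in trackers and trackers[host].healthy
    ]
```

Requiring a streak before changing state keeps a single dropped probe from removing a host, and a single lucky probe from restoring a failing one.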

Envoy Use Cases

An Envoy sidecar in Kubernetes has a variety of uses, but here are three common ones:

Handle service-to-service communications with an Istio Envoy sidecar. An Envoy sidecar proxy runs alongside the parent application, sharing its lifecycle, and enables communication among services as an L3/L4 proxy. This allows users to extend applications across various technology stacks.

Use Envoy proxies to route traffic as an API gateway. The Envoy proxy accepts inbound traffic as a front proxy sitting between the application and the client request, collating request information and directing it as needed inside a service mesh. The Envoy proxy offers authentication, load balancing, traffic routing, and monitoring at the edge. Envoy monitoring system capabilities are intended to offer granular visibility and greater actionable insight.

Leverage Envoy to achieve scale. Achieve faster response times by routing requests to a read-only cluster through a graded configuration on Envoy.

How to Use Envoy Proxy in Kubernetes

Kubernetes, Envoy, and Istio are related open-source technologies that manage distributed systems.

Kubernetes, or K8s, is a container orchestration platform. It manages clusters of instances, deploys containerized applications, and provides capabilities such as load balancing, scaling, and persistent storage. In Kubernetes, service pods hold Envoy containers that operate as sidecars. These Envoy sidecars run in front of every service instance as reverse proxies and provide non-business-logic services such as encryption and security.

Envoy is the service/edge proxy described within this glossary.

Istio is a service mesh, or fabric, that is layered on top of a platform like Kubernetes and uses Envoy as its data plane. It offers a uniform way to manage, connect, secure, coordinate, and observe services and their sidecars.

Working together, these technologies allow for more efficient and secure management and orchestration of distributed applications. Highly sophisticated, enterprise-scale businesses are now implementing their IT architecture entirely as a service mesh that can independently and rapidly heal failures, reroute traffic, and shift loads across any region or cloud service provider without human intervention.

The official Envoy documentation is available on the Envoy project website.

Envoy Proxy Alternatives

There are a few alternatives to Envoy, including NGINX, Avi Networks, HAProxy, and Rust Proxy. Read on to learn why Avi is the best choice as an alternative to the entire service mesh.

Does Avi Offer an Envoy Proxy Alternative?

Yes. As part of a distributed microservices architecture, cloud-native applications often run in containers. Kubernetes deployments are the de facto standard for orchestrating these containerized applications.

Exponential growth creates sprawl, an unintended outcome of microservices architecture. This spiraling growth presents numerous challenges inside a Kubernetes cluster, including encryption, routing between multiple versions and services, authentication and authorization, and load balancing.

Building on Kubernetes allows the service mesh to abstract away how inter-process and service-to-service communications are handled, much as containers abstract away the operating system from the application.

Avi integrates with the Tanzu Service Mesh (TSM), which is built on top of Istio with value-added services. By expanding on the TSM solution, Avi offers north-south connectivity, security, and observability inside and across Kubernetes clusters, as well as across multiple sites and clouds. In addition, enterprises are able to connect modern Kubernetes applications to traditional application components in VM environments and clouds, secure transactions from end users to the application, and seamlessly bridge between multiple environments. Avi also offers WAF protection that is far more comprehensive than Envoy WAF capabilities.

For more on the actual implementation of load balancing, security applications, and web application firewalls, check out our Application Delivery How-To Videos.