Traffic Shaping Definition

Traffic shaping is a computer network bandwidth management technique that delays some or all datagrams so that they conform to a traffic profile. Delaying some types of packets improves latency, optimizes performance, or increases usable bandwidth for other types. Traffic shaping, also called packet shaping, uses switches, rules, and policies to prioritize traffic and bandwidth and ensure a higher quality of service (QoS) for business-related network traffic. Traffic shaping and policing are related but distinct practices, which are detailed further below.

Application-based traffic shaping is among the most common types of traffic shaping. It applies specific traffic shaping policies based on the application a data packet belongs to. For example, it is typical to allocate maximum bandwidth to a latency-sensitive, business-critical application such as Voice over IP (VoIP), while throttling peer-to-peer file-sharing traffic such as BitTorrent.

Traffic Shaping FAQs

What is Traffic Shaping?

Traffic shaping manages bandwidth by limiting the flow of certain types of network packets deemed less important by the organization while prioritizing more important traffic streams. Traffic shaping ensures sufficient network bandwidth for time-sensitive, critical applications using policy rules, data classification, queuing, QoS, and other techniques.

Bandwidth throttling is the regulation of traffic flow into a network. Rate limiting is the control of the outward traffic flow. Typically, traffic shaping measures traffic against a committed information rate (CIR) expressed in bits per second (bps). It then buffers any non-essential traffic that exceeds that rate.

By controlling the amounts of data that flow into and out of the network, the organization can leverage traffic shaping to enhance network performance. Network policies categorize, direct, and queue traffic. This works like classifying priority packages for delivery without delay; slowing packet transmission for less critical applications allows proper bandwidth management and function of mission-critical applications such as those involving VoIP.

Another apt analogy is the security for a high-end event. The security team has a list of A-list guests that get past the red rope without waiting, every time. Everyone else has to queue up.

The traffic shaping appliance acts as the security team, and it treats only packets related to mission-critical applications as A-list guests. As long as the event has plenty of room, anyone can come in. If things get crowded, ordinary packets have to queue up and wait, and some may be dropped, so that important applications do not experience latency.

Latency can rise substantially if a resource becomes over-utilized to the point of congestion. Traffic shaping can limit this latency via bandwidth throttling, rate limiting, or more complex rules or algorithms. There are many reasons and means to achieve this control; however, delaying packets is always central to traffic shaping.

Traffic shaping may be applied by a network element as part of a router or by the traffic source, such as a network card or computer. However, the most commonly seen type of traffic shaping is control of traffic entering the network, applied at the network edges.

At times, traffic sources apply traffic shaping to ensure the traffic they send complies with a contract that a policer in the network may enforce. Teletraffic engineering is another common use for traffic shaping, which is among the Internet Traffic Management Practices (ITMPs) used in domestic ISPs’ networks. Data centers also use traffic shaping to maintain service level agreements for the various tenants and applications they host on the same physical network.

Why is Traffic Shaping Needed?

Network bandwidth is finite by definition, so bandwidth management and traffic shaping are essential to improved delivery of time-sensitive data and performance of higher priority applications.

Traffic shaping is a flexible yet powerful way to defend against bandwidth-abusing distributed denial-of-service (DDoS) attacks while ensuring quality of service. It regulates abusive users, guards applications and networks against traffic spikes, and stops network attacks from overwhelming network resources.

Transitioning from a high speed interface to a low speed interface can cause egress blocking: tail drop or packet loss in the outgoing queue. Prevent this issue by configuring egress traffic shaping to ensure all packets are eventually sent, at least until the buffer fills.

There are four basic factors that affect network quality: delay, bandwidth, packet loss, and jitter. These factors are typically not problematic in a local area network or LAN, where bandwidth is not at issue and all devices are in close proximity.

These networks merely require wireless connections or cables with sufficient capacity to handle network traffic. The price of devising this type of one-off network is generally manageable.

Wide area networks or WANs spread across distances and public networks such as the internet pose a challenge, particularly with respect to these four network quality factors. This is because the cost of infrastructure to connect to either WANs or public networks is both high and recurring.

It is important to retain bandwidth for voice, so use some traffic shaping device in any location with VoIP phones. This includes your main office, data center, and any home offices or other satellite locations.

The weakness in the standard application-based approach is that many application protocols attempt to circumvent application-based traffic shaping using encryption. Route-based traffic shaping policies can prevent applications from bypassing them by applying packet regulation policies based on the intended destination and source of the packet.

Traffic shaping is critical for cybersecurity and a key element of any network firewall. It ensures higher quality of service for data and business applications, and because network resources are always limited, bandwidth prioritization is a requirement in any case.

Traffic Shaping and Quality of Service

Traffic shaping does not come into play when traffic arrives at or below the configured rate, because a manageable traffic rate means the system is already running smoothly. However, traffic that arrives faster than the configured rate is delayed in a buffer until it can be sent out without exceeding that rate.

This has to do with achieving quality of service. Quality of service or QoS thinking acknowledges that the four factors that affect network quality impact different applications in different ways, based in large part on user requirements. QoS strategies are therefore flexible in assessing a “best” level of service for various kinds of applications.

VoIP applications are a good example: voice traffic packets do not require much bandwidth, but voice does not tolerate packet loss or delay. This is why losing even a second or two of a meeting or conversation is so annoying, especially when it happens repeatedly in a business setting.

In contrast, bandwidth is the most salient factor when downloading a large file over a TCP connection, because TCP can retransmit packets to make up for packet loss.

For proper QoS network implementation, there are several important considerations:

Traffic Classification

Analyze the types of applications and traffic running on the network to more appropriately apply traffic shaping rules to different traffic classes. Identify mission-critical traffic such as traffic to and from an application server, and define how to match that traffic.

Marking

Classified traffic should be marked with tags to make it simple for all devices in the traffic path to apply required policies. There are common tags that device manufacturers rely on to build default profiles for different traffic classes, such as MPLS Experimental bits, Differentiated Services Code Points (DSCP) bits, and IP Precedence (IPP) bits.
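As a rough illustration of marking at the traffic source, the sketch below sets a DSCP value on a UDP socket using the standard socket API. DSCP occupies the upper six bits of the IP TOS byte, so the value is shifted left by two bits. The helper names `dscp_to_tos` and `open_marked_udp_socket` are hypothetical, the choice of Expedited Forwarding (DSCP 46) for voice is a common convention rather than something this article prescribes, and whether the operating system and the network honor the marking is an assumption.

```python
import socket

DSCP_EF = 46  # Expedited Forwarding, a value commonly used for voice


def dscp_to_tos(dscp: int) -> int:
    """Return the TOS byte that carries the given 6-bit DSCP value."""
    if not 0 <= dscp <= 63:
        raise ValueError("DSCP must fit in 6 bits (0-63)")
    return dscp << 2  # the lower two bits (ECN) are left at zero


def open_marked_udp_socket(dscp: int = DSCP_EF) -> socket.socket:
    """Create a UDP socket whose outgoing packets request the given DSCP.

    Assumes a platform that exposes socket.IP_TOS (for example Linux).
    """
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_TOS, dscp_to_tos(dscp))
    return sock
```

Devices along the path can then match on the marking instead of re-classifying every packet.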

QoS Policies

The goal of traffic classification and marking is the consistent application of QoS policies to packets. Common QoS policies related to traffic shaping include congestion management and queuing, congestion avoidance (for example, weighted random early detection), and traffic policing.

Traffic Shaping vs Policing

Traffic shaping and policing are often confused, but they are important to distinguish.

Traffic policing acts only on bursts, remarking or dropping excess traffic once the traffic rate reaches the maximum rate configured in the system. In other words, it switches on like a thermostat when the traffic rate exceeds the limit. A graph of policed traffic shows a jagged sawtooth whose peaks are cut flat at the limit, where the policer remarks or drops the excess.

This is why traffic policing is less suitable for applications like VoIP. Because excess data packets are simply dropped when the traffic reaches the maximum rate, the uneven data flow often means a poor user experience.

Traffic shaping works constantly over time toward a smoothed goal for traffic output. In contrast to traffic policing, traffic shaping retains excess packets in a buffer or queue rather than remarking them. It then transmits the excess packets over time as traffic permits, producing that smooth curve.

Traffic shaping implies and demands a sufficient buffer or queue for the delayed packets; traffic policing has no such requirement. Traffic shaping thus demands memory to enable.
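The difference can be made concrete with a small simulation. The sketch below is a simplified discrete-time model, not a description of any particular product: `arrivals` is the number of packets arriving in each tick, `rate` is the number of packets allowed out per tick, and `buffer_size` is the shaper's queue capacity. All names and numbers are illustrative assumptions.

```python
def police(arrivals, rate):
    """Policer: anything above `rate` in a tick is dropped immediately."""
    sent = [min(a, rate) for a in arrivals]
    dropped = [a - s for a, s in zip(arrivals, sent)]
    return sent, dropped


def shape(arrivals, rate, buffer_size):
    """Shaper: excess packets wait in a queue and are sent in later ticks."""
    sent, dropped, backlog = [], [], 0
    for a in arrivals:
        backlog += a                      # new packets join the queue
        out = min(backlog, rate)          # send at most `rate` this tick
        backlog -= out
        overflow = max(0, backlog - buffer_size)
        backlog -= overflow               # only a full buffer causes loss
        sent.append(out)
        dropped.append(overflow)
    return sent, dropped


bursty = [5, 0, 0, 4, 0, 0]
print(police(bursty, rate=2))                 # ([2, 0, 0, 2, 0, 0], [3, 0, 0, 2, 0, 0])
print(shape(bursty, rate=2, buffer_size=10))  # ([2, 2, 1, 2, 2, 0], [0, 0, 0, 0, 0, 0])
```

In this model the policer loses five of nine packets, while the shaper delivers all nine at the cost of delay and of the memory needed to hold the backlog.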

Another consideration is that queueing packets up to be sent later—traffic shaping—can only ever apply to outbound traffic. True inbound traffic shaping does not exist, because inbound traffic is the realm of traffic policing. Traffic shaping also demands a scheduling function so delayed packets can be transmitted later from different queues.

Because traffic shaping and traffic policing are two entirely different techniques, the user should determine which will work best in each situation.

Traffic Shaping Techniques

The right traffic shaping technique depends on the types of traffic on the network. Categorizing that traffic is a critical first step, because it determines which traffic shaping techniques apply.

Default traffic shaping techniques often intercept all packets when they arrive at the packet shaper and store them in a buffer for processing. High priority packets transmit immediately, while less important packets remain buffered. Sometimes, if the buffer is full, traffic shaping merely drops arriving packets.

However, there are two more specific traffic shaping techniques in frequent use as well.

Application-based Traffic Shaping

The application-based traffic shaping technique prioritizes the data packets of some applications over others, for example the voice data packets of a VoIP call over the basic data packets of a browser. This kind of traffic shaping technique would hold the browser packets until all VoIP packets were sent, ensuring less latency for the critical app.
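Holding one application's packets until another's have been sent is a form of strict priority scheduling. The following is a minimal sketch of that idea using a heap; the class name `PriorityScheduler` and the numeric priority levels are assumptions made for illustration. Real shapers usually bound how long low-priority traffic can be held, which this sketch does not do.

```python
import heapq
from itertools import count


class PriorityScheduler:
    """Strict priority queue: lower number means higher priority."""

    def __init__(self):
        self._heap = []
        self._seq = count()  # tie-breaker keeps FIFO order within a class

    def enqueue(self, packet, priority):
        heapq.heappush(self._heap, (priority, next(self._seq), packet))

    def dequeue(self):
        """Return the next packet to transmit, or None if the queue is empty."""
        if not self._heap:
            return None
        return heapq.heappop(self._heap)[2]


VOIP, BROWSER = 0, 1  # hypothetical priority levels

scheduler = PriorityScheduler()
scheduler.enqueue("web-1", BROWSER)
scheduler.enqueue("voip-1", VOIP)
scheduler.enqueue("web-2", BROWSER)
scheduler.enqueue("voip-2", VOIP)

order = [scheduler.dequeue() for _ in range(4)]
print(order)  # ['voip-1', 'voip-2', 'web-1', 'web-2']
```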

However, application-based traffic shaping techniques can also reduce bandwidth usability. They are also vulnerable to being circumvented by encryption.

Route-based Traffic Shaping

The route-based traffic shaping technique seeks to prevent applications from circumventing application-based traffic shaping policies. It does this by focusing instead on the actual source and intended destination of the packets so disguising them with encryption is less useful.

Benefits of Traffic Shaping

Using a network traffic shaping device or software has many benefits:

- Accelerates network traffic

- Costs less than the alternative of adding bandwidth

- Avoids network congestion by detecting abnormal bandwidth consumption

- Blocks attacker IPs

- Helps meet the service-level agreements (SLAs) of contracts

- Enhances application performance

- Ensures high quality of transmission for critical applications

- Supports tiered service-level offerings for end users

- Follows Internet Traffic Management best practices, which recommend traffic shaping

- Limits unwanted traffic

- Maximizes the bandwidth and resources allocated to higher priority applications

- Forms a necessary part of a network firewall

- Keeps non-essential traffic from overloading network interfaces, which would cause jitter, delays, and packet loss for real-time voice streams

Traffic shaping helps to ensure critical data and business applications run efficiently with the bandwidth they require. Ultimately, traffic shaping helps ensure better quality of service (QoS), deliver higher performance, maximize usable bandwidth, reduce latency, and increase return on investment (ROI).

How to Implement Traffic Shaping

All traffic shaping solutions implement traffic shaping techniques a bit differently, but there are some basic factors to be aware of:

- Rate of transmission, how fast the network is actually capable of sending bits per second

- ISP bandwidth, how many bits per second the system can handle

- Committed information rate (CIR in bits per second), the goal rate of transmission for traffic shaping

- Burst size (in bits), the number of bits that must be sent in each time interval to maintain the CIR

- Time interval (in seconds), how often a burst is transmitted

Committed information rate or CIR is the same as burst size divided by time interval. To calculate the CIR in bits per second, divide the burst size in bits by the time interval in seconds.
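The relationship between CIR, burst size, and time interval can be checked with a few lines of arithmetic. Burst size is a quantity of bits, so dividing it by a time interval in seconds yields bits per second. The function names and the sample values (an 8,000-bit burst every 125 ms) are illustrative assumptions.

```python
def committed_information_rate(burst_bits: float, interval_s: float) -> float:
    """CIR in bits per second = burst size in bits / time interval in seconds."""
    if interval_s <= 0:
        raise ValueError("time interval must be positive")
    return burst_bits / interval_s


def burst_size(cir_bps: float, interval_s: float) -> float:
    """Inverse relation: bits to send per interval to sustain the CIR."""
    return cir_bps * interval_s


cir = committed_information_rate(burst_bits=8_000, interval_s=0.125)
print(cir)                        # 64000.0
print(burst_size(cir, 0.125))     # 8000.0
```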

A traffic shaper delays metered traffic to ensure that each packet complies with the applicable traffic contract. It may implement metering with a number of traffic shaping rules or algorithms, for example the token bucket or leaky bucket. There is one FIFO buffer for each class of metered packets or cells shaped separately, and the excess packets are retained there until the system can transmit them and remain in compliance. Transmission can happen immediately (when traffic is already under the limit), after a delay (until the scheduled release time) or never (should packet loss occur during buffer overflow conditions).
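A token bucket can be sketched as follows. Tokens accumulate at the CIR up to the burst size, and a packet may leave only when the bucket holds enough tokens to cover its size; otherwise it is delayed until they accumulate. This is a simplified single-class model with explicit timestamps so that it is deterministic. The class name `TokenBucketShaper`, the method `release_time`, and the sample numbers are assumptions for illustration, and the sketch assumes packets are offered in arrival order.

```python
class TokenBucketShaper:
    """Single-class token bucket that computes when each packet may be sent."""

    def __init__(self, cir_bps: float, burst_bits: float):
        self.cir_bps = cir_bps
        self.burst_bits = burst_bits
        self.tokens = burst_bits  # start with a full bucket
        self.clock = 0.0          # time up to which tokens have been accounted

    def release_time(self, arrival_s: float, size_bits: float) -> float:
        """Return the earliest time (seconds) the packet can be transmitted."""
        if size_bits > self.burst_bits:
            raise ValueError("packet exceeds burst size and can never conform")
        # A packet cannot leave before the packets queued ahead of it (FIFO).
        now = max(arrival_s, self.clock)
        # Refill for the time that has passed, capped at the burst size.
        refill = (now - self.clock) * self.cir_bps
        self.tokens = min(self.burst_bits, self.tokens + refill)
        self.clock = now
        if self.tokens < size_bits:
            # Not enough tokens: delay until the shortfall has accumulated.
            self.clock += (size_bits - self.tokens) / self.cir_bps
            self.tokens = size_bits
        self.tokens -= size_bits
        return self.clock


shaper = TokenBucketShaper(cir_bps=8_000, burst_bits=8_000)
# Four 4,000-bit packets arrive together at t = 0.
times = [shaper.release_time(0.0, 4_000) for _ in range(4)]
print(times)  # [0.0, 0.0, 0.5, 1.0]
```

The first two packets use up the initial burst and leave immediately. The remaining packets are released every half second, which is 4,000 bits per 0.5 s, or exactly the configured 8,000 bps.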

Buffer Overflow Conditions

All buffers in traffic shapers are finite, so implementations must include strategies for full buffers. Tail drop is a common and simple approach which involves simply dropping traffic upon arrival at a full buffer. This results not only in traffic shaping, but also in traffic policing. A more sophisticated approach might apply a random early detection algorithm or other dropping algorithm.
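Tail drop is simple enough to show in a few lines. The sketch below is a bounded FIFO that rejects arrivals once it is full; the class name `TailDropQueue` and its methods are hypothetical, and a random early detection variant would instead begin dropping probabilistically before the queue fills.

```python
from collections import deque


class TailDropQueue:
    """Bounded FIFO buffer that drops new arrivals when it is full."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self._items = deque()
        self.dropped = 0  # count of packets lost to tail drop

    def offer(self, packet) -> bool:
        """Queue the packet, or drop it and return False if the buffer is full."""
        if len(self._items) >= self.capacity:
            self.dropped += 1
            return False
        self._items.append(packet)
        return True

    def poll(self):
        """Remove and return the oldest packet, or None if the buffer is empty."""
        return self._items.popleft() if self._items else None


queue = TailDropQueue(capacity=2)
results = [queue.offer(p) for p in ("a", "b", "c")]
print(results, queue.dropped)  # [True, True, False] 1
```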

Traffic Classification

More basic traffic shaping implementations uniformly shape all traffic. More sophisticated traffic shaping techniques classify traffic first based on protocol or port number, for example. Then, a particular desired effect can be achieved by shaping various traffic classes separately.
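A classifier based on protocol and port number might look like the sketch below. The `Packet` type, the rule table, the class names, and the port ranges are all illustrative assumptions; a real deployment would use the ports its own applications actually use.

```python
from typing import NamedTuple


class Packet(NamedTuple):
    protocol: str  # "tcp" or "udp"
    dst_port: int


# Hypothetical rules: (traffic class, protocol, matching destination ports).
RULES = [
    ("voice", "udp", range(16384, 32768)),  # assumed RTP media port range
    ("web", "tcp", (80, 443)),
]


def classify(packet: Packet, rules=RULES, default: str = "best-effort") -> str:
    """Return the first traffic class whose protocol and port match."""
    for traffic_class, protocol, ports in rules:
        if packet.protocol == protocol and packet.dst_port in ports:
            return traffic_class
    return default


print(classify(Packet("udp", 20000)))  # voice
print(classify(Packet("tcp", 443)))    # web
print(classify(Packet("tcp", 6881)))   # best-effort
```

Each resulting class can then be given its own queue and its own shaping rate.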

Self-limiting Sources

Self-limiting sources generate traffic which never exceeds an upper limit. In other words, self-limiting sources shape the traffic they generate to some degree because they cannot transmit faster than their encoded rate allows.

Traffic Shaping Features

The best traffic shaping software packages, tools, and other solutions share certain features:

Logical Bandwidth Separation Capability

It is critical to be able to logically analyze and separate traffic into categories and divide the amount of bandwidth available between them. This is important to enable separate flows of traffic for distinct subnets or user groups, or for ingress traffic shaping, which separates many locations with different bandwidth demands behind a single point of ingress.

Intuitive Policy Management

Traffic shaping policies should be predictable and intuitive, so that administrators can be confident when they apply them they will see the desired outcomes.

Flexible Bandwidth Configurations

Administrators need the flexibility to create the combination and number of bandwidth rules that meet organizational needs.

Dynamically Adjustable

To better accommodate work from home, remote workforce, and bring your own device (BYOD) trends, bandwidth must adapt dynamically as new devices and hosts join the network. Some organizations may need to devise rules for fair bandwidth sharing, cap the number of devices, or cap bandwidth for each individual device.
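One possible rule for fair bandwidth sharing is max-min fairness, which this article does not specifically name; it is used here only as an example. Devices that ask for less than an equal share receive what they ask for, and the unused remainder is split among the others. The function name, device names, and figures below are assumptions.

```python
def max_min_fair_share(capacity: float, demands: dict) -> dict:
    """Allocate `capacity` across devices using max-min fairness.

    `demands` maps a device name to the bandwidth it requests.
    """
    allocation = {}
    remaining = float(capacity)
    # Serve the smallest demands first so leftovers flow to larger ones.
    pending = sorted(demands.items(), key=lambda item: item[1])
    while pending:
        equal_share = remaining / len(pending)
        name, demand = pending.pop(0)
        granted = min(demand, equal_share)  # never more than was requested
        allocation[name] = granted
        remaining -= granted
    return allocation


# A 100 Mbps link shared by three devices (demands in Mbps).
shares = max_min_fair_share(100, {"phone": 10, "laptop": 40, "backup": 80})
print(shares)  # {'phone': 10, 'laptop': 40, 'backup': 50.0}
```

A per-device cap can be modeled in the same way by lowering each demand to the cap before allocating.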

Does Avi Offer Traffic Shaping?

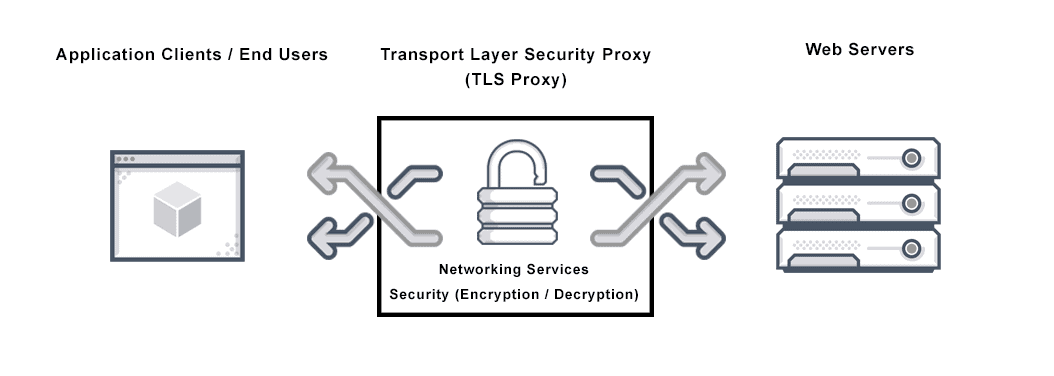

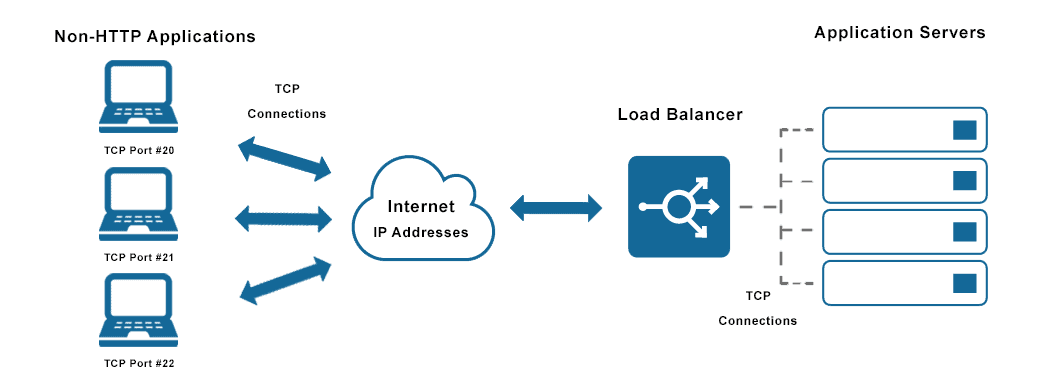

Yes. Avi’s flagship product Avi Platform delivers application services including distributed load balancing, web application firewall, global server load balancing (GSLB), network and application performance management across a multi-cloud environment. As such it offers significant traffic shaping, throttling and rate-limiting options. These can be applied at different levels – from a virtual service to pool/server level or at the client level.

For example, Avi can limit the number of TCP connections to a specific virtual service. This ensures that the service cannot be compromised and used to spawn excessive connections, and that a poorly coded service does not create unanticipated behaviors. Equally, Avi can limit the maximum bandwidth a specific service is allowed, which ensures that lower priority services with bursty bandwidth needs, such as a backup, do not compromise latency-sensitive services such as VoIP calls.

Limits can also be set on the number of connections that can be made to an individual server, and even the maximum number of connections to a server pool. This can be used to prevent denial of service attacks that subject servers to an overload of requests.

Traffic shaping can be applied at the client level, meaning that the maximum number of connections from a single client can be capped to prevent that client from slowing performance for others on the network. Limits can be set for all clients connecting to a specific URL, which can also help prevent DDoS attacks.

The primary role of an application delivery controller (ADC) is load balancing, but it can also offer traffic shaping, application acceleration, caching, compression, content switching, multiplexing and application security.

For more on the actual implementation of load balancing, security applications and web application firewalls check out our Application Delivery How-To Videos.