Istio Definition

Istio is an open source service mesh solution organizations use to run microservices-based, distributed applications anywhere. Istio aggregates telemetry data, enforces access policies, and manages traffic flows—without changing application code.

Istio eases deployment complexity by layering transparently onto distributed applications, which helps speed modernization. The Istio platform enables organizations to readily connect, secure, control, and monitor microservices architectures.

Istio FAQs

What is Istio?

Istio is an open source service mesh that forms a transparent layer on top of distributed applications. It is also a complete platform, including APIs, that can integrate with most policy, telemetry, and logging systems.

A service mesh is the network of microservices that make up an application, together with the interactions between them. As a service mesh grows in size and complexity, it can become harder to understand and manage.

Requirements for service meshes can include discovery, failure recovery, load balancing, metrics, and monitoring. More complex operational requirements might include A/B testing, access control, canary rollouts, end-to-end authentication, and rate limiting. Istio provides these functions as a transparent, language-independent service networking layer.

What is Istio in Kubernetes, and is it different from any other Istio deployment? The key to understanding how Istio works with Kubernetes, and how the Istio architecture is built, is understanding how Envoy, Istio, and Kubernetes function together. It is not a question of Istio vs. Kubernetes: the two often work in tandem to keep a containerized, microservices-based environment running smoothly.

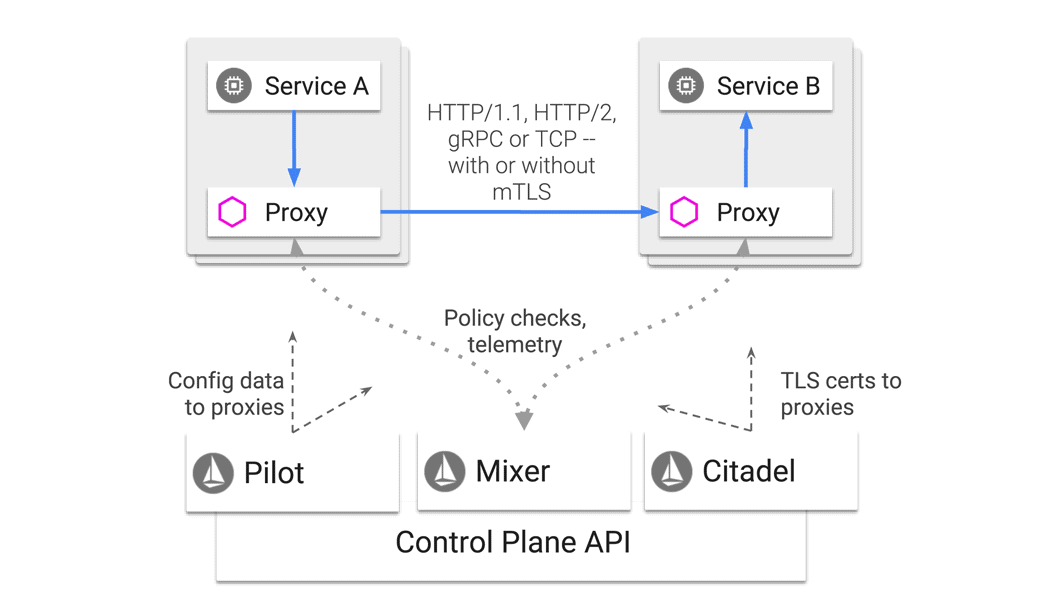

Like other service mesh solutions, Istio is made up of a data plane and a control plane. An extended version of the Envoy proxy mediates all inbound and outbound traffic for the services in the mesh and serves as Istio's data plane.

In contrast, Kubernetes is an open source platform that automates and orchestrates many of the manual processes involved in scaling and deploying containerized applications. Although Istio is platform agnostic, developers often use Istio and Kubernetes together.

In this way, Kubernetes, Envoy, and Istio are all related tools organizations use to manage distributed systems.

Istio Features

Here are a few of the Istio advantages and popular features most users cite:

Traffic management. Straightforward rule configuration and traffic routing simplify the configuration of service-level properties such as timeouts, retries, and circuit breakers. Istio also makes critical tasks such as canary rollouts, A/B testing, and staged rollouts easier. Improved visibility into traffic, combined with out-of-the-box failure recovery and fault tolerance features, makes networks more reliable and robust under varied conditions.
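To make the circuit-breaker idea concrete, here is a minimal Python sketch of a consecutive-failure breaker. It is an illustration of the general technique, not Envoy's or Istio's implementation (Istio configures this behavior declaratively rather than in application code), and the class name and its `max_failures` and `reset_after` parameters are hypothetical. After enough consecutive failures the breaker "opens" and rejects calls immediately; once a cool-down period passes it lets a trial request through.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(Exception):
    """Raised when a call is rejected because the circuit is open."""


class CircuitBreaker:
    """Hypothetical consecutive-failure circuit breaker (illustration only)."""

    def __init__(self, max_failures: int = 3, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None:
            # While open, reject calls until the cool-down period has passed.
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("circuit open; request rejected")
            # Cool-down over: allow one trial request ("half-open").
            self.opened_at = None
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()  # trip (or re-trip) the breaker
            raise
        self.failures = 0  # any success closes the circuit again
        return result


# Usage with a fake clock so the example runs instantly.
now = [0.0]
breaker = CircuitBreaker(max_failures=2, reset_after=10.0, clock=lambda: now[0])


def failing() -> str:
    raise ConnectionError("upstream unavailable")


for _ in range(2):
    try:
        breaker.call(failing)
    except ConnectionError:
        pass

try:
    breaker.call(lambda: "ok")  # rejected without reaching the upstream
except CircuitOpenError:
    print("rejected while open")

now[0] = 11.0                      # advance past reset_after
print(breaker.call(lambda: "ok"))  # trial request succeeds and closes the circuit
```

Injecting the clock keeps the sketch deterministic; a real proxy would use wall-clock time and typically track failures per upstream host.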

Security. Istio's security capabilities free developers to focus on security at the application level, while policies are enforced consistently by default across diverse runtimes and protocols with few or no changes to the application. Istio provides the secure, foundational communication channel and manages authentication, authorization, and encryption of service-to-service communication at scale.

Observability. Istio offers monitoring, tracing, and logging capabilities, including customizable dashboards that show how a service's performance affects other processes. In early Istio releases, a component called Mixer collected telemetry data from the Envoy proxies and provided policy controls, giving operators granular control over the mesh, the infrastructure backends, and their interactions. Mixer was deprecated in Istio 1.5 and has since been removed; in current releases the Envoy proxies generate telemetry themselves.

Istio Architecture. The Istio architecture can be described in terms of three components:

- The open source Envoy proxy, which manages connections and security. Envoy proxies are typically deployed as sidecars alongside the microservices applications running in Kubernetes clusters.

- The Istio data plane, which consists of all the Envoy proxies running beside cluster applications.

- The Istio control plane (istiod in current releases), which manages and configures the Envoy proxies in the data plane.

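Istio Load Balancer. Istio has historically used a round-robin policy by default; recent releases document least-request as the default, so check the documentation for the version in use.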
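Round-robin simply hands requests to each healthy endpoint in turn. The Python sketch below shows only that rotation; it is not Envoy's load balancer, and the endpoint addresses are made up. In Istio itself the policy is selected declaratively in a DestinationRule (`trafficPolicy.loadBalancer.simple`) rather than written in code.

```python
from itertools import cycle
from typing import Iterator, List


def round_robin(endpoints: List[str]) -> Iterator[str]:
    """Yield endpoints in a fixed rotation (illustrative, not Envoy's code)."""
    if not endpoints:
        raise ValueError("at least one endpoint is required")
    return cycle(endpoints)


# Hypothetical pod addresses behind one service.
picker = round_robin(["10.0.0.1:8080", "10.0.0.2:8080", "10.0.0.3:8080"])
picks = [next(picker) for _ in range(5)]
print(picks)
# ['10.0.0.1:8080', '10.0.0.2:8080', '10.0.0.3:8080', '10.0.0.1:8080', '10.0.0.2:8080']
```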

Istio Fault Injection. To test resiliency and traffic management, users can inject faults, such as delays and aborted requests, into traffic and observe how their applications respond.
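Istio declares faults in a VirtualService's `fault` field, which supports a `delay` and an `abort`, each applied to a percentage of requests. The Python sketch below mimics that behavior around an ordinary handler function so the effect is easy to see; `FaultSpec` and `with_faults` are hypothetical names, and this is not how the Envoy proxy implements fault injection.

```python
import random
import time
from dataclasses import dataclass
from typing import Callable, Tuple

Response = Tuple[int, str]


@dataclass
class FaultSpec:
    """Hypothetical fault settings, loosely modeled on Istio's delay/abort faults."""
    delay_seconds: float = 0.0
    delay_percent: float = 0.0   # share of requests to delay, 0-100
    abort_status: int = 503
    abort_percent: float = 0.0   # share of requests to fail, 0-100


def with_faults(handler: Callable[[], Response], spec: FaultSpec,
                rng: random.Random,
                sleep: Callable[[float], None] = time.sleep) -> Response:
    """Call handler, possibly delaying the request or replacing it with an error."""
    if rng.random() * 100 < spec.delay_percent:
        sleep(spec.delay_seconds)  # simulate a slow upstream
    if rng.random() * 100 < spec.abort_percent:
        return spec.abort_status, "fault injected"  # handler is never called
    return handler()


# Abort roughly half of 1,000 requests; sleep is stubbed out so this runs instantly.
rng = random.Random(42)
spec = FaultSpec(abort_status=503, abort_percent=50.0)
results = [with_faults(lambda: (200, "ok"), spec, rng, sleep=lambda s: None)[0]
           for _ in range(1000)]
print(results.count(503))  # roughly half of the requests
```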

Istio Autoscaling. Istio components and meshed workloads can be autoscaled using standard Kubernetes (K8s) autoscaling rules and policies.

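Istio Service Entry. A service entry adds an entry to Istio's internal service registry, typically for a service outside the mesh, and describes its properties such as hosts (DNS names), ports, protocols, and virtual IP addresses (VIPs).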

Envoy Proxy. To extend its performance and capabilities, Istio relies on the Envoy proxy and many of its built-in features, including TLS termination, HTTP/2 and gRPC proxying, staged rollouts, rich metrics, and more. The sidecar proxy model also lets you add Istio capabilities to an existing deployment without rearchitecting or rewriting code. Rather than a separate Istio-specific health check, Envoy's health checking lets users automatically run active checks against all cluster services based on discovery and health check data. Because every request passes through the proxy, the sidecar adds some latency to each call.

Pilot. Pilot converts high-level routing rules that control traffic behavior into Envoy-specific configuration and propagates it to the sidecars at runtime. It provides service discovery for the Envoy sidecars, resiliency features (retries, timeouts, circuit breakers), and traffic management for routing (canary rollouts, A/B tests). In current releases, Pilot's functionality is part of the consolidated istiod control plane.
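Retries are one of the resiliency features Pilot pushes to the sidecars. In Istio they are configured on a VirtualService route (`retries` with `attempts`, `perTryTimeout`, and `retryOn`), where `attempts` counts retries after the first try. The Python sketch below illustrates only the retry loop with exponential backoff; `call_with_retries` and its `backoff` parameter are hypothetical, and per-try timeouts are left out for brevity.

```python
import time
from typing import Callable, Iterable, Tuple

Response = Tuple[int, str]


def call_with_retries(send: Callable[[], Response], attempts: int = 3,
                      retry_on: Iterable[int] = (502, 503, 504),
                      backoff: float = 0.025,
                      sleep: Callable[[float], None] = time.sleep) -> Response:
    """Send a request, retrying up to `attempts` times on retryable status codes."""
    retryable = set(retry_on)
    status, body = send()  # the initial try is not counted as a retry
    for retry in range(attempts):
        if status not in retryable:
            break
        sleep(backoff * (2 ** retry))  # exponential backoff between tries
        status, body = send()
    return status, body


# An upstream that fails twice, then succeeds.
replies = iter([(503, "busy"), (503, "busy"), (200, "ok")])
print(call_with_retries(lambda: next(replies), sleep=lambda s: None))  # (200, 'ok')
```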

Istio Benefits

The key benefits of Istio are:

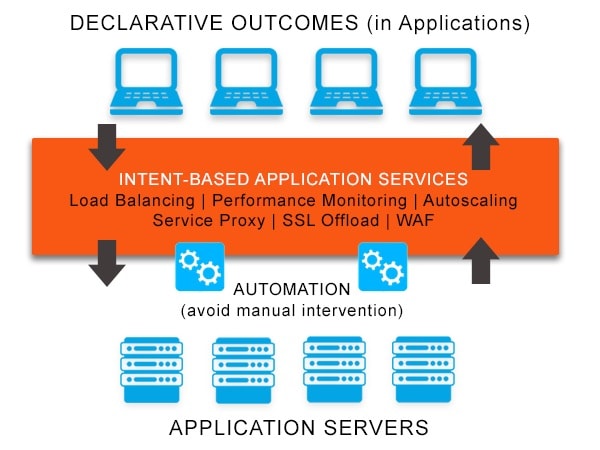

Security. Following Istio security best practices, users can create secure networks of distributed services with service-to-service authentication, load balancing, monitoring, and other options, without changing service code. Istio can also be deployed behind a web application firewall (WAF).

Support. Whether deployed behind a WAF or in a sidecar proxy configuration, Istio uses its control plane functionality to support services throughout the environment:

- Automatic load balancing for WebSocket, gRPC, HTTP, and TCP traffic

- Traffic control with routing rules, failovers, retries, and fault injection

- A configuration API supporting rate limits, access controls, and quotas

- Automatic metrics, logs, and traces including cluster egress and ingress

- Secure service-to-service in-cluster communication
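Rate limits like those in the list above are commonly enforced with a token bucket; Envoy's local rate limit filter, for example, is configured with one. The Python sketch below shows the basic algorithm only. The `TokenBucket` class and its parameter names are hypothetical and do not correspond to Istio or Envoy configuration fields.

```python
import time
from typing import Callable


class TokenBucket:
    """Illustrative token-bucket limiter; parameter names are hypothetical."""

    def __init__(self, max_tokens: int, refill_per_second: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_tokens = float(max_tokens)
        self.refill_per_second = refill_per_second
        self.clock = clock
        self.tokens = float(max_tokens)  # start with a full bucket
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Add tokens for the elapsed time, capped at the bucket size.
        self.tokens = min(self.max_tokens,
                          self.tokens + (now - self.last) * self.refill_per_second)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # a proxy would typically answer HTTP 429 here


# A fake clock keeps the example deterministic.
now = [0.0]
bucket = TokenBucket(max_tokens=2, refill_per_second=1.0, clock=lambda: now[0])
first_three = [bucket.allow() for _ in range(3)]
print(first_three)   # [True, True, False]
now[0] = 1.0
after_refill = bucket.allow()
print(after_refill)  # True: one token was refilled after one second
```

The bucket size sets the allowed burst, while the refill rate sets the sustained request rate.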

Safe, secure, reliable communications. Handling security and connectivity in the Istio service mesh is more consistent and efficient than implementing the desired behavior directly in each application.

Communications abstracted away from the application layer. Istio abstracts the control plane and data plane away from the applications and physical infrastructure. This makes securing, managing, and observing distributed applications simpler.

Traffic management. Istio enables traffic splitting in support of blue/green deployments, A/B testing, and canary deployments of applications.
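In Istio, a traffic split is expressed as `weight` values on the route destinations of a VirtualService, for example 90 percent to one subset and 10 percent to a canary. The Python sketch below illustrates weighted selection with the standard library's `random.Random.choices`; the subset names and the `pick_subset` helper are hypothetical, and the proxy's actual selection logic may differ.

```python
import random
from collections import Counter
from typing import Dict


def pick_subset(weights: Dict[str, int], rng: random.Random) -> str:
    """Pick a destination subset with probability proportional to its weight."""
    subsets = list(weights)
    return rng.choices(subsets, weights=[weights[s] for s in subsets], k=1)[0]


rng = random.Random(7)
split = {"v1": 90, "v2": 10}  # hypothetical canary: about 10% of requests go to v2
counts = Counter(pick_subset(split, rng) for _ in range(10_000))
print(counts)  # roughly 9,000 for v1 and 1,000 for v2
```

Shifting more traffic to the canary is then just a matter of changing the weights, with no change to either version's code.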

API gateway vs Istio gateways. Istio ingress gateways are cloud- and Kubernetes-native, unlike most standard API gateway options. With Istio, ingress control is part of load balancing and service deployment.

Portability. Using the Istio system, a single service can operate in multiple environments or clouds for redundancy.

How to Use Istio

Because Istio is an open source platform, it can be downloaded freely from community-led repositories on GitHub. Commercial distributions and support are also available from providers such as Solo.io, but they are not required.

Once Istio is obtained, the user deploys the Istio control plane into Kubernetes clusters. The Envoy proxies form the data plane and enforce the traffic policies configured through the control plane, for both north-south (traffic entering and leaving the mesh) and east-west (service-to-service) traffic.

Istio operators install Istio in Kubernetes using Helm charts, YAML manifests, or the istioctl command-line tool. The Istio control plane is then used to set policies, manage configurations, and perform updates.

A virtual service in Istio defines a set of traffic routing rules and applies them to requests for a service. Each routing rule defines criteria for matching traffic of a specific protocol. Rules are evaluated in order, and matched traffic is sent to the destination service, or a subset of it, defined in the service registry.
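The first-match behavior of virtual service routing rules can be sketched in a few lines of Python. The example is loosely modeled on Istio's Bookinfo sample, where requests carrying the header `end-user: jason` go to version v2 of the reviews service and everything else goes to v1. `Route`, `route_request`, and the `"reviews/v2"` destination notation are hypothetical; real Istio matches also support prefix and regex header matching, which this sketch omits.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Route:
    """One hypothetical routing rule: match conditions plus a destination."""
    destination: str                  # "service/subset", an illustrative notation
    uri_prefix: Optional[str] = None  # match only paths starting with this prefix
    headers: Dict[str, str] = field(default_factory=dict)  # exact header matches

    def matches(self, path: str, headers: Dict[str, str]) -> bool:
        if self.uri_prefix is not None and not path.startswith(self.uri_prefix):
            return False
        return all(headers.get(k) == v for k, v in self.headers.items())


def route_request(rules: List[Route], path: str,
                  headers: Dict[str, str]) -> Optional[str]:
    """Return the destination of the first matching rule, evaluated in order."""
    for rule in rules:
        if rule.matches(path, headers):
            return rule.destination
    return None  # no rule matched


rules = [
    Route(destination="reviews/v2", headers={"end-user": "jason"}),
    Route(destination="reviews/v1"),  # no conditions: catch-all default
]
print(route_request(rules, "/reviews/1", {"end-user": "jason"}))  # reviews/v2
print(route_request(rules, "/reviews/1", {}))                     # reviews/v1
```

Because rules are checked in order, the more specific rule must come before the catch-all.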

Istio Alternatives

There are various alternatives to Istio, as well as tools that enhance or replace parts of it. These include NGINX, HashiCorp Consul, HAProxy, and, of course, Avi Networks and VMware.

Istio vs NGINX. NGINX is web server software that can also function as a load balancer, Hypertext Transfer Protocol (HTTP) cache, reverse proxy, and mail proxy. NGINX can run as an ingress controller, but Istio also provides its own ingress gateway capability.

Consul vs Istio. HashiCorp Consul is a service networking solution for managing secure network connectivity between services and across multi-cloud and on-prem environments. Consul offers traffic management, service mesh, service discovery, and automated network infrastructure updates to devices.

HAProxy vs Istio. HAProxy is a load balancer and proxy that can also serve as an ingress controller, but unlike Istio or Consul, it does not use Envoy as its proxy. HAProxy is also not designed to serve static files or run dynamic applications.

Envoy vs Istio. The difference between Istio and Envoy is a common question, but Istio vs Envoy is a false distinction. Envoy is a standalone proxy originally developed at Lyft, and Istio uses an extended version of it as its data plane. As with Istio and Kubernetes, the two are complementary rather than competing.

Read on to learn about how Istio, VMware, and Avi Networks compare and why Avi Networks/VMware together with Tanzu offer the best alternative to Istio.