VLAN Configuration Definition

A virtual LAN (VLAN) is a logical overlay network that groups a set of devices and isolates their traffic from the rest of a shared LAN or physical network in the same location. Like the underlying LAN, a VLAN typically operates at the Ethernet level, Layer 2 of the network, and forms its own broadcast domain: the set of devices that receive one another's Ethernet broadcast frames.

Although the computers in a VLAN may be located on a number of different LAN segments, Layer 2 VLAN configuration ensures they communicate as if they were attached to the same wire.

A single location can have many interconnected LANs, because once traffic engages Layer 3 functions across a router, it is no longer on the same LAN.

VLANs are flexible because they are based on logical rather than physical connections. VLAN configurations partition one network into many virtual networks that can serve various use cases and meet many requirements.

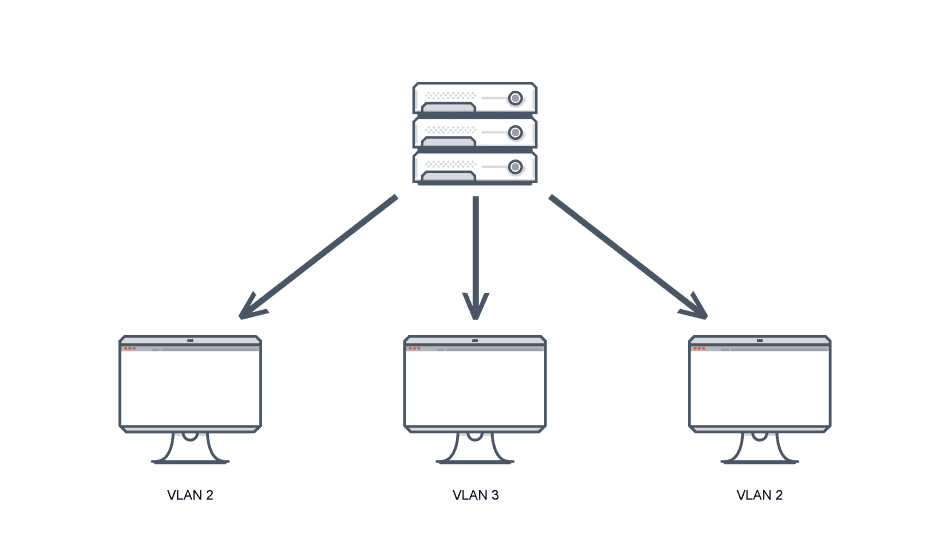

However, this partitioning also means that communication between VLANs must travel through a router. A network switch assigns VLAN membership, meaning the specific end-stations that belong to each VLAN, to distinguish among the VLANs. Before it can share the broadcast domain with other end-stations on the VLAN, an end-station must itself become a member of that VLAN.

A VLAN database is used to store the VLAN ID, MTU, name, and other VLAN data.
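As a rough illustration of what such a database holds, the sketch below models it as an in-memory table keyed by VLAN ID. The class names (`VlanEntry`, `VlanDatabase`), the default MTU of 1500, and the duplicate check are assumptions made for this example; real switches store and validate this data in vendor-specific ways.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VlanEntry:
    """One row of the toy VLAN database (hypothetical structure)."""
    vlan_id: int
    name: str
    mtu: int = 1500  # assumed default; a common Ethernet MTU


class VlanDatabase:
    """Toy in-memory VLAN table keyed by VLAN ID."""

    def __init__(self):
        self._entries = {}

    def add(self, vlan_id, name, mtu=1500):
        # 802.1Q reserves IDs 0 and 4095, so usable IDs are 1..4094.
        if not 1 <= vlan_id <= 4094:
            raise ValueError(f"VLAN ID out of range: {vlan_id}")
        if vlan_id in self._entries:
            raise ValueError(f"VLAN {vlan_id} already exists")
        entry = VlanEntry(vlan_id, name, mtu)
        self._entries[vlan_id] = entry
        return entry

    def get(self, vlan_id):
        return self._entries[vlan_id]


db = VlanDatabase()
db.add(10, "engineering")
db.add(20, "finance", mtu=9000)
print(db.get(20))
```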

VLAN Configuration FAQs

What is VLAN Configuration?

VLANs classify and prioritize traffic and create isolated subnets. These allow selected devices to operate together, whether or not they are located on the same physical LAN.

Enterprises manage and partition traffic with VLANs. An organization might separate the data traffic of engineering, legal, and finance employees by adding a VLAN for each department. This way, even if multiple applications have different latency and throughput requirements, they can run on the same server and share a link.

A VLAN ID identifies each VLAN on network switches. Each port on a switch has one or more assigned VLAN IDs and falls into a default VLAN if no other VLAN is assigned. Each VLAN provides data-link access to all hosts connected to the switch ports configured with its VLAN ID.

The header of every Ethernet frame sent on a VLAN carries a VLAN tag, which includes a 12-bit VLAN ID field. IEEE defines VLAN tagging in the 802.1Q standard. Because the ID field is 12 bits long, up to 4,096 VLAN IDs exist per switching domain; the standard reserves two of them (0 and 4095), leaving 4,094 usable VLANs.
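To make the tag layout concrete, the following sketch packs and unpacks an 802.1Q tag. In the standard, the 4-byte tag consists of a 16-bit tag protocol identifier (0x8100) followed by 16 bits of tag control information: a 3-bit priority, a 1-bit drop-eligible indicator, and the 12-bit VLAN ID. The function names `build_vlan_tag` and `parse_vlan_tag` are hypothetical helpers for this example.

```python
import struct

TPID_8021Q = 0x8100  # value that marks a frame as 802.1Q-tagged


def build_vlan_tag(vlan_id, priority=0, dei=0):
    """Return the 4-byte 802.1Q tag for the given fields."""
    if not 0 <= vlan_id <= 0xFFF:
        raise ValueError("VLAN ID must fit in 12 bits")
    if not 0 <= priority <= 7:
        raise ValueError("priority must fit in 3 bits")
    if dei not in (0, 1):
        raise ValueError("DEI is a single bit")
    # Layout of the 16-bit control field: PPP D VVVVVVVVVVVV
    tci = (priority << 13) | (dei << 12) | vlan_id
    return struct.pack("!HH", TPID_8021Q, tci)


def parse_vlan_tag(tag):
    """Split a 4-byte 802.1Q tag back into its fields."""
    tpid, tci = struct.unpack("!HH", tag)
    if tpid != TPID_8021Q:
        raise ValueError("not an 802.1Q tag")
    return {
        "priority": tci >> 13,
        "dei": (tci >> 12) & 0x1,
        "vlan_id": tci & 0x0FFF,
    }


tag = build_vlan_tag(vlan_id=100, priority=5)
print(tag.hex())            # 8100a064
print(parse_vlan_tag(tag))  # {'priority': 5, 'dei': 0, 'vlan_id': 100}
```

Masking with `0x0FFF` is what limits the ID space to 2^12 = 4,096 values.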

Attached hosts send Ethernet frames without VLAN tags. The switch inserts the VLAN tag: in a static VLAN, the VLAN ID of the associated ingress port; in a dynamic VLAN, the tag associated with the device's ID.

Switches forward frames only to ports associated with the frame's VLAN. Trunk ports, the links between switches, accept and forward traffic for any VLAN in use on both sides of the trunk. The VLAN tag is removed at the destination switch port, before transmission to the destination device.
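The tagging and forwarding behavior described above can be sketched with a small model. This is a simplified illustration, not a description of any real switch: it covers only broadcast flooding (no MAC learning), ignores untagged native VLANs on trunks, and the names `Port` and `ToySwitch` are invented for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Port:
    name: str
    mode: str                      # "access" or "trunk"
    access_vlan: int = 1           # used only by access ports
    allowed_vlans: set = field(default_factory=set)  # used only by trunks


class ToySwitch:
    def __init__(self, ports):
        self.ports = {p.name: p for p in ports}

    def ingress_vlan(self, port_name, frame_vlan=None):
        """Decide which VLAN an incoming frame belongs to."""
        port = self.ports[port_name]
        if port.mode == "access":
            # Hosts send untagged frames; the port's VLAN is applied.
            return port.access_vlan
        # Trunk: the frame arrives already tagged.
        if frame_vlan not in port.allowed_vlans:
            raise ValueError(f"VLAN {frame_vlan} not allowed on {port_name}")
        return frame_vlan

    def flood(self, in_port, frame_vlan=None):
        """Return (port, tag) pairs a broadcast frame is sent out of.

        A tag of None means the frame leaves untagged.
        """
        vlan = self.ingress_vlan(in_port, frame_vlan)
        out = []
        for port in self.ports.values():
            if port.name == in_port:
                continue
            if port.mode == "access" and port.access_vlan == vlan:
                out.append((port.name, None))   # tag stripped for the host
            elif port.mode == "trunk" and vlan in port.allowed_vlans:
                out.append((port.name, vlan))   # tag kept between switches
        return out


sw = ToySwitch([
    Port("p1", "access", access_vlan=10),
    Port("p2", "access", access_vlan=10),
    Port("p3", "access", access_vlan=20),
    Port("t1", "trunk", allowed_vlans={10, 20}),
])
print(sw.flood("p1"))                 # [('p2', None), ('t1', 10)]
print(sw.flood("t1", frame_vlan=20))  # [('p3', None)]
```

The host on `p3` never sees the broadcast from `p1`, because the two ports sit in different VLANs.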

VLANs can be static/port-based or dynamic/use-based.

Engineers create port-based VLANs by assigning each network switch port to a VLAN. These configured ports communicate only on their assigned VLANs. Port-based VLANs are not truly static, because the assignment of access ports can be changed while in use, either manually or with automated tools.

Some VLAN use cases are simple, such as creating segregated access to devices in an office setting. Others are more complex, such as preventing the trading and retail departments of a bank from interacting or accessing each other's resources.

Benefits of VLAN Configuration

Network engineers typically configure VLANs for multiple reasons, including:

Enhanced performance. VLANs reduce the amount of traffic each endpoint sees, improving performance for devices. By breaking up broadcast domains, they also reduce the number of hosts that receive each broadcast and limit the use of network resources to relevant traffic. It is also possible to define traffic-handling rules per VLAN, such as prioritizing some kinds of traffic for specific business use cases.

Improved security. VLAN partitioning can also enable more control over which devices can access each other to improve security. For example, network access can be restricted to specific VLANs for IoT devices.

Reduced administrative burden. VLANs let administrators group endpoints for nontechnical purposes. For example, they may group devices by department on a single VLAN.

VLANs also have some disadvantages.

A single network may host hundreds or thousands of distinct organizations, and each may need hundreds of VLANs. However, there is a limit of 4,096 VLAN IDs per switching domain. Several technologies address this limitation, including network virtualization, Virtual Extensible LAN (VXLAN), and Encapsulation DOT1q in Cisco VLAN configuration. Some of them allow more virtual networks to be defined by supporting larger identifiers; VXLAN, for example, uses a 24-bit network identifier.
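The size of the identifier space follows directly from the field width. The short calculation below compares the 12-bit 802.1Q VLAN ID with the 24-bit VXLAN network identifier (VNI); the 24-bit width comes from the VXLAN specification rather than from this article, and the variable names are illustrative.

```python
VLAN_ID_BITS = 12    # width of the 802.1Q VLAN ID field
VXLAN_VNI_BITS = 24  # width of the VXLAN network identifier

vlan_ids = 2 ** VLAN_ID_BITS
usable_vlans = vlan_ids - 2  # IDs 0 and 4095 are reserved by 802.1Q
vxlan_segments = 2 ** VXLAN_VNI_BITS

print(vlan_ids)        # 4096
print(usable_vlans)    # 4094
print(vxlan_segments)  # 16777216
```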

Another challenge is identifying which VLAN applies at each access point (AP) and wall jack.

Best Practice VLAN Configuration Recommendations

Learning how to configure VLANs can be complex and time-consuming, but the significant advantages VLANs offer to enterprise networks are often worthwhile.

Configuring the VLAN switches is the first step. Whenever the network configuration is altered, teams must also update the switches and their configurations, or add an additional VLAN.

Second, to protect network security, set up VLAN access control lists (VACLs) that control access wherever packets enter or exit a VLAN. Next, apply the configuration using the command-line interface.

Packages that automate and simplify management of VLAN configuration are available from various third-party equipment vendors, and they reduce the likelihood of error. Because these packages maintain a complete record of each set of configuration settings, they can rapidly reinstall the last working configuration in case of an error. They also make it simpler to add and remove VLANs, and to check and troubleshoot VLAN configurations.

The IEEE 802.1Q standard describes how to identify VLANs with tagging. An Ethernet frame begins with the destination and source media access control (MAC) addresses, followed by the 32-bit VLAN tag, which contains the 12-bit VLAN identifier.
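As a sketch of where the tag sits in a frame, the parser below reads the two 6-byte MAC addresses and then checks whether the next two bytes carry the 802.1Q identifier 0x8100. The function name `parse_ethernet_header` and the sample frame bytes are made up for illustration; the example handles a single tag only.

```python
import struct


def parse_ethernet_header(frame):
    """Extract MAC addresses, optional VLAN ID, and EtherType."""
    if len(frame) < 14:
        raise ValueError("frame too short for an Ethernet header")
    dst, src = frame[0:6], frame[6:12]
    (ethertype,) = struct.unpack("!H", frame[12:14])
    vlan_id = None
    if ethertype == 0x8100:  # 802.1Q tag present
        if len(frame) < 18:
            raise ValueError("frame too short for an 802.1Q tag")
        # The next 16 bits are priority/DEI/VLAN ID, then the real EtherType.
        tci, ethertype = struct.unpack("!HH", frame[14:18])
        vlan_id = tci & 0x0FFF
    return {
        "dst": dst.hex(":"),
        "src": src.hex(":"),
        "vlan_id": vlan_id,
        "ethertype": ethertype,
    }


# Broadcast frame from 00:11:22:33:44:55, tagged for VLAN 20, carrying IPv4.
sample = bytes.fromhex("ffffffffffff" "001122334455" "8100" "0014" "0800")
info = parse_ethernet_header(sample)
print(info["dst"], info["src"], info["vlan_id"], hex(info["ethertype"]))
```

An untagged frame takes the other branch: `vlan_id` stays `None` and the EtherType is read from bytes 12 and 13.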

Can You Configure VLAN Routing on a Firewall?

Yes, users can define VLANs for single firewalls and clusters of firewalls.

Does Avi Support VLAN Configuration?

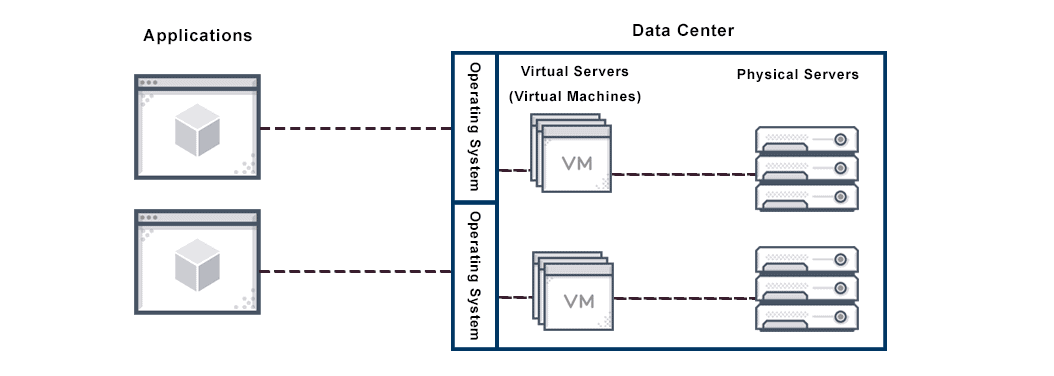

Avi Vantage supports vLAN trunking on bare-metal servers and vLAN interface configuration on Linux server cloud. If the Avi Controller is deployed on a bare-metal server, the individual physical links of the server can be configured to support 802.1q-tagged virtual LANs (vLANs). Each vLAN interface has its own IP address. Multiple vLAN interfaces per physical link are supported.

Learn more about how Avi supports VLANs.

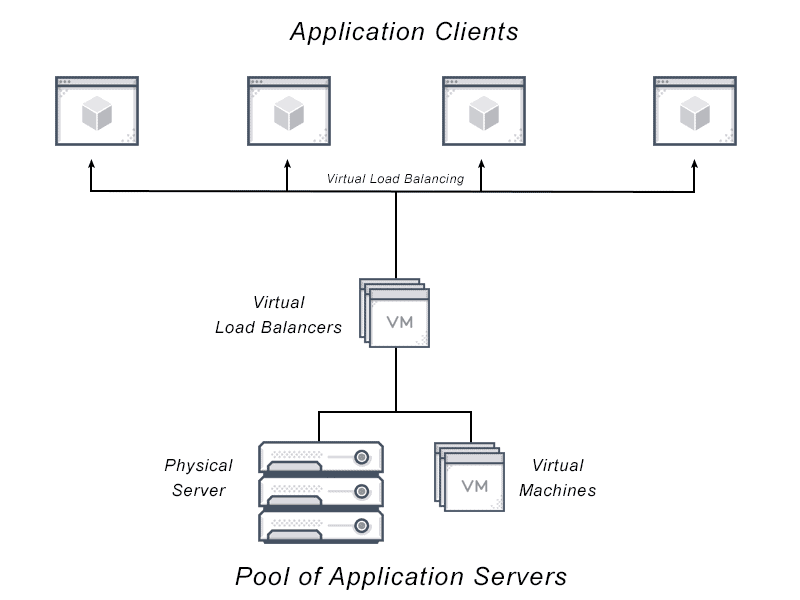
