Load Balancing Definition

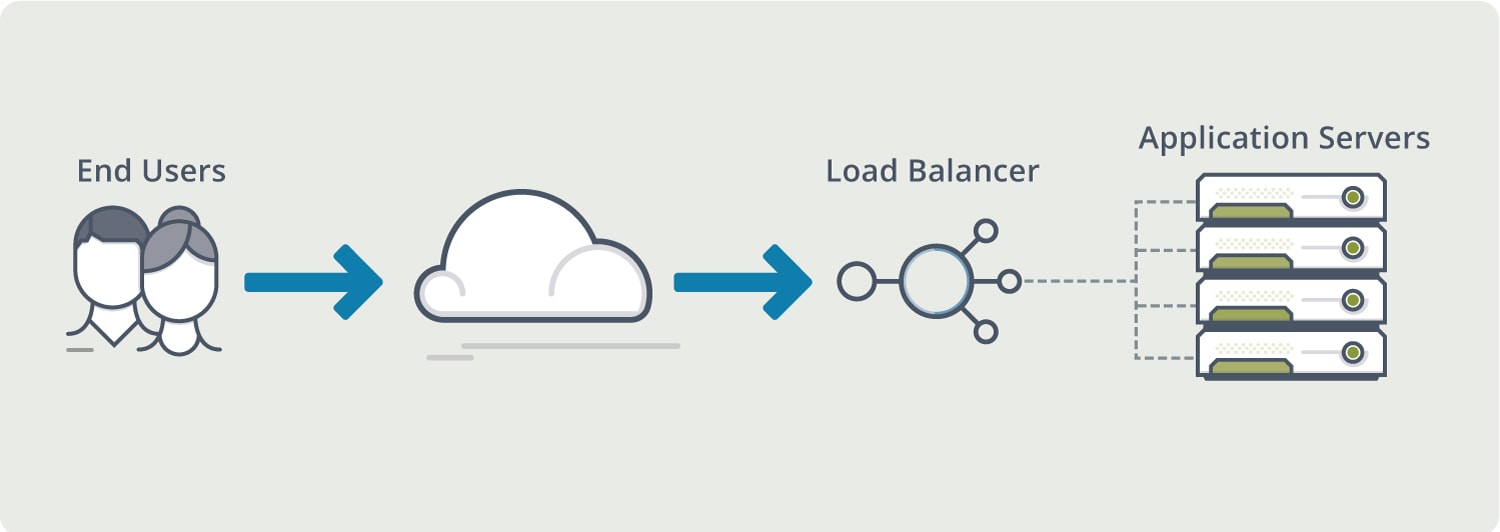

Load balancing is the process of distributing network traffic across multiple servers so that no single server bears too much demand. By spreading the work evenly, load balancing improves application responsiveness. It also increases the availability of applications and websites for users. Most modern, high-traffic applications rely on load balancing.

How Does Load Balancing Work?

A load balancer sits between clients and a group of backend servers and forwards each incoming request to one of those servers. When one application server becomes unavailable, the load balancer directs all new requests to the other available servers. Load balancing can be provided by hardware appliances or by software. Hardware appliances often run proprietary software optimized for custom processors, and as traffic increases, more appliances must be added to handle the volume. Load balancing software usually runs on less expensive, standard x86 hardware. Installing the software in cloud environments such as AWS EC2 eliminates the need for a physical appliance.
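The failover behavior described above can be illustrated with a small sketch. It assumes a hypothetical `Server` record whose `healthy` flag is kept up to date by some external health check (not shown); the hypothetical `LoadBalancer.pick()` simply rotates over whichever servers are currently marked healthy, so an unavailable server stops receiving new requests. This is an illustration of the idea, not how any particular product implements it.

```python
from dataclasses import dataclass, field


@dataclass
class Server:
    name: str
    healthy: bool = True  # hypothetical flag, assumed to be set by an external health check


@dataclass
class LoadBalancer:
    servers: list[Server]
    _next: int = field(default=0, init=False, repr=False)  # rotation counter

    def pick(self) -> Server:
        """Return the next server, skipping any that are marked unavailable."""
        available = [s for s in self.servers if s.healthy]
        if not available:
            raise RuntimeError("no healthy servers available")
        server = available[self._next % len(available)]
        self._next += 1
        return server


lb = LoadBalancer([Server("app-1"), Server("app-2"), Server("app-3")])
lb.servers[1].healthy = False  # simulate app-2 failing its health check
print([lb.pick().name for _ in range(4)])  # app-2 never appears
```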

What Are Load Balancing Methods?

Load balancing methods include:

- Geographic load balancing

- TCP load balancing

- UDP load balancing

- Multi-site load balancing

- Load balancing-as-a-service

- SDN (software-defined networking) load balancing

- Global server load balancing

Why Is Load Balancing Important?

Network load balancing can do more than act as a traffic cop for incoming requests. Some load balancing software also offers predictive analytics that identify application traffic bottlenecks before they occur. These insights give an organization actionable information that supports automation and can help drive business decisions.

What Is Load Balancing In Cloud Computing?

In the cloud, load balancing is delivered as software. This provides a managed, off-site solution that can draw resources from an elastic pool of servers. Cloud computing also allows hybrid deployments that combine hosted and in-house solutions: for example, primary load balancing could run in-house while the backup runs in the cloud. This arrangement helps keep application and web servers highly available.
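The primary/backup arrangement above is a form of active-passive failover. A minimal sketch, assuming a hypothetical `select_site` helper, site names invented for illustration, and an `is_healthy` callback standing in for whatever health check a real deployment would use: traffic goes to the first healthy site in priority order, so the cloud backup is used only when the in-house primary is down.

```python
from typing import Callable


def select_site(sites: list[str], is_healthy: Callable[[str], bool]) -> str:
    """Active-passive failover: return the first healthy site in priority order."""
    for site in sites:
        if is_healthy(site):
            return site
    raise RuntimeError("no healthy site available")


# Hypothetical site names: the in-house primary is listed first, the cloud backup second.
sites = ["in-house-primary", "cloud-backup"]
down = {"in-house-primary"}  # simulate an outage at the primary site
print(select_site(sites, lambda s: s not in down))  # falls back to the cloud backup
```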

Load balancing in the cloud has the following benefits:

- Single point of control for distributed load balancing

- Pinpoint analytics and visibility into web application performance

- Predictive autoscaling of load balancers, backend servers and applications

- Faster application delivery, from weeks to minutes

- Visual troubleshooting of application issues and health checks in minutes

- No over-provisioning

What Are Load Balancing Algorithms?

Load balancers can choose a backend server using several different algorithms, each best suited to a particular situation.

- Least Connection Method: directs traffic to the server with the fewest active connections. Most useful when traffic includes a large number of persistent connections that are unevenly distributed between the servers.

- Least Response Time Method: directs traffic to the server with the fewest active connections and outstanding requests and the lowest average response time.

- Round Robin Method: rotates through servers by directing traffic to the first available server and then moving that server to the bottom of the queue. Most useful when servers have equal specifications, are in a single geographic location, and there are not many persistent connections.

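- IP Hash: a hash of the client's IP address determines which server receives the request, so a given client is consistently sent to the same server as long as the server pool does not change.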
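The four algorithms above can be sketched in a few lines each. This is a minimal illustration, assuming a hypothetical `Backend` record that already knows its active connection count and average response time (a real load balancer would measure these). How least-response-time combines its two metrics varies between products; this sketch assumes ranking by average response time and breaking ties by connection count. The IP hash uses SHA-256 rather than Python's built-in `hash()`, which is randomized per process for strings, so the client-to-server mapping stays stable across restarts.

```python
import hashlib
from collections import deque
from dataclasses import dataclass


@dataclass
class Backend:  # hypothetical record; metrics are assumed to be measured elsewhere
    name: str
    active_connections: int = 0
    avg_response_ms: float = 0.0


def least_connection(backends: list[Backend]) -> Backend:
    # Fewest active connections wins; min() keeps the first backend on a tie.
    return min(backends, key=lambda b: b.active_connections)


def least_response_time(backends: list[Backend]) -> Backend:
    # Assumed combination: lowest average response time, then fewest connections.
    return min(backends, key=lambda b: (b.avg_response_ms, b.active_connections))


class RoundRobin:
    def __init__(self, backends: list[Backend]) -> None:
        self._queue = deque(backends)

    def pick(self) -> Backend:
        backend = self._queue.popleft()  # take the server at the front of the queue
        self._queue.append(backend)      # move it to the bottom of the queue
        return backend


def ip_hash(client_ip: str, backends: list[Backend]) -> Backend:
    # Stable hash of the client IP, reduced modulo the pool size.
    digest = hashlib.sha256(client_ip.encode()).digest()
    return backends[int.from_bytes(digest[:8], "big") % len(backends)]


pool = [Backend("a", 12, 40.0), Backend("b", 3, 95.0), Backend("c", 3, 30.0)]
print(least_connection(pool).name)     # b (tied with c, but listed first)
print(least_response_time(pool).name)  # c
rr = RoundRobin(pool)
print([rr.pick().name for _ in range(4)])  # ['a', 'b', 'c', 'a']
print(ip_hash("203.0.113.7", pool).name == ip_hash("203.0.113.7", pool).name)  # True
```

Note that with IP hash, adding or removing a server changes the modulus, so many clients are remapped; consistent hashing is a common way to reduce that churn.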

Does VMware NSX Advanced Load Balancer Provide Load Balancing?

Yes. VMware NSX Advanced Load Balancer is a platform that provides scalable application delivery for any infrastructure, with the aim of delivering speed, performance and reliability for modern enterprise environments. VMware NSX Advanced Load Balancer provides the following benefits:

- Elastic Load Balancing: Its software architecture provides the elasticity needed to scale load balancing services up or out dynamically.

- Central Control and Automation: Centrally manage application resources to overcome the complexity of delivering applications across data centers and public clouds with a distributed architecture.

- Lower Total Cost of Ownership: Provision faster than with legacy hardware and avoid expensive hardware refreshes and management.

- Responsive Self-Service: Allow business units to deploy applications without using central IT resources, increasing responsiveness and reducing support costs.

- Application Analytics and Troubleshooting: A single dashboard displays real-time telemetry for actionable insights and efficient troubleshooting.
